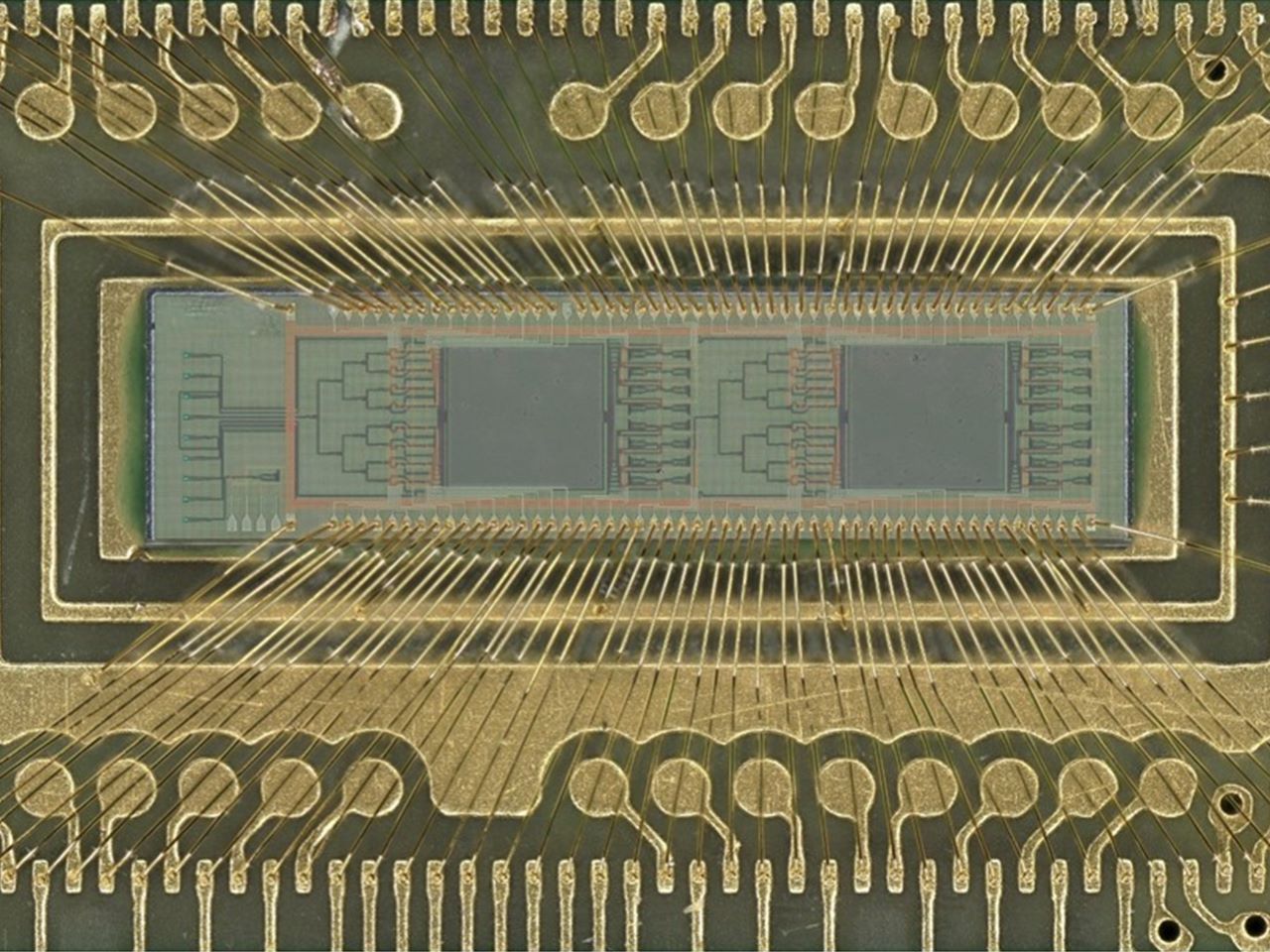

New light-based chip boosts power efficiency of AI tasks 100-fold

A team of engineers has developed a new kind of computer chip that uses light instead of electricity to perform one of the most power-intensive parts of artificial intelligence: image recognition and similar pattern-finding tasks. Using light dramatically cuts the power needed to perform these tasks, with efficiency 10 or even 100 times that of current chips performing the same calculations. This approach could help rein in the enormous demand for electricity that is straining power grids and enable higher-performance AI models and systems.

This machine learning task, called “convolution,” is at the heart of how AI systems process pictures, videos and even language. Convolution operations currently require large amounts of computing resources and time. These new chips, though, use lasers and microscopic lenses fabricated onto circuit boards to perform convolutions with far less power and at faster speeds.

In tests, the new chip successfully classified handwritten digits with about 98% accuracy, on par with traditional chips.

“Performing a key machine learning computation at near zero energy is a leap forward for future AI systems,” said study leader Volker J. Sorger, Ph.D., the Rhines Endowed Professor in Semiconductor Photonics at the University of Florida. “This is critical to keep scaling up AI capabilities in years to come.”

“This is the first time anyone has put this type of optical computation on a chip and applied it to an AI neural network,” said Hangbo Yang, Ph.D., a research associate professor in Sorger’s group at UF and co-author of the study.

Sorger’s team collaborated with researchers at UF’s Florida Semiconductor Institute, the University of California, Los Angeles and George Washington University on the study. The team published their findings, which were supported by the Office of Naval Research, Sept. 8 in the journal Advanced Photonics.

The prototype chip uses two sets of miniature Fresnel lenses made using standard manufacturing processes.
These two-dimensional versions of the same lenses found in lighthouses are just a fraction of the width of a human hair. Machine learning data, such as from an image or other pattern-recognition tasks, are converted into laser light on-chip and passed through the lenses. The results are then converted back into a digital signal to complete the AI task. This lens-based convolution system is not only more computationally efficient, but it also reduces the computing time. Using light instead of electricity has other benefits, too. Sorger’s group designed a chip that could use different colored lasers to process multiple data streams in parallel. “We can have multiple wavelengths, or colors, of light passing through the lens at the same time,” Yang said. “That’s a key advantage of photonics.” Chip manufacturers, such as industry leader NVIDIA, already incorporate optical elements into other parts of their AI systems, which could make the addition of convolution lenses more seamless. “In the near future, chip-based optics will become a key part of every AI chip we use daily,” said Sorger, who is also deputy director for strategic initiatives at the Florida Semiconductor Institute. “And optical AI computing is next.”
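
As a hedged illustration of why lenses suit convolution: in Fourier optics, a lens produces the Fourier transform of a light field at its focal plane, and the convolution theorem says a convolution becomes an element-wise product in the Fourier domain. The article does not detail the chip's internal math, so treating the two lens sets as a forward and an inverse transform is an assumption here. The pure-Python sketch below (all function names are illustrative, not taken from the study) checks numerically that the Fourier route matches a direct circular convolution.

```python
import cmath


def dft(values, inverse=False):
    """Naive O(n^2) discrete Fourier transform; adequate for a tiny demo."""
    n = len(values)
    sign = 1 if inverse else -1
    out = []
    for k in range(n):
        total = sum(
            values[t] * cmath.exp(sign * 2j * cmath.pi * k * t / n)
            for t in range(n)
        )
        # The inverse transform carries the 1/n normalization.
        out.append(total / n if inverse else total)
    return out


def circular_convolve_direct(signal, kernel):
    """Circular convolution computed straight from its definition."""
    n = len(signal)
    return [
        sum(signal[j] * kernel[(i - j) % n] for j in range(n))
        for i in range(n)
    ]


def circular_convolve_fourier(signal, kernel):
    """Same convolution via transform, multiply, inverse transform.

    Assumption: this mirrors the lens -> mask -> lens idea only loosely.
    """
    product = [a * b for a, b in zip(dft(signal), dft(kernel))]
    return [v.real for v in dft(product, inverse=True)]


signal = [1.0, 2.0, 3.0, 4.0]
kernel = [0.5, 0.25, 0.0, 0.25]  # hypothetical kernel, same length as signal

direct = circular_convolve_direct(signal, kernel)
fourier = circular_convolve_fourier(signal, kernel)
print(direct)
print(fourier)
```

Both routes agree up to floating-point rounding. Under the assumption above, an optical chip would obtain the transforms passively as light passes through the lenses, rather than through arithmetic like this.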

Researchers warn of rise in AI-created non-consensual explicit images

A team of researchers, including Kevin Butler, Ph.D., a professor in the Department of Computer and Information Science and Engineering at the University of Florida, is sounding the alarm on a disturbing trend in artificial intelligence: the rapid rise of AI-generated sexually explicit images created without the subject’s consent. With funding from the National Science Foundation, Butler and colleagues from UF, Georgetown University and the University of Washington investigated a growing class of tools that allow users to generate realistic nude images from uploaded photos — tools that require little skill, cost virtually nothing and are largely unregulated. “Anybody can do this,” said Butler, director of the Florida Institute for Cybersecurity Research. “It’s done on the web, often anonymously, and there’s no meaningful enforcement of age or consent.” The team has coined the term SNEACI, short for synthetic non-consensual explicit AI-created imagery, to define this new category of abuse. The acronym, pronounced “sneaky,” highlights the secretive and deceptive nature of the practice. “SNEACI really typifies the fact that a lot of these are made without the knowledge of the potential victim and often in very sneaky ways,” said Patrick Traynor, a professor and associate chair of research in UF's Department of Computer and Information Science and Engineering and co-author of the paper. In their study, which will be presented at the upcoming USENIX Security Symposium this summer, the researchers conducted a systematic analysis of 20 AI “nudification” websites. These platforms allow users to upload an image, manipulate clothing, body shape and pose, and generate a sexually explicit photo — usually in seconds. Unlike traditional tools like Photoshop, these AI services remove nearly all barriers to entry, Butler said. “Photoshop requires skill, time and money,” he said. 
“These AI application websites are fast, cheap (from free to as little as six cents per image) and don’t require any expertise.”

According to the team’s review, women are disproportionately targeted, but the technology can be used on anyone, including children. While the researchers did not test tools with images of minors due to legal and ethical constraints, they found “no technical safeguards preventing someone from doing so.” Only seven of the 20 sites they examined included terms of service that require image subjects to be over 18, and even fewer enforced any kind of user age verification.

“Even when sites asked users to confirm they were over 18, there was no real validation,” Butler said. “It’s an unregulated environment.”

The platforms operate with little transparency, using cryptocurrency for payments and hosting on mainstream cloud providers. Seven of the sites studied used Amazon Web Services, and 12 were supported by Cloudflare, legitimate services that inadvertently support these operations.

“There’s a misconception that this kind of content lives on the dark web,” Butler said. “In reality, many of these tools are hosted on reputable platforms.”

Butler’s team also found little to no information about how the sites store or use the generated images.

“We couldn’t find out what the generators are doing with the images once they’re created,” he said. “It doesn’t appear that any of this information is deleted.”

High-profile cases have already brought attention to the issue. Celebrities such as Taylor Swift and Melania Trump have reportedly been victims of AI-generated non-consensual explicit images. Earlier this year, Melania Trump voiced support for the Take It Down Act, which targets these types of abuses and was signed into law this week by President Donald Trump.

But the impact extends beyond the famous. Butler cited a case in South Florida where a city councilwoman stepped down after fake explicit images of her, created using AI, were circulated online.
“These images aren’t just created for amusement,” Butler said. “They’re used to embarrass, humiliate and even extort victims. The mental health toll can be devastating.” The researchers emphasized that the technology enabling these abuses was originally developed for beneficial purposes — such as enhancing computer vision or supporting academic research — and is often shared openly in the AI community. “There’s an emerging conversation in the machine learning community about whether some of these tools should be restricted,” Butler said. “We need to rethink how open-source technologies are shared and used.” Butler said the published paper — authored by student Cassidy Gibson, who was advised by Butler and Traynor and received her doctorate degree this month — is just the first step in their deeper investigation into the world of AI-powered nudification tools and an extension of the work they are doing at the Center for Privacy and Security for Marginalized Populations, or PRISM, an NSF-funded center housed at the UF Herbert Wertheim College of Engineering. Butler and Gibson recently met with U.S. Congresswoman Kat Cammack for a roundtable discussion on the growing spread of non-consensual imagery online. In a newsletter to constituents, Cammack, who serves on the House Energy and Commerce Committee, called the issue a major priority. She emphasized the need to understand how these images are created and their impact on the mental health of children, teens and adults, calling it “paramount to putting an end to this dangerous trend.” "As lawmakers take a closer look at these technologies, we want to give them technical insights that can help shape smarter regulation and push for more accountability from those involved," said Butler. “Our goal is to use our skills as cybersecurity researchers to address real-world problems and help people.”

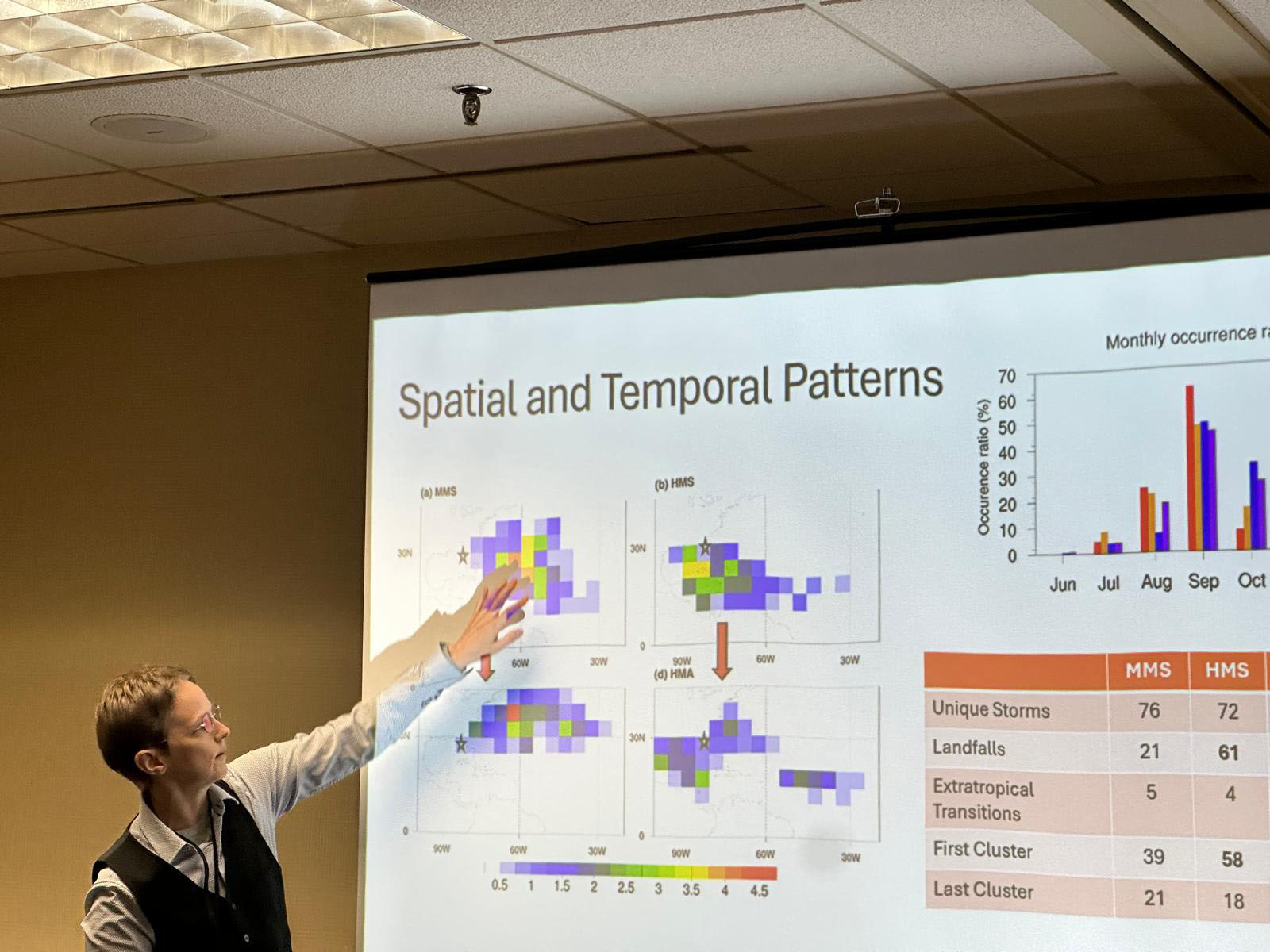

Tracking rain patterns will improve hurricane forecasting, UF researcher finds

Studying the precipitation patterns in hurricanes may be key to predicting future storm patterns and their potential strength, a University of Florida researcher has found. Supported by a four-year, $212,000 grant from the National Science Foundation, Professor of Geography Corene Matyas, Ph.D., has identified the patterns of rain rates within storms and studied the moisture surrounding these storms.

“We are hoping that, if we have a better prediction of moisture availability, that might help us forecast rain events with greater accuracy,” Matyas said. “The more we know about how storms develop, the more we can predict their path and magnitude.”

The ideal stage for the perfect storm

The potential for devastating high winds, storm surge and flooding poses an annual threat to Florida and its residents. With 1,350 miles of coastline and relatively flat geography that juts out to separate the warm waters of the southeast Atlantic and the Gulf, Florida creates the ideal stage for the perfect storm. Last year broke records with 18 named storms, including 11 hurricanes in the Atlantic basin and three major hurricanes making landfall along Florida’s coast.

Early predictions are crucial to hurricane preparedness, allowing for increased response time and resource allocation, and hurricane modeling is essential for understanding these somewhat unpredictable storms. Advances in technology, data collection and the use of artificial intelligence in hurricane modeling have significantly impacted the ability to predict a storm’s path and strength more accurately.

Artificial intelligence helps researchers understand hurricanes

Matyas has completed two studies on this topic. The first study processed 12,000 images of rain rates from tropical storms and hurricanes in the Atlantic, using a machine learning algorithm called a convolutional autoencoder. Similar in use to image recognition software, the encoder broke the rain rate images down and simplified the patterns. Six main types, or clusters, of rainfall patterns for tropical cyclones were identified. At a presentation of the work to forecasters at the National Weather Service office in Jacksonville, the forecasters confirmed that one of the patterns matches what they typically see when late-season storms make landfall over Florida’s Gulf Coast.

The second study used the autoencoder to process 4,600 images that represent the amount of moisture in the atmosphere extending 1,000 kilometers away from each hurricane.

“We looked for commonalities in the patterns and found four dominant patterns of moisture that accompany Atlantic basin hurricanes,” Matyas said. “We found the biggest storms with the most moisture make the most landfalls, typically in the Caribbean and even in southern Florida. They also have a large moisture pool, giving them a bigger chance of heavy rainfall.”

According to Matyas, three of the moisture patterns found in the second study were strikingly like those found in the earlier study that used fewer observations in a statistical analysis. With this use of AI, researchers can now recognize and understand these moisture patterns better, which can improve predictions about a storm’s intensity, its size and the amount of rainfall that will result from it.

Early, accurate storm predictions allow Floridians time to prepare

Rapid intensification – when, in a 24-hour period, a storm experiences a sudden drop in pressure and a dramatic increase in wind speed – creates much more of a challenge for forecasters.

“We tend to boil down a hurricane to a set of coordinates which track the middle of a storm,” Matyas said. “And the fastest winds do focus there, but the moisture gets pulled from thousands of kilometers away and the system forces the moisture up. That moisture must go somewhere. So, the outer edges of the storm need to be understood more as well.”

Matyas hopes these studies will help scientists classify rain patterns more accurately and consistently.
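
The workflow described above, compressing each image into a simplified pattern and then grouping similar patterns, can be sketched in miniature. The article does not say which grouping algorithm the studies used, so k-means is swapped in here purely as a common stand-in. The two-number "codes" below are hypothetical stand-ins for autoencoder outputs, and two clusters are used instead of six to keep the example short.

```python
def squared_distance(p, q):
    """Squared Euclidean distance between two equal-length tuples."""
    return sum((a - b) ** 2 for a, b in zip(p, q))


def kmeans(points, k, iterations=10):
    """Plain k-means; an assumed stand-in, not the studies' actual method."""
    n = len(points)
    # Deterministic start: take k points spread evenly through the list.
    centers = [points[i * n // k] for i in range(k)]
    labels = [0] * n
    for _ in range(iterations):
        # Assignment step: each point joins its nearest center.
        labels = [
            min(range(k), key=lambda c: squared_distance(p, centers[c]))
            for p in points
        ]
        # Update step: each center moves to the mean of its members.
        for c in range(k):
            members = [p for p, lab in zip(points, labels) if lab == c]
            if members:
                centers[c] = tuple(
                    sum(dim) / len(members) for dim in zip(*members)
                )
    return labels, centers


# Hypothetical 2-D codes, as if an encoder had compressed six images.
codes = [
    (0.0, 0.0), (0.0, 1.0), (1.0, 0.0),
    (10.0, 10.0), (10.0, 11.0), (11.0, 10.0),
]
labels, centers = kmeans(codes, k=2)
print(labels)
print(centers)
```

Images whose codes land in the same cluster would be read as sharing a pattern type. In a real study the codes would have many more dimensions, and the number of clusters would be chosen from the data rather than fixed in advance.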
Continued funding for research at public universities from federal agencies, such as the National Science Foundation and the National Oceanic and Atmospheric Administration, is essential for helping researchers develop tools to detect and predict severe weather events. Matyas is one of two UF faculty members among 18 national researchers named to the 2025 class of fellows by the American Association of Geographers. Matyas and UF Geography Department Chair Jane Southworth, Ph.D. were honored by the organization for their contributions in biogeography, geospatial analytics, soil science, community geography, climatology and other areas related to geography. “I look forward to this opportunity to contribute to the mission of the AAG in a more formal capacity, continuing to research how weather shapes our spaces and share knowledge of earth systems beyond the classroom and the written word to promote an inclusive society,” Matyas said.

AI-driven software is 96% accurate at diagnosing Parkinson's

Existing research indicates that the accuracy of a Parkinson’s disease diagnosis hovers between 55% and 78% in the first five years of assessment. That’s partly because Parkinson’s sibling movement disorders share similarities, sometimes making a definitive diagnosis initially difficult.

Although Parkinson’s disease is a well-recognized illness, the term can refer to a variety of conditions, ranging from idiopathic Parkinson’s, the most common type, to other movement disorders like multiple system atrophy Parkinsonian variant and progressive supranuclear palsy. Each shares motor and nonmotor features, like changes in gait, but possesses a distinct pathology and prognosis. Roughly one in four patients, or even one in two patients, is misdiagnosed.

Now, researchers at the University of Florida and the UF Health Norman Fixel Institute for Neurological Diseases have developed a new kind of software that will help clinicians differentially diagnose Parkinson’s disease and related conditions, reducing diagnostic time and increasing precision beyond 96%. The study was published recently in JAMA Neurology and was funded by the National Institutes of Health.

“In many cases, MRI manufacturers don’t communicate with each other due to marketplace competition,” said David Vaillancourt, Ph.D., chair and a professor in the UF Department of Applied Physiology and Kinesiology. “They all have their own software and their own sequences. Here, we’ve developed novel software that works across all of them.”

Although there is no substitute for the human element of diagnosis, even the most experienced physicians who specialize in movement disorder diagnoses can benefit from a tool to increase diagnostic efficacy between different disorders, Vaillancourt said.

The software, Automated Imaging Differentiation for Parkinsonism, or AIDP, is automated MRI processing and machine learning software that features a noninvasive biomarker technique.
Using diffusion-weighted MRI, which measures how water molecules diffuse in the brain, the team can identify where neurodegeneration is occurring. Then, the machine learning algorithm, rigorously tested against in-person clinic diagnoses, analyzes the brain scan and provides the clinician with the results, indicating one of the different types of Parkinson’s. The study was conducted across 21 sites, 19 of them in the United States and two in Canada. “This is an instance where the innovation between technology and artificial intelligence has been proven to enhance diagnostic precision, allowing us the opportunity to further improve treatment for patients with Parkinson’s disease,” said Michael Okun, M.D., medical adviser to the Parkinson’s Foundation and director of the Norman Fixel Institute for Neurological Diseases at UF Health. “We look forward to seeing how this innovation can further impact the Parkinson’s community and advance our shared goal of better outcomes for all.” The team’s next step is obtaining approval from the U.S. Food and Drug Administration. “This effort truly highlights the importance of interdisciplinary collaboration,” said Angelos Barmpoutis, Ph.D., a professor at the Digital Worlds Institute at UF. “Thanks to the combined medical expertise, scientific expertise and technological expertise, we were able to accomplish a goal that will change the lives of countless individuals.” Vaillancourt and Barmpoutis are partial owners of a company called Neuropacs whose goal is to bring this software forward, improving both patient care and clinical trials where it might be used.

UF team develops AI tool to make genetic research more comprehensive

University of Florida researchers are addressing a critical gap in medical genetic research: ensuring it better represents and benefits people of all backgrounds. Their work, led by Kiley Graim, Ph.D., an assistant professor in the Department of Computer & Information Science & Engineering, focuses on improving human health by addressing "ancestral bias" in genetic data, a problem that arises when most research is based on data from a single ancestral group. This bias limits advancements in precision medicine, Graim said, and leaves large portions of the global population underserved when it comes to disease treatment and prevention. To solve this, the team developed PhyloFrame, a machine-learning tool that uses artificial intelligence to account for ancestral diversity in genetic data. With funding support from the National Institutes of Health, the goal is to improve how diseases are predicted, diagnosed, and treated for everyone, regardless of their ancestry. A paper describing the PhyloFrame method and how it showed marked improvements in precision medicine outcomes was published Monday in Nature Communications. Graim, a member of the UF Health Cancer Center, said her inspiration to focus on ancestral bias in genomic data evolved from a conversation with a doctor who was frustrated by a study's limited relevance to his diverse patient population. This encounter led her to explore how AI could help bridge the gap in genetic research. “I thought to myself, ‘I can fix that problem,’” said Graim, whose research centers around machine learning and precision medicine and who is trained in population genomics.
“If our training data doesn’t match our real-world data, we have ways to deal with that using machine learning. They’re not perfect, but they can do a lot to address the issue.” By leveraging data from population genomics database gnomAD, PhyloFrame integrates massive databases of healthy human genomes with the smaller datasets specific to diseases used to train precision medicine models. The models it creates are better equipped to handle diverse genetic backgrounds. For example, it can predict the differences between subtypes of diseases like breast cancer and suggest the best treatment for each patient, regardless of patient ancestry. Processing such massive amounts of data is no small feat. The team uses UF’s HiPerGator, one of the most powerful supercomputers in the country, to analyze genomic information from millions of people. For each person, that means processing 3 billion base pairs of DNA. “I didn’t think it would work as well as it did,” said Graim, noting that her doctoral student, Leslie Smith, contributed significantly to the study. “What started as a small project using a simple model to demonstrate the impact of incorporating population genomics data has evolved into securing funds to develop more sophisticated models and to refine how populations are defined.” What sets PhyloFrame apart is its ability to ensure predictions remain accurate across populations by considering genetic differences linked to ancestry. This is crucial because most current models are built using data that does not fully represent the world’s population. Much of the existing data comes from research hospitals and patients who trust the health care system. This means populations in small towns or those who distrust medical systems are often left out, making it harder to develop treatments that work well for everyone. 
She also estimated 97% of the sequenced samples are from people of European ancestry, due, largely, to national and state level funding and priorities, but also due to socioeconomic factors that snowball at different levels – insurance impacts whether people get treated, for example, which impacts how likely they are to be sequenced. “Some other countries, notably China and Japan, have recently been trying to close this gap, and so there is more data from these countries than there had been previously but still nothing like the European data," she said. “Poorer populations are generally excluded entirely.” Thus, diversity in training data is essential, Graim said. "We want these models to work for any patient, not just the ones in our studies," she said. “Having diverse training data makes models better for Europeans, too. Having the population genomics data helps prevent models from overfitting, which means that they'll work better for everyone, including Europeans.” Graim believes tools like PhyloFrame will eventually be used in the clinical setting, replacing traditional models to develop treatment plans tailored to individuals based on their genetic makeup. The team’s next steps include refining PhyloFrame and expanding its applications to more diseases. “My dream is to help advance precision medicine through this kind of machine learning method, so people can get diagnosed early and are treated with what works specifically for them and with the fewest side effects,” she said. “Getting the right treatment to the right person at the right time is what we’re striving for.” Graim’s project received funding from the UF College of Medicine Office of Research’s AI2 Datathon grant award, which is designed to help researchers and clinicians harness AI tools to improve human health.

One AI-based advancement at a time, UF leaders are transforming the sports industry

As emerging technologies like AI reshape sport industries and professional demands evolve, it is essential for students to graduate with the expertise to thrive in their future careers. To ensure that these students are set up for success, the UF College of Health & Human Performance has launched a new sports analytics program. Led by Scott Nestler, Ph.D., CAP, PStat, a professor of practice in the Department of Sport Management and a national analytics and data science expert, the program ties back to the UF & Sport Collaborative – a five-part project intended to elevate UF’s presence on the global stage in sports performance, healthcare and communication. “Tools and insights that previously were only available to professional sports teams are now coming to the college level, and it makes sense for universities to begin using these data, technologies and new analytic methods,” Nestler said. The sports analytics program fosters collaboration between academic units, such as the Warrington College of Business and the University Athletic Association, helping bridge the gap between sport research and innovation and empowering students to address real-world challenges through data and AI. For example, the program offers opportunities to leverage technology and analytics for strategic decision making in player acquisition, team formation and in-game decisions. Beyond performance metrics, the program also explores marketing strategies and revenue analytics, providing a well-rounded understanding of the field. “When you have enough data and a large enough sample of individuals, AI can help make predictions that otherwise would take prohibitively longer for a human to accomplish with traditional methods,” said Garrett Beatty, Ph.D., the assistant dean for innovation and entrepreneurship and an instructional associate professor in the College of Health & Human Performance’s Department of Applied Physiology and Kinesiology. 
“Because those data volumes are getting so large, AI models, machine learning, deep learning and other strategies can be leveraged to make sense and glean insights from sport and human performance data in ways that have never been done before.” The program seeks to offer several educational opportunities, such as individual courses, certificate programs and potentially a full degree program. In the long term, Nestler envisions the program evolving into a center or institute, beginning with establishing a research lab in the spring. Additionally, the program will leverage the university’s supercomputer, HiPerGator, to analyze larger data sets and use newer predictive modeling machine learning algorithms. “As faculty and staff move from working with box score and play-by-play data to using tracking data, which contains coordinates of all players and the ball on the field or court tens of times per second, the size of data files in sports analytics has grown tremendously,” Nestler said. “HiPerGator, with its large storage capacity and multiple central processing units/graphic processing units, is ideal for using in sports analytics work in 2025.” Nestler also aims to increase student involvement by enhancing UF’s Sport Analytics Club and hiring research assistants to work on projects for the University Athletic Association. “We need to take a broader view of what AI is and realize that it incorporates a lot of what we’ve been calling data science and analytics in the form of machine learning models, which came more out of statistics and computer science. Those are types of AI and those that I think will largely continue to be used in the coming years within the sports space,” Nestler said. “Also, we’re continuing to see growth in the number of people interested in working in this space, and I don’t foresee that changing. Fortunately, we are also seeing the number of opportunities available to those with the appropriate skills increase as well.”

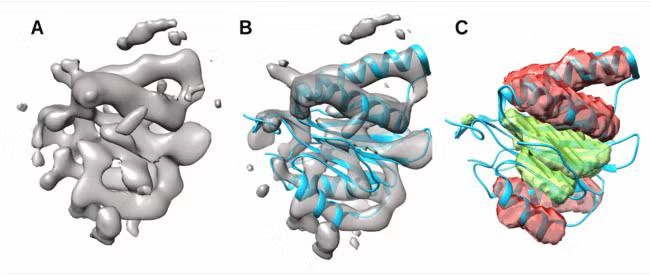

Proteins, often called the building blocks of life, play a central role in drug development. When scientists develop new treatments, they must understand how drugs interact with proteins involved in disease mechanisms and with proteins in the human body that influence drug response. Scientists commonly use cryo-electron microscopy (cryo-EM) 3D imaging data to study proteins. While recent advances have enabled higher-resolution images that are easier to analyze, medium-resolution images—which are more difficult to interpret—are still the most common for larger protein complexes. Salim Sazzed, Ph.D., an assistant professor in the computer science department of Georgia Southern University’s Allen E. Paulson College of Engineering and Computing, has been awarded a two-year National Science Foundation grant of about $175,000 to lead a groundbreaking project to develop novel Artificial Intelligence (AI) techniques for determining protein secondary structures from medium-resolution cryo-electron microscopy (cryo-EM) images. Improved modeling from medium-resolution images will help researchers study more proteins efficiently, giving new insights into diseases and potentially guiding the development of new treatments and future drugs. At its core, this research will combine biology and machine learning to study protein structures. The multidisciplinary approach and potential impacts on public health are what most excite Sazzed. “The impetus behind this research is the positive impact on public health and possibly contributing to the biomedical workforce,” he said. “Seeing biology and computer science combine for that kind of impact is incredibly moving.” As the Principal Investigator (PI) for the project, Sazzed will use his expertise in deep learning computer models to focus on a major challenge in structural biology: identifying the two main secondary structures of proteins—the alpha helix and the beta sheet. 
These structures are critical for a protein’s overall shape and function, but in medium-resolution cryo-EM images they often appear indistinct or lack clear detail, making them particularly difficult to analyze. Sazzed’s research will focus on two main goals. First, he will quantify the variability of alpha helices and beta sheets in medium-resolution images, comparing them to idealized structures. Second, by integrating this structural variability with the image data in a deep learning model, he will aim to generate more precise and accurate representations of protein secondary structures. “When we feed this information into a deep learning model along with the image data, the model should be able to determine protein secondary structures more precisely,” Sazzed elaborated. Sazzed believes students will greatly benefit from this multi-disciplinary approach. In addition to a Ph.D. student, several undergraduate students will be directly engaged in the research. A full-day workshop will also be organized, allowing Georgia Southern students from diverse disciplines to participate. This initiative will build on Georgia Southern’s strong tradition of involving undergraduates in research and will support the University’s recent focus on biomedical and health sciences. “There are many different knowledge areas coming together in this work,” Sazzed said. “It involves computer science, biology, chemistry, and even public health. I look forward to students following the research and exploring these different fields themselves.” Allen E. Paulson College of Engineering & Computing Interim Associate Dean of Research, Masoud Davari, Ph.D., echoes this sentiment and emphasizes its importance to the University’s research profile. 
“Sazzed’s interdisciplinary research, which bridges the gap between biology and computer science, will foster multidisciplinary research in our college, as it is cutting-edge and potentially groundbreaking in drug development to impact people’s lives nationally and globally,” Davari said. “It’s also well aligned with the college’s strategic research plan, as we make the move to R1 status to be aligned with ‘Soaring to R1,’ which is among the transformational initiatives for the University.”

To learn more about Georgia Southern University or to connect with Salim Sazzed, contact Georgia Southern's Director of Communications, Jennifer Wise, at jwise@georgiasouthern.edu to arrange an interview.

The University of Florida’s ‘AI Queen’ is using AI technology to help prevent dementia

To help the 50 million people globally who live with dementia, the National Institute on Aging is finding researchers to develop tech-based breakthroughs that target the disease, researchers like the University of Florida’s “AI Queen.” It’s a fitting nickname for Aprinda Indahlastari Queen, Ph.D., who is applying artificial intelligence technology to study transcranial direct current stimulation, or tDCS, as a possible way to prevent dementia. The technique involves placing electrodes on the scalp to deliver a weak electrical current to the brain.

The assistant professor in the UF College of Public Health and Health Professions’ Department of Clinical and Health Psychology is using UF’s supercomputer, HiPerGator, to perform neuroimaging and machine learning analyses to study how anatomical differences may affect tDCS outcomes.

“Investigating working memory in patients with mild cognitive impairment offers an opportunity to understand how cognitive processes are disrupted in the early stages of Alzheimer’s disease,” said Queen, whose study, funded by a National Institute on Aging research career development grant, integrates neuroimaging with information on brain structure that is unique to older adults and those with mild cognitive impairment.

Refining the treatment with AI

Using neuroimaging, Queen captures real-time changes during tDCS to the parts of the brain associated with working memory, which is the type of memory that allows humans to temporarily keep track of small amounts of information. Think of this as a mental “scratchpad.” Her study includes older adults with mild cognitive impairment as well as individuals who are cognitively healthy.

In tDCS, a safe, weak electrical current passes through electrodes placed on a person’s head. The stimulation is being used in research and clinical settings for a variety of conditions and has shown partial success as a nonpharmaceutical intervention that can improve cognitive and mental health in older adults. But tDCS results can vary across individuals, and the suspected cause is both simple and complex: Everyone’s head is different.

“One potential reason tDCS may not work for some individuals is the variation in head tissue anatomy, including differences in brain structure,” Queen said. “Since electrical stimulation must travel through multiple layers of tissue to reach the brain, and every individual’s anatomy is unique, these differences likely affect outcomes.”

To address this further, Queen is using AI.

“Artificial intelligence will play a major role in the modeling pipeline, including constructing individualized head models, conducting predictive analyses to identify which participants will respond to the stimulation, and disentangling multiple individual factors that may contribute to these outcomes,” Queen said.

An estimated 10 to 20% of adults over age 65 have memory or thinking problems characterized as mild cognitive impairment. Their symptoms are not as severe as Alzheimer’s disease and other dementias, but they may be at increased risk for developing dementia.

“The fact that not all individuals with mild cognitive impairment progress to Alzheimer’s disease emphasizes the need to identify effective interventions that can slow the progression to dementia,” Queen said. “This project presents an opportunity to differentiate between multiple types of mild cognitive impairment and investigate how tDCS affects the brain across these subtypes.”

An AI visionary

Queen, who joined the UF faculty under the university’s AI hiring initiative, is an instructor in the College of Public Health and Health Professions’ undergraduate certificate program in AI and public health and health care, and the co-chair of the college’s AI Workgroup.
She is also the assistant director for computing and informatics at the UF Center for Cognitive Aging and Memory Clinical Translational Research and a member of UF’s McKnight Brain Institute. Queen received her Ph.D. training in engineering with a focus on building and running computational models to investigate medical devices. She experienced a career “a-ha” moment as a postdoc, when she was a co-investigator on a large clinical trial that paired brain stimulation with cognitive training to enhance cognition in older adults. “This experience was transformative for me. I had the chance to interact directly with participants, which was both fulfilling and eye-opening. These interactions allowed me to see the immediate, real-world implications of my work and sparked a passion for pursuing aging research,” Queen said. “I realized that, through this type of research, I could have a more direct impact on addressing age-related challenges, which prompted a shift in my career plans.” The new grant will help Queen further improve her understanding of the neurobiology and progression of Alzheimer’s disease and other dementias. “These experiences will ultimately prepare me to become a well-rounded aging investigator, capable of making meaningful contributions to the field of aging research,” Queen said. She also credits her mentors and collaborators — Ronald Cohen, Ph.D.; Adam Woods, Ph.D.; Steven DeKosky, M.D.; Ruogu Fang, Ph.D.; Joseph Gullett, Ph.D.; and Glenn Smith, Ph.D. — with supporting her as an early career scientist. “It really takes a village to get here!” Queen said.

Detecting Fraud Using Emerging Technology: Innovating Beyond Traditional Controls

Fraud and financial crime are evolving at a pace that challenges even the most established detection systems. From cyber-enabled schemes and complex financial misappropriations to subtle internal manipulations, traditional audit and compliance methods are often too slow or too narrow to keep up. In a world where billions of data points can hide a single irregularity, the investigative advantage now lies in speed, intelligence, and technological adaptability.

J.S. Held’s Ken Feinstein recently authored an article exploring how artificial intelligence, machine learning, and advanced data analytics tools are transforming how organizations uncover and prevent fraud. In his piece, “Detecting Fraud Using Emerging Technology: Don’t Be Afraid to Innovate,” Feinstein illustrates how the integration of digital investigation techniques, from automation to predictive analytics, is reshaping the fraud-detection landscape, helping companies not just react to wrongdoing but anticipate and deter it.

Ken Feinstein specializes in investigative data analytics and has over 25 years of experience. He provides data analytics solutions spanning multiple sectors, including retail and consumer products, life sciences, technology, financial services, and industrial products. His clients include law firms and Fortune 500 legal and compliance teams for whom he delivers large-scale, complex investigations, regulatory response matters, proactive anti-fraud efforts, and compliance programs.

Why This Matters

As fraudsters exploit digital tools and globalized networks, detection efforts must evolve in kind. Regulators expect faster, data-driven investigations, and boards demand real-time risk visibility. Those who innovate with AI-enabled detection and forensic analytics are better positioned to protect assets, reputation, and shareholder trust.

Looking to know more? Connect with Ken Feinstein today by clicking on his icon below.

Ask an Expert: Augusta University's Gokila Dorai, PhD, talks Artificial Intelligence

Artificial Intelligence is dominating the news cycle. There's a lot to know, a lot to prepare for, and also a lot of misinformation and assumptions making their way into mainstream coverage. Recently, Augusta University's Gokila Dorai, PhD, took some time to answer some of the more important questions she's seeing asked about Artificial Intelligence.

Gokila Dorai, PhD, is an assistant professor in the School of Computer and Cyber Sciences at Augusta University. Dorai’s area of expertise is mobile/IoT forensics research. She is passionate about inventing digital tools to help victims and survivors of various digital crimes.

Q. What excites you most about your current research in digital forensics and AI?

"I am most excited about using artificial intelligence to produce frameworks for practitioners to make sense of complex digital evidence more quickly and fairly. My research combines machine learning with natural language processing, incorporating a socio-technical framework, so that we don’t just get accurate results, but also understand how and why the system reached those results. This is especially important when dealing with sensitive investigations, where transparency builds trust."

Q. How does your work help address today’s challenges around cybersecurity and data privacy?

"Everyday life is increasingly digital; our phones, apps, and online accounts contain deeply personal information. My research looks at how we can responsibly analyze this data during investigations without compromising privacy. For example, I work on AI models that can focus only on what is legally relevant, while filtering out unrelated personal information. This balance between security and privacy is one of the biggest challenges today, and my work aims to provide practical solutions."

Q. What role do you see artificial intelligence playing in shaping the future of digital investigations?

"AI will be a critical partner in digital investigations. The volume of data investigators face is overwhelming: thousands of documents, chat messages, and app logs. AI can help organize and prioritize this information, spotting patterns that a human might miss. At the same time, I believe AI must be designed to be explainable and resilient against manipulation, so investigators and courts can trust its findings. The future isn’t about replacing human judgment, but about giving investigators smarter tools."

Q. What is one misconception people often have about cybersecurity or digital forensics?

"A common misconception is that digital forensics is like what you see on TV: instant results with a few keystrokes. In reality, it’s a painstaking process that requires both technical skill and ethical responsibility. Another misconception is that cybersecurity is only about protecting large organizations. In truth, individuals face just as many risks, from identity theft to app data leaks, and my research highlights how better tools can protect everyone."

Are you a reporter covering Artificial Intelligence and looking to know more? If so, then let us help with your stories. Gokila Dorai, PhD, is available for interviews. Simply click on her icon now to arrange a time today.