
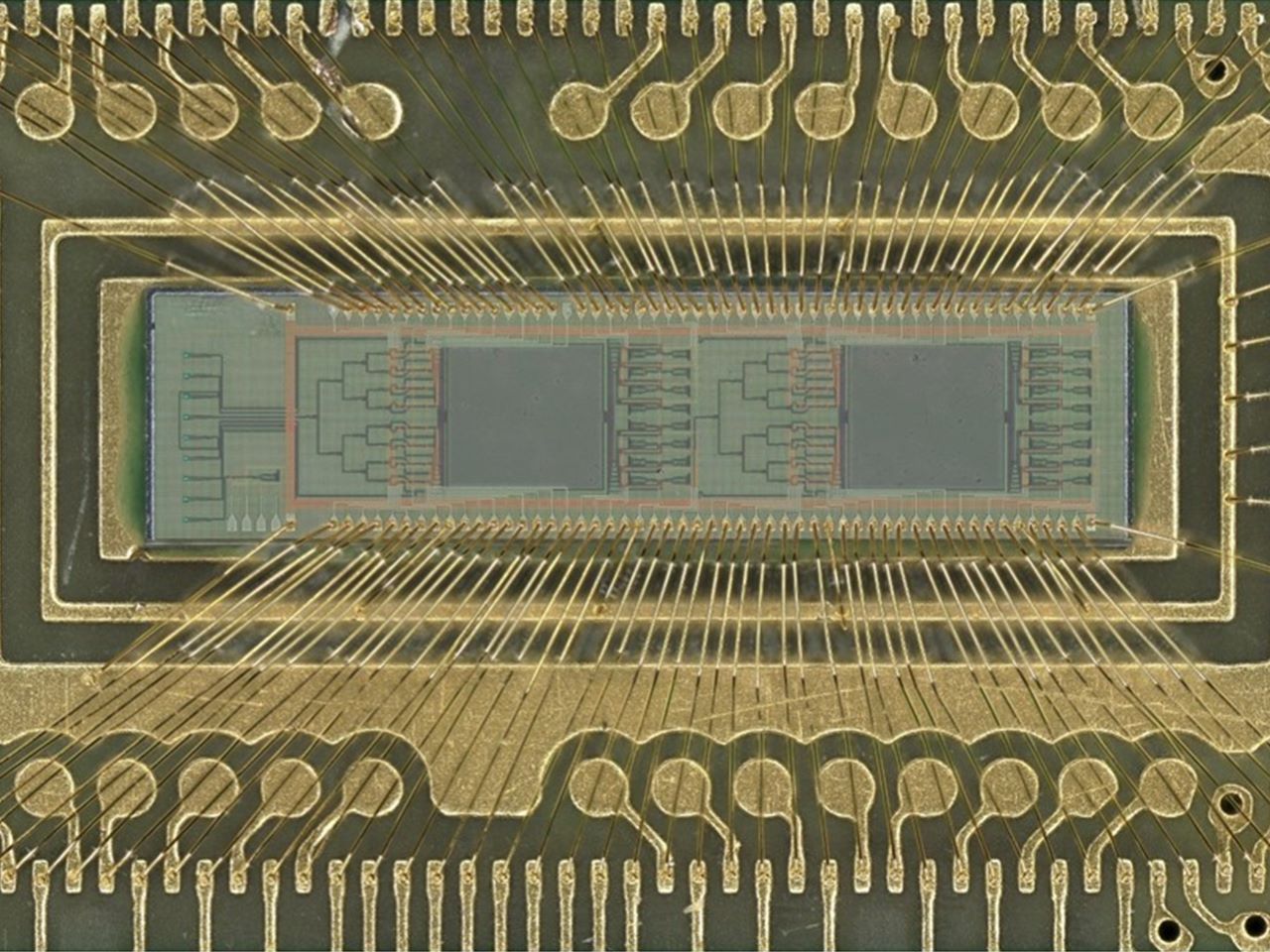

New light-based chip boosts power efficiency of AI tasks 100 fold

A team of engineers has developed a new kind of computer chip that uses light instead of electricity to perform one of the most power-intensive parts of artificial intelligence: image recognition and similar pattern-finding tasks. Using light dramatically cuts the power needed to perform these tasks, with efficiency 10 or even 100 times that of current chips performing the same calculations. This approach could help rein in the enormous demand for electricity that is straining power grids and enable higher-performance AI models and systems.

The underlying machine learning operation, called “convolution,” is at the heart of how AI systems process pictures, videos and even language. Convolution operations currently require large amounts of computing resources and time. These new chips, though, use lasers and microscopic lenses fabricated onto circuit boards to perform convolutions with far less power and at faster speeds. In tests, the new chip successfully classified handwritten digits with about 98% accuracy, on par with traditional chips.

“Performing a key machine learning computation at near zero energy is a leap forward for future AI systems,” said study leader Volker J. Sorger, Ph.D., the Rhines Endowed Professor in Semiconductor Photonics at the University of Florida. “This is critical to keep scaling up AI capabilities in years to come.”

“This is the first time anyone has put this type of optical computation on a chip and applied it to an AI neural network,” said Hangbo Yang, Ph.D., a research associate professor in Sorger’s group at UF and co-author of the study.

Sorger’s team collaborated with researchers at UF’s Florida Semiconductor Institute, the University of California, Los Angeles and George Washington University on the study. The team published their findings, which were supported by the Office of Naval Research, Sept. 8 in the journal Advanced Photonics.

The prototype chip uses two sets of miniature Fresnel lenses made with standard manufacturing processes.
These two-dimensional versions of the same lenses found in lighthouses are just a fraction of the width of a human hair. Machine learning data, such as from an image or other pattern-recognition tasks, are converted into laser light on-chip and passed through the lenses. The results are then converted back into a digital signal to complete the AI task. This lens-based convolution system is not only more computationally efficient, but it also reduces the computing time. Using light instead of electricity has other benefits, too. Sorger’s group designed a chip that could use different colored lasers to process multiple data streams in parallel. “We can have multiple wavelengths, or colors, of light passing through the lens at the same time,” Yang said. “That’s a key advantage of photonics.” Chip manufacturers, such as industry leader NVIDIA, already incorporate optical elements into other parts of their AI systems, which could make the addition of convolution lenses more seamless. “In the near future, chip-based optics will become a key part of every AI chip we use daily,” said Sorger, who is also deputy director for strategic initiatives at the Florida Semiconductor Institute. “And optical AI computing is next.”
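The release describes convolution only at a high level. As an illustration, the minimal Python sketch below shows what a convolution computes on a tiny grid of pixel values, and checks the convolution theorem (convolution in space equals pointwise multiplication of Fourier transforms) on a short 1D signal. Lens-based optical processors are commonly described as exploiting the fact that a lens can perform a Fourier transform; that connection, and every function name here, is an illustrative assumption, not a description of the UF chip's actual design.

```python
import cmath


def conv2d_valid(image, kernel):
    """Direct 2D convolution (kernel flipped), keeping only the 'valid' region."""
    kh, kw = len(kernel), len(kernel[0])
    ih, iw = len(image), len(image[0])
    out = []
    for r in range(ih - kh + 1):
        row = []
        for c in range(iw - kw + 1):
            acc = 0.0
            for i in range(kh):
                for j in range(kw):
                    # Flipping the kernel makes this a true convolution
                    # (CNN libraries usually skip the flip, i.e. cross-correlation).
                    acc += image[r + i][c + j] * kernel[kh - 1 - i][kw - 1 - j]
            row.append(acc)
        out.append(row)
    return out


def dft(x, inverse=False):
    """Naive O(n^2) discrete Fourier transform (illustrative, not fast)."""
    n = len(x)
    sign = 1 if inverse else -1
    out = [
        sum(x[k] * cmath.exp(sign * 2j * cmath.pi * k * m / n) for k in range(n))
        for m in range(n)
    ]
    return [v / n for v in out] if inverse else out


def circular_conv_direct(a, b):
    """Circular convolution computed term by term."""
    n = len(a)
    return [sum(a[k] * b[(m - k) % n] for k in range(n)) for m in range(n)]


def circular_conv_fourier(a, b):
    """Same result via the convolution theorem: transform, multiply, invert."""
    spectrum = [x * y for x, y in zip(dft(a), dft(b))]
    return [v.real for v in dft(spectrum, inverse=True)]


if __name__ == "__main__":
    image = [[1, 2, 3], [4, 5, 6], [7, 8, 9]]
    kernel = [[1, 0], [0, -1]]
    print(conv2d_valid(image, kernel))  # [[4.0, 4.0], [4.0, 4.0]]
    a, b = [1, 2, 3, 4], [0, 1, 0, 0]
    print(circular_conv_direct(a, b))  # [4, 1, 2, 3]
    print([round(v, 6) for v in circular_conv_fourier(a, b)])
```

The sketch uses a naive DFT for readability; numerical software would use an FFT library instead, while an optical system would carry out the transform physically as light passes through the lenses.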

Delaware emerges as a test bed for the future of AI in health care

Delaware is positioning itself as a “living lab” where academia, health systems and government collaborate to shape the future of artificial-intelligence-enabled health care. The latest issue of the Delaware Journal of Public Health, guest edited by University of Delaware computer scientists Weisong Shi and Yixiang Deng, brings together 16 articles from researchers, clinicians, policymakers and industry leaders examining how AI and big data are reshaping health care.

The issue, debuting this month, balances Delaware-specific topics with broader perspectives, highlighting three levels of impact: what Delaware can expect in the coming years, what other states can learn from Delaware’s approach and how UD research is advancing AI for health through collaborations.

“At UD, we don’t work in isolation. We’re working closely with health care systems so that innovation happens together from the beginning,” says Shi, Alumni Distinguished Professor and Chair of UD’s Department of Computer and Information Sciences.

Highlights from the issue include:

• The nation’s first nursing fellowship in robotics: ChristianaCare, Delaware’s largest health system, created an eight-month fellowship to train bedside nurses to conduct applied robotics research. Nurses who completed the program reported higher job satisfaction, improved well-being and greater professional confidence, suggesting programs like this may help retain the bedside workforce and ease nationwide staffing shortages.
• Wheelchairs that navigate hospitals on their own: UD researchers developed a prototype autonomous wheelchair that combines onboard sensors and computing with software that interprets spoken directions from users, a step beyond systems that only work in controlled environments. To operate effectively in health care settings, the researchers say, wheelchairs must be able to navigate crowded hallways, interact with doors and elevators and recover safely when sensors or navigation systems fail.
• Smarter insulin dosing for type 1 diabetes: Researchers are developing computer models to predict blood sugar (glucose) trends and guide insulin delivery, but must address issues such as noisy data, reliable real-time prediction and the computational limits of wearable devices. A review by UD researchers and colleagues emphasizes the importance of interdisciplinary collaboration, standardized datasets, advances in computational infrastructure and clinical validation to turn these models into practical tools that improve patient care.

To interview Shi about AI in health care and the new DJPH issue, visit his profile or email MediaRelations@udel.edu.

ABOUT WEISONG SHI

Weisong Shi is an Alumni Distinguished Professor and Chair of the Department of Computer and Information Sciences at the University of Delaware. He leads the Connected and Autonomous Research Laboratory. He is an internationally renowned expert in edge computing, autonomous driving and connected health. His pioneering paper, “Edge Computing: Vision and Challenges,” has been cited over 10,000 times.

AI in the classroom: What parents need to know

As students return to classrooms, Maya Israel, professor of educational technology and computer science education at the University of Florida, shares insights on best practices for AI use by K-12 students. She also serves as the director of the CSEveryone Center for Computer Science Education at UF, a program created to boost teachers’ capabilities around computer science and AI in education. Israel also leads the Florida K-12 Education Task Force, a group committed to empowering educators, students, families and administrators by harnessing the transformative potential of AI in K-12 classrooms, prioritizing safety, privacy, access and fairness.

How are K–12 students using AI in classrooms?

Students use AI in classrooms in a wide range of ways, depending on several factors, including district policies, student age and the teacher’s instructional goals. Some districts restrict AI to teacher use only, such as creating custom reading passages for younger students. Others allow older students to use tools to check grammar, create visuals or run science simulations. Even then, skilled teachers frame AI as one tool, not a replacement for student thinking and effort.

What are examples of age-appropriate tools that enhance learning?

AI tools can either enhance or erode learner agency and critical thinking. It is up to educators to consider how these tools can be used appropriately. It is critical to use AI tools in a manner that supports learning, creativity and problem solving rather than bypassing critical thinking. For example, Canva lets students create infographics, posters and videos to show understanding. Google’s Teachable Machine helps students learn AI concepts by training their own image-recognition models. These types of AI-augmented tools work best when they are embedded into activities such as project-based learning, where AI supports learning and critical thinking.

How do teachers ensure AI supports core skills?

While AI can be incredibly helpful in supporting learning, it should not be a shortcut that allows students to bypass learning. Teachers should design learning opportunities that integrate AI in a manner that encourages critical thinking. For example, if students are using AI to support their mathematical understanding, teachers should ask them to explain their reasoning, engage in discussions and attempt to solve problems in different ways. Teachers can ask students questions like, “Does that answer make sense based on what you know?” or “Why do you think [said AI tool] made that suggestion?” This type of reflection reinforces the message that learning does not come from getting fast answers. Learning happens through exploration, productive struggle and collaboration.

Many parents worry that using AI might make students too dependent on technology. How do educators address that concern?

This is a very valid concern. Over-reliance on AI can erode independence and critical thinking; that’s why teachers should be intentional in how they use AI for teaching and learning. Educators can address this concern by sharing with parents their policies and approaches for using AI with students. This can include providing clear expectations of when AI is used; designing assignments that require critical thinking, personal reflection and reasoning; and teaching students the metacognitive skills to self-assess how and when to use AI, so that it supports learning rather than serving as a crutch.

How do schools ensure that students still develop original thinking and creativity when using AI for assignments or projects?

In the age of AI, educators need to be even more intentional in designing learning experiences where students engage in creative and critical thinking. One practice that has been shown to support this is project-based learning, where students must create, iterate and evaluate ideas based on feedback from their peers and teachers. AI can help students gather ideas or organize research, but the students must ask the questions, synthesize information and produce original ideas. Assessments and rubrics should emphasize skills such as reasoning, process and creativity rather than focusing only on the final product. That way, although AI can play a role in instruction, the goal is to design instructional activities that move beyond what AI can do.

How do educators help students understand when it’s appropriate to use AI in their schoolwork?

In the age of AI, educators should help students develop the skills to be original thinkers who can use AI thoughtfully and responsibly. Educators can help students understand when to use AI in their schoolwork by embedding AI literacy directly into their instruction. AI literacy includes discussing the capabilities and limitations of AI, ethical considerations and the importance of students’ agency and original thought. Additionally, clear guidelines and policies help students navigate some of the gray areas of AI usage.

What guidance should parents give at home?

There are several key messages parents should give their children about the use of AI. The most important is that even though AI is powerful, it does not replace their judgement, creativity or empathy. Even though AI can provide fast answers, it is important for students to learn the skills themselves. Another key message is to know the rules about AI in the classroom. Parents should also speak with their children about the mental health implications of over-reliance on AI. When students turn to AI-augmented tools for every answer or idea, they can gradually lose confidence in their own problem-solving abilities. Instead, students should learn how to use AI in ways that strengthen their skills and build independence.

AI gives rise to the cut and paste employee

Although AI tools can improve productivity, recent studies show that they too often intensify workloads instead of reducing them, in many cases even leading to cognitive overload and burnout. The University of Delaware's Saleem Mistry says this is creating employees who work harder, not smarter. Mistry, an associate professor of management in UD's Lerner College of Business & Economics, says his research confirms findings reported in a Feb. 9, 2026 article in the Harvard Business Review.

Driven by the misconception that AI is an accurate search engine rather than a predictive text tool, these "cut and paste" employees are using the applications to pump out deliverables in seconds just to keep up with increasing workloads. Mistry notes that this prioritization of speed over accuracy is happening at every level of the organization:

• Junior staff: Blast out polished-looking but unverified drafts.
• Managers: Hand data summaries to AI instead of learning the material deeply and thinking critically, letting their analytical skills atrophy.
• Power users: Build hidden, unapproved systems that bypass company oversight.

A management problem, not a tech problem

"When discussing this issue, I often hear leaders blame the technology. However, I believe that blaming the tech is missing the point; I see it as a failure of leadership," Mistry said. "When already overburdened employees who are constantly having to do more with less are handed vague mandates to just use AI without any training, they use it to look busy and produce volume-based work. Because many companies still reward the volume of work produced rather than the actual impact, employees naturally use these tools to generate slick but empty deliverables."
The real costs to organizations and incoming employees

Mistry outlines three risks organizations face if they don’t intervene:

1. The workslop epidemic
"These programs allow people to generate massive amounts of workslop, which is low-effort fluff that looks good but lacks substance. It takes seconds to create, but hours for someone else to decipher, fact-check, and fix," Mistry notes. "This drains money (up to $9 million annually for large companies) and destroys morale. As an educator, researcher, and a person brought into organizations to help fix problems, I, for one, do not want to be on the receiving end of a thoughtless, automated data dump, especially on tasks that require real skill and deep thinking."

2. Legal disaster
He also states, "When the cut and paste mentality makes its way into professional submissions, the risks to the organization are real and oftentimes catastrophic. Courts have made it perfectly clear: ignorance is no excuse. If your name is on the document, you own the liability. Recently, attorneys have faced severe sanctions, hefty fines, and case dismissals for blindly submitting fake legal citations made up by computers."

3. A warning for incoming talent
For new graduates entering this environment, Mistry offers a warning: Do not rely on AI to do your deep thinking. "If you simply use AI to blast out polished but unverified drafts, you become a replaceable 'cut and paste' employee," he says. “To truly stand out, new grads must prove they have the discernment to review, tweak, and challenge what the computer writes. The hiring edge is no longer just saying, 'I can do this task,' but 'I know how to leverage and correct AI to help me perform it.'"

Four ideas to fix it

To survive and indeed thrive with these new tools and avoid the unintended consequences of untrained staff, organizations should:

1. Reinforce the importance of fact-checking and editing: Adopt frameworks that teach employees how to show their work and log how they verified computer-generated facts.
2. Change the incentives: Stop rewarding busy work, useless reports, and massive slide decks. Evaluate employees on accuracy and results.
3. Eradicate superficial work: Don’t use automation to speed up ineffective legacy processes. Instead, use it to identify and eliminate them entirely.
4. Make time for editing: Give yourself and your employees the breathing room to actually review, tweak, and challenge what the computer writes instead of accepting the first draft.

Mistry is available to discuss:

• Why AI is causing an epidemic of corporate "workslop" (and how to spot it).
• The leadership failure behind the "cut and paste" employee.
• How to rewrite corporate incentives to measure impact instead of volume in the AI era.
• Strategies for implementing safe, effective AI policies at work.
• How new college graduates can avoid the "workslop" trap in their first jobs.

To reach Mistry directly and arrange an interview, visit his profile. Interested reporters can also send an email to MediaRelations@udel.edu.

The AI In Action Symposium, hosted by the LSU E. J. Ourso College of Business, brings together expert voices at the heart of the AI revolution to explore how they have successfully navigated this evolving landscape. The 2026 symposium focuses on the practical implications of AI in business, including hiring AI-ready talent, ensuring responsible and ethical use, and exploring the challenges of implementing AI across both large enterprises and small startups.

Speakers

Attendees will hear from Louisiana leaders and national AI experts, including:

• Secretary Bruce Greenstein of the Louisiana Department of Health
• April Wiley, Senior Vice President at Community Coffee
• Robert Veit and Julian Tandler from Scale Team Six, a San Francisco-based business accelerator
• Dr. Tonya Jagneaux, who leads medical analytics at the Franciscan Missionaries of Our Lady Health System (FMOLHS)
• Hunter Thevis, president and co-founder of Lafayette-based S1 Technology

…and many more!

Details

• March 20, 2026, 8:00 a.m. – 1:00 p.m.
• Registration deadline is March 15.
• Held on the LSU A&M Campus, in the LSU Student Union
• Register at lsu.edu/business/ai-symposium
• Discount available for LSU System employees

Is writing with AI at work undermining your credibility?

With over 75% of professionals using AI in their daily work, writing and editing messages with tools like ChatGPT, Gemini, Copilot or Claude has become a commonplace practice. While generative AI tools are seen to make writing easier, are they effective for communicating between managers and employees?

A new study of 1,100 professionals reveals a critical paradox in workplace communications: AI tools can make managers’ emails more professional, but regular use can undermine trust between them and their employees.

“We see a tension between perceptions of message quality and perceptions of the sender,” said Anthony Coman, Ph.D., a researcher at the University of Florida's Warrington College of Business and study co-author. “Despite positive impressions of professionalism in AI-assisted writing, managers who use AI for routine communication tasks put their trustworthiness at risk when using medium to high levels of AI assistance."

In the study published in the International Journal of Business Communication, Coman and his co-author, Peter Cardon, Ph.D., of the University of Southern California, surveyed professionals about how they viewed emails that they were told were written with low, medium and high AI assistance. Survey participants were asked to evaluate different AI-written versions of a congratulatory message on both their perception of the message content and their perception of the sender.

While AI-assisted writing was generally seen as efficient, effective, and professional, Coman and Cardon found a “perception gap” in messages that were written by managers versus those written by employees.

“When people evaluate their own use of AI, they tend to rate their use similarly across low, medium and high levels of assistance,” Coman explained. “However, when rating others’ use, magnitude becomes important. Overall, professionals view their own AI use leniently, yet they are more skeptical of the same levels of assistance when used by supervisors.”

While low levels of AI help, like grammar or editing, were generally acceptable, higher levels of assistance triggered negative perceptions. The perception gap is especially significant when employees perceive higher levels of AI writing, bringing into question the authorship, integrity, caring and competency of their manager.

The impact on trust was substantial: Only 40% to 52% of employees viewed supervisors as sincere when they used high levels of AI, compared to 83% for low-assistance messages. Similarly, while 95% found low-AI supervisor messages professional, this dropped to 69% to 73% when supervisors relied heavily on AI tools.

The findings reveal employees can often detect AI-generated content and interpret its use as laziness or lack of caring. When supervisors rely heavily on AI for messages like team congratulations or motivational communications, employees perceive them as less sincere and question their leadership abilities.

“In some cases, AI-assisted writing can undermine perceptions of traits linked to a supervisor’s trustworthiness,” Coman noted, specifically citing impacts on perceived ability and integrity, both key components of cognitive-based trust.

The study suggests managers should carefully consider message type, level of AI assistance and relational context before using AI in their writing. While AI may be appropriate and professionally received for informational or routine communications, like meeting reminders or factual announcements, relationship-oriented messages requiring empathy, praise, congratulations, motivation or personal feedback are better handled with minimal technological intervention.

What the World Needs Now: How Art, Culture, and Nature Can Help Heal Communities in Difficult Times

In an era marked by political division, cultural fatigue, and rapid technological change, communities are increasingly searching for places that offer connection, restoration, and shared experience. Charles Burke, President & CEO of Frederik Meijer Gardens & Sculpture Park, brings a leadership perspective shaped by decades across the arts, civic engagement, and nonprofit strategy — focused on how cultural institutions can serve as stabilizing forces in uncertain times. Through the lens of Meijer Gardens, Burke examines how art, culture, and nature can work together to restore, unite, and inspire communities, offering spaces where people can slow down, reconnect, and engage with one another beyond polarization or distraction.

Charles Burke is President & CEO of Frederik Meijer Gardens & Sculpture Park. Under his direction, the organization has been recognized as Best Sculpture Park in the United States by USA Today’s 10Best Readers’ Choice Awards in 2023, 2024, and 2025, and consecutively named one of the Best Places to Work in West Michigan, solidifying its reputation as a cultural landmark of international acclaim.
An Expert Perspective on Healing Through Experience

From Burke’s leadership vantage point, institutions like Meijer Gardens demonstrate how intentional design and programming can support community well-being. Examples include:

• Environments that encourage mental restoration, such as forested landscapes and immersive outdoor spaces
• Experiences that invite reflection and emotional engagement, rather than passive consumption
• Programming that brings together diverse audiences around shared encounters with beauty and creativity

These experiences do not attempt to solve complex societal challenges directly. Instead, they create conditions for connection, empathy, and resilience, key foundations on which healthy communities depend.

Civic Spaces as “Experiential Engines”

A central concept in Burke’s work is the idea of cultural institutions as experiential engines: places designed not just to display art or plants, but to generate meaning, joy, and shared memory. When thoughtfully integrated, sculpture, horticulture, architecture, and programming can transform public spaces into environments that foster belonging and inclusion. This approach positions cultural institutions as active participants in civic life, contributing to community health and cohesion rather than operating at the margins of public discourse.

Technology, Humanity, and the Future of Cultural Spaces

As technology continues to shape how people interact with the world, Burke’s perspective emphasizes balance. Emerging tools, including artificial intelligence, can enhance accessibility, storytelling, and personalization when used intentionally. The challenge, and opportunity, lies in ensuring that technology deepens human connection rather than distracting from it. And while AI is ideal for aggregating information and should be integrated into , it isn't inherently creative. Burke believes that cultural institutions can uniquely unlock the power of human potential in creativity. Cultural institutions that integrate innovation thoughtfully can remain relevant while staying grounded in human experience.

Meijer Gardens as a Living Model

Over three decades, Meijer Gardens has evolved into a nationally recognized destination where beauty, experience, and mission align. Its integration of art, nature, education, and seasonal programming offers a real-world example of how cultural institutions can grow while remaining inclusive, restorative, and community-centered.

Why Journalists and Conference Organizers Should Connect

Charles Burke brings an informed perspective on:

• The role of art and nature in public healing and mental wellness
• Cultural responsibility during periods of division and uncertainty
• Designing inclusive, joyful, and interactive civic spaces
• Balancing technology and humanity in cultural institutions
• How Meijer Gardens functions as a model for innovative integration and creativity

Audience fit: museum and cultural leadership forums, civic innovation conferences, mental health and wellness discussions, placemaking initiatives, higher education leadership forums, philanthropic leadership events, sustainability and design summits.

Rethinking AI in the classroom: A literacy-first approach to generative technology

As schools nationwide navigate the rapid rise of generative artificial intelligence, educators are searching for guidance that goes beyond fear, hype or quick fixes. Rachel Karchmer-Klein, associate professor of literacy education at the University of Delaware, is helping lead that conversation. Her latest book, Putting AI to Work in Disciplinary Literacy: Shifting Mindsets and Guiding Classroom Instruction, offers research-based strategies for integrating AI into secondary classrooms without sacrificing critical thinking or deep learning. Here is how she is approaching the complex topic.

Q: Your new book focuses on AI in disciplinary literacy. What is the central message?

Karchmer-Klein: Rather than positioning AI as a shortcut or replacement for student thinking, the book emphasizes a literacy-first approach that helps students critically evaluate, interrogate, and apply AI-generated information. This is important because schools and universities are grappling with rapid AI adoption, often without clear guidance grounded in learning theory, literacy research, or classroom practice.

Q: What inspired this research?

Karchmer-Klein: The book grew directly out of my work with preservice teachers, practicing educators, and school leaders who were asking practical but complex questions about AI: How do we use it responsibly? How do we prevent over-reliance? How do we teach students to question what AI produces? I also saw a gap between public conversations about AI, which often focused on fear or efficiency, and what teachers actually need: research-informed strategies that support deep learning. My long-standing research in digital literacies provided a natural foundation for addressing these questions.

Q: What are some of the key findings from your work?

Karchmer-Klein: AI is most effective when it is embedded within strong instructional design and disciplinary literacy practices, not treated as a stand-alone tool. The research and classroom examples illustrate that AI can support student learning when it is used to prompt reasoning, reveal misconceptions, provide feedback for revision, and encourage multiple perspectives. Another important finding is the emphasis on teaching students to evaluate AI outputs critically by recognizing bias, inaccuracies, and limitations, rather than assuming correctness.

Q: How could this work impact schools, teacher education programs and the broader public?

Karchmer-Klein: For educators, this work provides concrete, evidence-based literacy strategies that pair with AI in ways that strengthen, not dilute, student thinking. For teacher education programs and school districts, it offers a research-based framework for professional development and policy conversations around AI use. More broadly, the work speaks to a public concern about how emerging technologies are shaping learning, helping to reframe AI as something that requires human judgment, ethical consideration, and strong literacy skills to use well.

ABOUT RACHEL KARCHMER-KLEIN

Rachel Karchmer-Klein is an associate professor in the School of Education at the University of Delaware, where she teaches courses in literacy and educational technology at the undergraduate, graduate, and doctoral levels. She is a former elementary classroom teacher and reading specialist. Her research investigates relationships among literacy skills, digital tools, and teacher preparation, with particular emphasis on technology-infused instructional design.

To speak with Karchmer-Klein further about AI in literacy education, critical evaluation of AI-generated content and teacher preparation in the era of generative AI, reach out to MediaRelations@udel.edu.

When individuals sign up for direct-to-consumer genetic testing, the extent to which they ever think about their genetic data is likely in the context of the service for which they paid: information on predisposition to a genetic illness, or confirmation of an ethnic background, for example. But that data doesn’t just sit on a shelf, and while the most mainstream concern for such services is the privacy of your data, there is also the question of what else the companies do with it, and how. Ana Santos Rutschman, SJD, LLM, professor and faculty director of the Health Innovation Lab at Villanova University Charles Widger School of Law, is particularly interested in the latter. In June 2025, she co-authored an amicus brief centered on data protection and patient’s interests amid genetic testing company 23andMe’s bankruptcy proceedings. In December, many of those same co-authors published a paper in Nature Genetics, highlighting 23andMe’s bankruptcy as “an inflection point for the direct-to-consumer genetics market,” especially as it pertains to the broader corporate use of individuals’ scientific data. The reason? “How that data is used all depends on the policies of the individual companies,” she said. Genetic Testing Companies Use Your Data For More Than The Services You Pay For Those who utilize genetic testing companies—for any reason—are likely also consenting, often unknowingly, to other unrelated items. This includes acknowledgment of information related to how your data might be further used or monetized. “Most people don't think about secondary and tertiary uses of their data,” said Professor Rutschman. “[What they consent to] is displayed on the website somewhere, but it’s not easily understandable and accessible. It’s fine print.” Such companies often operate beyond the traditional “fee for a service” relationship with consumers. 
Yes, they will give you the information you paid for, such as whether you have German ancestry or are predisposed to a certain genetic disease. But instead of simply being stored somewhere, that genetic data is often sold for research purposes. Today, in the age of AI and big data, that might look something like this: The company puts your data in a box with parameters, along with the data of thousands of others. Perhaps it is then able to observe a pattern that was unknown until all that data was compiled. It comes up with a diagnostic or a medicine and patents it. That patent is licensed to somebody else, and the company makes money on the product. The use of that data for scientific purposes, even purposes that turn a profit, is not problematic in itself, says Professor Rutschman. “Some people may even choose a company that allows scientific research over one that doesn’t. Many people may not care, but some will. The uses are not common knowledge, and that is worrisome. The public should be well-informed about what’s happening.” Deeper problems may arise when consumers aren’t informed of those potential uses of their data. Professor Rutschman cited the infamous Henrietta Lacks case: cells taken from Lacks became, and remain, one of the most valuable cell lines in cancer research. Neither Lacks nor her family was paid for the widespread use of her genetic material until a settlement was reached long after her death. “When you have biologics involved, a concern is that if you have something potentially valuable, you may not see any money from it.”

Bankruptcy Can Cause Policy Upheaval

To understand the role bankruptcy can play in all of this, one needs to return to the power of individual company policy in this space. There are no external laws that dictate how these companies can further monetize their data, says Professor Rutschman, as long as they don’t violate other laws, such as privacy laws.
That means that when a company like 23andMe goes bankrupt, as 23andMe did in 2025, new ownership could enact completely different corporate policies for the use of its property. In this specific case, the company was essentially bought back by 23andMe founder and CEO Anne Wojcicki’s non-profit, all but ensuring policies would remain the same. But that is exactly why Professor Rutschman and others are highlighting this case. “Bankruptcy is bad in the sense that there's a lot of uncertainty,” she said. “In this instance, the person coming in was the person who was there before, so the policy is likely to continue. But that's very rare. There are a roster of companies with access to biological materials. 23andMe is a good example of something not going horribly wrong, but with the understanding that it absolutely could.” That could happen, for example, if new ownership undermined the original intent of the data use by abandoning the company’s previous policies, or charged exorbitant prices to other entities for using that data in scientific research. “Because there is no law, these new owners can essentially do as they please with their proprietary data, unless they do something incredibly careless that amounts to the level of illegal,” Professor Rutschman said. “And that is concerning.”

Onus Falls to Companies to Enact Safeguards

To keep a worst-case scenario from unfolding in a bankruptcy, Professor Rutschman points to a number of safeguards companies could enact to protect their original commitments, ensure equitable access to data for scientific research and promote fair trade. One is a company policy stating that commitments made by a previous iteration of the company must be honored if ownership is transferred.
Those could include, as the authors recommend, policies “honoring original research-oriented commitments under which the data were collected,” as well as not “enclosing the dataset for exclusive commercial use.” She also highlights the need for Fair, Reasonable, and Non-Discriminatory (FRAND) voluntary licensing commitments, which are inherently more science- and market-friendly. “Companies in many sectors have committed to this approach, and we are saying it should apply in this space as well. You’ll charge your royalty, but it can’t be a billion dollars for a data set, nor would it be done by exclusively selling to one entity. You can get that billion dollars by selling to 15, 50 or 100 companies, and from a scientific research perspective, that’s what we want. Otherwise, you have a monopoly or duopoly.

“There are a lot of different models that can be used, but ultimately what we are arguing is leaving this unaddressed is a really bad idea. It leaves everything exposed, and something bad is more likely to happen.”

The Secret to Happiness? Feeling Loved.

After more than 50 years studying close relationships, University of Rochester psychologist Harry Reis has reached a deceptively simple conclusion: Happy people feel loved. That conclusion became the jumping-off point for a new book Reis co-wrote, “How to Feel Loved: The Five Mindsets That Get You More of What Matters Most” (Harper 2026), which blends decades of research on happiness and human connection. In it, Reis and his co-author, Sonja Lyubomirsky, a psychologist at the University of California, Riverside, outline five research-backed mindsets that strengthen connection: sharing authentically, listening to people, practicing radical curiosity, approaching others with an open heart, and recognizing human complexity. The book was recently featured in The New York Times, which noted that the authors contend that giving and receiving love function together like a seesaw: You lift a person up with the weight of your curiosity and attentiveness, and they do the same in turn. “The other side is very important also,” Reis told The Times. “To be sharing what’s important to you, to be sharing what you’re concerned about, so it can really become a two-way street.”

Reis, who leads groundbreaking research on close relationships, is available to discuss:

• The science of feeling loved vs. being loved
• How digital distraction undermines connection
• AI companionship and its psychological limits
• Practical ways to build stronger, more resilient relationships
• The link between love, happiness, and health

Journalists writing about love and relationships can contact Reis by clicking on his profile.