Pope Leo XIV Faces Both Historic and Novel Challenges as He Enters the Second Year of His Papacy

In his first appearance on the balcony of St. Peter’s Basilica, Pope Leo XIV shared with the world a message of hope, communion and reconciliation, emphasizing the need to “build bridges with dialogue and encounter so we can all be one people always in peace.” Throughout the last 12 months, the Pontiff has placed these values at the forefront of his work and ministry, pairing active collaboration with prayerful contemplation in his leadership of the world’s 1.4 billion Catholics. In the coming years, that emphasis is likely to continue, as the Pope addresses longstanding rifts and evolving challenges within the Church and beyond. Asked to consider the most striking aspects of his early papacy, and to reflect on the most pressing issues he currently faces, Villanova faculty members studying the pontificate had a wide variety of responses. Jaisy A. Joseph, PhD Assistant Professor of Theology and Religious Studies For Dr. Joseph, Pope Leo’s first year has been defined by a spiritual vision centered on unity, listening and shared responsibility. “From the beginning of his papacy, Leo emphasized that we are a synodal Church working towards peace and moving forward together. Leo’s Augustinian formation will absolutely leave its imprint on what Pope Francis started. While the two have distinct personalities and styles, there is a fundamental continuity with Francis that Leo has signaled. Leo stresses that at the core of the Church is a deeper desire for a spirituality of ‘we’—a Church rooted in deep listening and bold speaking. This is where the Augustinian charism makes itself known. “This unity does not erase differences. Instead, it asks, ‘How do we create friendships that are strong enough to bear the tensions of our differences?’ In a world shaped by ‘us versus them,’ Leo insists on recognizing Christ in the completely different ‘other.’ “Finally, his leadership style is marked by discernment. 
Listening is so critical to him, and any caution he displays is not out of fear but wanting to listen before speaking. In a noisy world, he insists that we just need silence—trusting that through shared listening, the Church can move forward together.” Luca Cottini, PhD Professor of Italian Studies For Dr. Cottini, Pope Leo’s first year has been marked by a clear effort to position the Church in active dialogue with the modern world—especially in response to emerging global challenges, migration and an increasingly interconnected faith community. He draws parallels to the priorities of Leo XIV’s namesake, Pope Leo XIII. “Catholic social doctrine is a doctrine that the Church established to address subjects that are not directly written about in the Gospel. This doctrine was important for Pope Leo XIII and is increasingly important for Leo XIV as well. ‘Leo’ is a name that relates back to Catholic social doctrine and the need to read the changing signs of the times. By choosing the name ‘Leo,’ the Pope signaled his desire to respond to contemporary issues. “Leo XIV has also harkened back to Leo XIII in his first year by viewing migration and immigration not as a plight, but rather as an opportunity to enter into contact with new worlds. This approach connects to Leo XIV’s own background and perspective, which includes both proximity to and distance from the United States, giving him both an outsider and insider perspective as well as a critical thinking lens on these issues. “Lastly, Leo XIV has used his first year to elevate this idea of a universal Church that is much needed, shaped by his global exposure and an ability to see the world through the lens of others. He sees that we can dialogue with the world, approaching modernity not as an enemy but as something to engage with.” Patrick McKinley Brennan, JD John F. 
Scarpa Chair in Catholic Legal Studies According to Professor Brennan, “One of the issues that is on the Pope’s radar and has been from before the conclave is the question of the traditional Latin Mass,” a cause championed by various cardinals, bishops, priests and lay faithful around the globe. As he shares, it is a matter of great interest to a small but growing number of Catholics who recall Pope Benedict XVI’s statement that the traditional Mass—the Mass as it was celebrated by most Catholics since 1570—was “never juridically abrogated” following the Second Vatican Council. “Pope John Paul II in the 1980s, and then Pope Benedict XVI in 2007, liberalized access around the world to the traditional Mass. But Pope Francis revoked most of those permissions, citing ‘facts’ that have subsequently been called into question by investigative journalists and others. Pope Francis issued a document called Traditionis custodes, which [went against] the permissions that Benedict XVI gave in a document called Summorum pontificum in July 2007. “Now, the leadership of the Society of St. Pius X [an anti-modernist priestly fraternity] have announced that they’re going to ordain new bishops, the exact thing that got some of their predecessors excommunicated in 1988, so that the traditional Mass can continue to be celebrated and other sacraments can continue to be provided to Catholics according to the traditional rites. Reading between the lines, I think the Society of St. Pius X is trying to force Pope Leo’s hand on the Latin Mass. He’s been biding his time, working out how to respond to this hard question, and I think they’ve just decided that it’s an all-or-nothing situation. 
“It’s an example of how Pope Leo inherited some big problems, and I think most of the cardinals who elected him thought that they had chosen someone who, because he can listen and is committed to unity, will try his very best to find a solution that remains faithful to Catholic doctrine while bringing in as many voices as possible. Ironically, Pope Francis reduced legitimate diversity in Catholic liturgy, and while Pope Leo has a chance to restore that diversity, he has to do so in a way that addresses the irregular situation of the Society of St. Pius X.” Ilia Delio, OSF, PhD Josephine C. Connelly Endowed Chair in Christian Theology Looking ahead, Sister Delio says one of the most significant social developments Pope Leo must face is the rise of advanced technologies—in particular, increasingly sophisticated artificial intelligence models. “Our theological anthropology needs a bit of updating, as it does not currently meet the needs of our very complex world today. There are a lot of discussions on artificial intelligence and advanced technology, but the problem is that these technologies are already here and rapidly advancing. “So, we have to face this reality, not by asking ‘What is happening to us?’ but ‘What are we becoming with our technologies?’ and ‘How best can we remain human in an AI world?’ I think Pope Leo is asking similar questions, considering what makes the human person the image of God, what makes us distinct and whether there are human values that cannot be downloaded or reproduced in a digital medium. “At the same time, we must ask: Can technology deepen the human spirit by enabling a new level of collective life? Can AI technology empower the Body of Christ?” To speak with any of these faculty experts, please contact mediaexperts@villanova.edu.

New AI tool matches students with high-impact internships

Finding the right internship can be an important step for students, but it’s not always clear which opportunities will lead to the strongest growth. To help solve that problem, University of Florida researchers have developed an AI-powered tool that helps students identify internships most likely to accelerate their technical and professional development. Unlike traditional recommendation engines, Pro-CaRE not only predicts which opportunities will lead to stronger outcomes, it also explains why each suggestion is a good fit. In testing data collected from the students, Pro-CaRE’s predictions proved highly accurate, accounting for more than 72% of the differences in learning gains among participants. While the pilot is being tested in engineering, the tool could be adopted for other disciplines. “Internships are one of the most critical parts of an engineering education, but students often struggle to know which experiences will actually help them grow,” said Jinnie Shin, assistant professor of research and evaluation methodology in the UF College of Education. “What makes Pro-CaRE unique is that it doesn’t just offer a list of options. It provides personalized recommendations backed by data and it tells students clearly why an opportunity is a good match for them.” Pro-CaRE creates matches by analyzing each student’s coursework, major, background and self-reported interest, confidence and self-efficacy in engineering skills. It then compares that profile with a carefully chosen set of similar peers to refine suggestions. The result is more precise guidance that adapts to students at different stages of their degree programs. “Students shouldn’t have to guess or hope that an internship will be worthwhile,” Shin said. 
“With Pro-CaRE, they can approach opportunities knowing they’re backed by evidence, whether the role is onsite, hybrid or remote and whether it’s at a startup or a Fortune 500 company.” The system is designed to work across a wide range of companies and contexts, giving students flexibility while ensuring their choices align with their personal and professional goals. Each recommendation comes with a clear “why this?” explanation, so students can make confident decisions and discuss options more effectively with advisors. Pro-CaRE was developed by a cross-disciplinary UF team combining expertise in education and engineering. Alongside Shin, the project’s co-principal investigators include Kent Crippen in the College of Education and Bruce Carroll in the Herbert Wertheim College of Engineering. The team is exploring external funding opportunities to expand the usage and test the efficacy on a larger scale. “Ultimately, our goal is to empower students to invest their time in experiences that will have the greatest impact,” Shin said. “Pro-CaRE bridges the gap between what students hope to gain and what internships can truly deliver.”
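The claim that Pro-CaRE's predictions account for more than 72% of the differences in learning gains reads like a coefficient of determination (R²) above 0.72, although the article does not name the metric. As a rough sketch under that assumption, the Python snippet below computes R² for a handful of made-up numbers. The function name `r_squared` and the data are hypothetical and are not drawn from the UF study.

```python
def r_squared(actual, predicted):
    """Share of the variance in `actual` that `predicted` accounts for."""
    mean_actual = sum(actual) / len(actual)
    # Residual sum of squares: how far the predictions miss the real values.
    ss_res = sum((a - p) ** 2 for a, p in zip(actual, predicted))
    # Total sum of squares: how far the real values spread around their mean.
    ss_tot = sum((a - mean_actual) ** 2 for a in actual)
    return 1 - ss_res / ss_tot


# Hypothetical learning gains for five students (illustrative only).
actual_gains = [1, 2, 3, 4, 5]
predicted_gains = [2, 2, 3, 4, 4]

score = r_squared(actual_gains, predicted_gains)
print(round(score, 2))  # about 0.8 for this toy data
```

An R² of 1.0 would mean the predictions match the outcomes exactly, while 0 would mean they do no better than always guessing the average gain.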

Using AI tools empowers and burdens users in online Q&A communities

Whether you’ve searched for cooking tips on Reddit, troubleshot tech problems on community forums or asked questions on platforms like Quora, you’ve benefited from online help communities. These digital spaces rely on people across the world to contribute their knowledge for free, and have become an essential tool for solving problems and learning new skills. New research reveals that generative artificial intelligence tools like ChatGPT are having a double-edged effect on users in these communities, simultaneously making them more helpful while potentially overwhelming them to the point of decreasing their responses. “On the positive side, AI helps users learn to write more organized and readable answers, leading to a noticeable increase in the number of responses,” explained Liangfei Qiu, Ph.D., study coauthor and PricewaterhouseCoopers Professor at the University of Florida Warrington College of Business. “However, when users rely too heavily on AI, the mental effort required to process and refine AI outputs can actually reduce participation. In other words, AI both empowers and burdens contributors: it enables more engagement and better readability, but too much reliance can slow people down.” The study examined Stack Overflow, one of the world’s largest question-and-answer platforms for computer programmers, to investigate the impact of generative AI on both the quality and quantity of user contributions. Qiu and his coauthor Guohou Shan of Northeastern University’s D’Amore-McKim School of Business measured the impact of AI on the number of answers users generated per day, as well as on answer length and readability. Specifically, they found that users who used AI tools to generate their responses contributed almost 17% more answers per day than those who didn’t use AI. The answers generated with AI were both about 23% shorter and easier to read. However, when people relied too heavily on AI tools, their participation decreased.
Qiu and Shan noted that the additional cognitive burden associated with heavier AI usage negatively affected a user’s answer quality. For online help communities grappling with AI policies, this research provides valuable insight into how these policies can be updated in the current AI environment. While some communities, like Stack Overflow, have banned AI tools, this research suggests that a more nuanced approach could be a better solution. Instead of banning AI entirely, the researchers suggest striking a balance between allowing AI usage and promoting responsible, moderated use. This approach, they argue, would enable users to benefit from efficiency and learning opportunities without compromising content quality or user cognition. “For platform leaders, the takeaway is clear: AI can boost participation if thoughtfully integrated, but its cognitive demands must be managed to sustain long-term user contributions,” Qiu said.

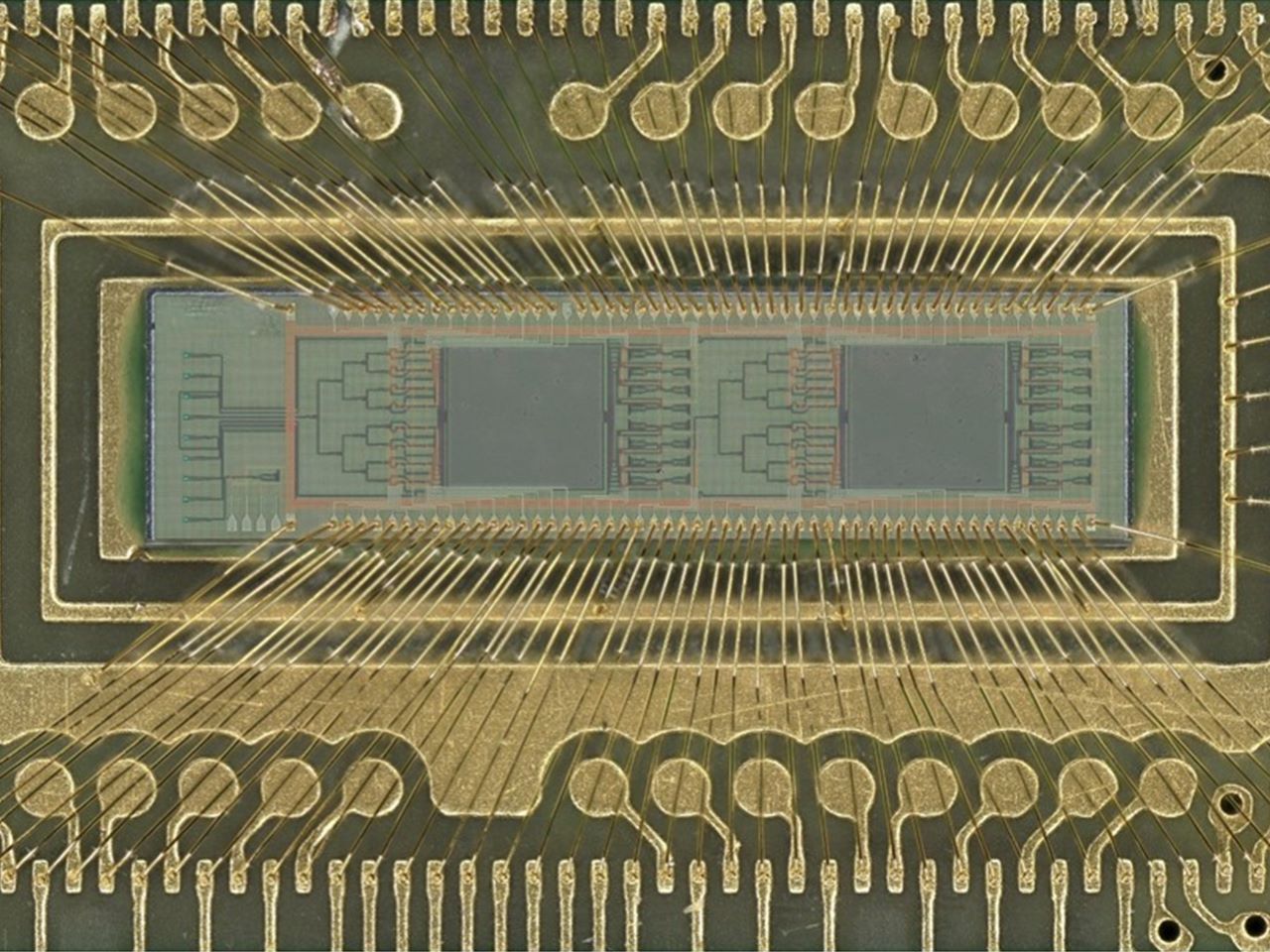

New light-based chip boosts power efficiency of AI tasks 100-fold

A team of engineers has developed a new kind of computer chip that uses light instead of electricity to perform one of the most power-intensive parts of artificial intelligence: image recognition and similar pattern-finding tasks. Using light dramatically cuts the power needed to perform these tasks, with efficiency 10 or even 100 times that of current chips performing the same calculations. This approach could help rein in the enormous demand for electricity that is straining power grids and enable higher-performance AI models and systems. The underlying machine learning operation, called “convolution,” is at the heart of how AI systems process pictures, videos and even language. Convolution operations currently require large amounts of computing resources and time. These new chips, though, use lasers and microscopic lenses fabricated onto circuit boards to perform convolutions with far less power and at faster speeds. In tests, the new chip successfully classified handwritten digits with about 98% accuracy, on par with traditional chips. “Performing a key machine learning computation at near zero energy is a leap forward for future AI systems,” said study leader Volker J. Sorger, Ph.D., the Rhines Endowed Professor in Semiconductor Photonics at the University of Florida. “This is critical to keep scaling up AI capabilities in years to come.” “This is the first time anyone has put this type of optical computation on a chip and applied it to an AI neural network,” said Hangbo Yang, Ph.D., a research associate professor in Sorger’s group at UF and co-author of the study. Sorger’s team collaborated on the study with researchers at UF’s Florida Semiconductor Institute, the University of California, Los Angeles and George Washington University. The team published their findings, which were supported by the Office of Naval Research, Sept. 8 in the journal Advanced Photonics. The prototype chip uses two sets of miniature Fresnel lenses made using standard manufacturing processes.
These two-dimensional versions of the same lenses found in lighthouses are just a fraction of the width of a human hair. Machine learning data, such as from an image or other pattern-recognition tasks, are converted into laser light on-chip and passed through the lenses. The results are then converted back into a digital signal to complete the AI task. This lens-based convolution system is not only more computationally efficient, but it also reduces the computing time. Using light instead of electricity has other benefits, too. Sorger’s group designed a chip that could use different colored lasers to process multiple data streams in parallel. “We can have multiple wavelengths, or colors, of light passing through the lens at the same time,” Yang said. “That’s a key advantage of photonics.” Chip manufacturers, such as industry leader NVIDIA, already incorporate optical elements into other parts of their AI systems, which could make the addition of convolution lenses more seamless. “In the near future, chip-based optics will become a key part of every AI chip we use daily,” said Sorger, who is also deputy director for strategic initiatives at the Florida Semiconductor Institute. “And optical AI computing is next.”
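For readers unfamiliar with the convolution operation described above, the following Python sketch shows what it computes in conventional digital form: a small kernel slides across an image and produces a weighted sum at each position. It only illustrates the math and is not the chip's optical method. The hand-written function `convolve2d`, the toy image and the kernel are assumptions made for this example.

```python
def convolve2d(image, kernel):
    """Plain 2D convolution on nested lists, without padding ("valid" mode)."""
    kh, kw = len(kernel), len(kernel[0])
    ih, iw = len(image), len(image[0])
    # Flip the kernel in both directions; this is what distinguishes
    # convolution from cross-correlation.
    flipped = [row[::-1] for row in kernel[::-1]]
    output = []
    for r in range(ih - kh + 1):
        out_row = []
        for c in range(iw - kw + 1):
            # Weighted sum of the image patch under the kernel.
            acc = 0
            for i in range(kh):
                for j in range(kw):
                    acc += image[r + i][c + j] * flipped[i][j]
            out_row.append(acc)
        output.append(out_row)
    return output


# Hypothetical 4x4 "image": dark on the left, bright on the right.
image = [
    [0, 0, 1, 1],
    [0, 0, 1, 1],
    [0, 0, 1, 1],
    [0, 0, 1, 1],
]
# A simple horizontal-difference kernel that responds to vertical edges.
kernel = [[1, -1]]

result = convolve2d(image, kernel)
for row in result:
    print(row)  # each row prints [0, 1, 0]: the edge shows up in the middle column
```

Even this tiny case needs a multiply and an add for every kernel entry at every output position, which hints at why convolutions over full-size images consume so much computing power on conventional chips.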

Delaware emerges as a test bed for the future of AI in health care

Delaware is positioning itself as a “living lab” where academia, health systems and government collaborate to shape the future of artificial-intelligence-enabled health care. The latest issue of the Delaware Journal of Public Health, guest edited by University of Delaware computer scientists Weisong Shi and Yixiang Deng, brings together 16 articles from researchers, clinicians, policymakers and industry leaders examining how AI and big data are reshaping health care. The issue, debuting this month, balances Delaware-specific topics with broader perspectives, highlighting three levels of impact: what Delaware can expect in the coming years, what other states can learn from Delaware’s approach and how UD research is advancing AI for health through collaborations. “At UD, we don’t work in isolation. We’re working closely with health care systems so that innovation happens together from the beginning,” says Shi, Alumni Distinguished Professor and Chair of UD’s Department of Computer and Information Sciences. Highlights from the issue include: The nation’s first nursing fellowship in robotics – ChristianaCare, Delaware’s largest health system, created an eight-month fellowship to train bedside nurses to conduct applied robotics research. Nurses who completed the program reported higher job satisfaction, improved well-being and greater professional confidence, suggesting programs like this may help retain the bedside workforce and reduce nationwide staffing shortages. Wheelchairs that navigate hospitals on their own – UD researchers developed a prototype autonomous wheelchair that combines onboard sensors and computing with software that interprets spoken directions from users, a step toward moving beyond systems that only work in controlled environments. To operate effectively in health care settings, the researchers say, wheelchairs must be able to navigate crowded hallways, interact with doors and elevators and recover safely when sensors or navigation systems fail. 
Smarter insulin dosing for type 1 diabetes – Researchers are developing computer models to predict blood sugar (glucose) trends and guide insulin delivery, but must address issues such as noisy data, reliable real-time prediction and the computational limits of wearable devices. A review by UD researchers and colleagues emphasizes the importance of interdisciplinary collaboration, standardized datasets, advances in computational infrastructure and clinical validation to turn these models into practical tools that improve patient care. To interview Shi about AI in health care and the new DJPH issue, click his profile or email MediaRelations@udel.edu. ABOUT WEISONG SHI Weisong Shi is an Alumni Distinguished Professor and Chair of the Department of Computer and Information Sciences at the University of Delaware. He leads the Connected and Autonomous Research Laboratory. He is an internationally renowned expert in edge computing, autonomous driving and connected health. His pioneering paper, “Edge Computing: Vision and Challenges,” has been cited over 10,000 times.

AI in the classroom: What parents need to know

As students return to classrooms, Maya Israel, professor of educational technology and computer science education at the University of Florida, shares insights on best practices for AI use for students in K-12. She also serves as the director of the CSEveryone Center for Computer Science Education at UF, a program created to boost teachers’ capabilities around computer science and AI in education. Israel also leads the Florida K-12 Education Task Force, a group committed to empowering educators, students, families and administrators by harnessing the transformative potential of AI in K-12 classrooms, prioritizing safety, privacy, access and fairness.

How are K–12 students using AI in classrooms?
Students use AI in classrooms in a wide range of ways. The approach depends on several factors, including district policies, student age and the teacher’s instructional goals. Some districts restrict AI to teacher use only, such as creating custom reading passages for younger students. Others allow older students to use tools to check grammar, create visuals or run science simulations. Even then, skilled teachers frame AI as one tool, not a replacement for student thinking and effort.

What are examples of age-appropriate tools that enhance learning?
AI tools can be used to either enhance or erode learner agency and critical thinking. It is up to educators to consider how these tools can be used appropriately. It is critical to use AI tools in a manner that supports learning, creativity and problem solving rather than bypassing critical thinking. For example, Canva lets students create infographics, posters and videos to show understanding. Google’s Teachable Machine helps students learn AI concepts by training their own image-recognition models. These types of AI-augmented tools work best when they are embedded into activities such as project-based learning, where AI supports learning and critical thinking.

How do teachers ensure AI supports core skills?
While AI can be incredibly helpful in supporting learning, it should not be a shortcut that allows students to bypass learning. Teachers should design learning opportunities that integrate AI in a manner that encourages critical thinking. For example, if students are using AI to support their mathematical understanding, teachers should ask them to explain their reasoning, engage in discussions and attempt to solve problems in different ways. Teachers can ask students questions like, “Does that answer make sense based on what you know?” or “Why do you think [said AI tool] made that suggestion?” This type of reflection reinforces the message that learning does not happen through getting fast answers. Learning happens through exploration, productive struggle and collaboration.

Many parents worry that using AI might make students too dependent on technology. How do educators address that concern?
This is a very valid concern. Over-reliance on AI can erode independence and critical thinking. That’s why teachers should be intentional in how they use AI for teaching and learning. Educators can address this concern by sharing with parents their policies and approaches to using AI with students. This can include providing clear expectations of when AI is used; designing assignments that require critical thinking, personal reflection and reasoning; and teaching students the metacognitive skills to self-assess how and when to use AI so that it supports learning rather than serving as a crutch.

How do schools ensure that students still develop original thinking and creativity when using AI for assignments or projects?
In the age of AI, there is a need to be even more intentional in designing learning experiences where students engage in creative and critical thinking. One practice that has been shown to support this is project-based learning, where students must create, iterate and evaluate ideas based on feedback from their peers and teachers. AI can help students gather ideas or organize research, but the students must ask the questions, synthesize information and produce original ideas. Assessments and rubrics should emphasize skills such as reasoning, process and creativity rather than just focusing on the final product. That way, although AI can play a role in instruction, the goal is to design instructional activities that move beyond what the AI can do.

How do educators help students understand when it’s appropriate to use AI in their schoolwork?
In the age of AI, educators should help students develop the skills to be original thinkers who can use AI thoughtfully and responsibly. Educators can help students understand when to use AI in their schoolwork by directly embedding AI literacy into their instruction. AI literacy includes having discussions about the capabilities and limitations of AI, ethical considerations and the importance of students’ agency and original thoughts. Additionally, clear guidelines and policies help students navigate some of the gray areas of AI usage.

What guidance should parents give at home?
There are several key messages that parents should give their children about the use of AI. The most important message is that even though AI is powerful, it does not replace their judgment, creativity or empathy. Even though AI can provide fast answers, it is important for students to learn the skills themselves. Another key message is to know the rules about AI in the classroom. Parents should also speak with their children about the mental health implications of over-reliance on AI. When students turn to AI-augmented tools for every answer or idea, they can gradually lose confidence in their own problem-solving abilities. Instead, students should learn how to use AI in ways that strengthen their skills and build independence.

AI gives rise to the cut and paste employee

Although AI tools can improve productivity, recent studies show that they too often intensify workloads instead of reducing them, in many cases even leading to cognitive overload and burnout. The University of Delaware's Saleem Mistry says this is creating employees who work harder, not smarter.

Mistry, an associate professor of management in UD's Lerner College of Business & Economics, says his research confirms findings reported in a Feb. 9, 2026, article in the Harvard Business Review. Driven by the misconception that AI is an accurate search engine rather than a predictive text tool, these "cut and paste" employees are using the applications to pump out deliverables in seconds just to keep up with increasing workloads. Mistry notes that this prioritization of speed over accuracy is happening at every level of the organization:

• Junior staff: Blast out polished-looking but unverified drafts.
• Managers: Outsource their ability to deeply learn and critically think in order to summarize data, letting their analytical skills atrophy.
• Power users: Build hidden, unapproved systems that bypass company oversight.

A management problem, not a tech problem

"When discussing this issue, I often hear leaders blame the technology. However, I believe that blaming the tech is missing the point; I see it as a failure of leadership," Mistry said. "When already overburdened employees who are constantly having to do more with less are handed vague mandates to just use AI without any training, they use it to look busy and produce volume-based work. Because many companies still reward the volume of work produced rather than the actual impact, employees naturally use these tools to generate slick but empty deliverables."

The real costs to organizations and incoming employees

Mistry outlines three risks organizations face if they don’t intervene:

1. The workslop epidemic
"These programs allow people to generate massive amounts of workslop, which is low-effort fluff that looks good but lacks substance. It takes seconds to create, but hours for someone else to decipher, fact-check, and fix," Mistry notes. "This drains money (up to $9 million annually for large companies) and destroys morale. As an educator, researcher, and a person brought into organizations to help fix problems, I for one do not want to be on the receiving end of a thoughtless, automated data dump, especially on tasks that require real skill and deep thinking."

2. Legal disaster
He also states, "When the cut and paste mentality makes its way into professional submissions, the risks to the organization are real and oftentimes catastrophic. Courts have made it perfectly clear: ignorance is no excuse. If your name is on the document, you own the liability. Recently, attorneys have faced severe sanctions, hefty fines, and case dismissals for blindly submitting fake legal citations made up by computers."

3. A warning for incoming talent
For new graduates entering this environment, Mistry offers a warning: Do not rely on AI to do your deep thinking. "If you simply use AI to blast out polished but unverified drafts, you become a replaceable 'cut and paste' employee," he says. "To truly stand out, new grads must prove they have the discernment to review, tweak, and challenge what the computer writes. The hiring edge is no longer just saying, 'I can do this task,' but 'I know how to leverage and correct AI to help me perform it.'"

Four ideas to fix it

To survive and indeed thrive with these new tools and avoid the unintended consequences of untrained staff, organizations should:

1. Reinforce the importance of fact-checking and editing: Adopt frameworks that teach employees how to show their work and log how they verified computer-generated facts.
2. Change the incentives: Stop rewarding busy work, useless reports, and massive slide decks. Evaluate employees on accuracy and results.
3. Eradicate superficial work: Don’t use automation to speed up ineffective legacy processes. Instead, use it to identify and eliminate them entirely.
4. Make time for editing: Give yourself and your employees the breathing room to actually review, tweak, and challenge what the computer writes instead of accepting the first draft.

Mistry is available to discuss:

• Why AI is causing an epidemic of corporate "workslop" (and how to spot it).
• The leadership failure behind the "cut and paste" employee.
• How to rewrite corporate incentives to measure impact instead of volume in the AI era.
• Strategies for implementing safe, effective AI policies at work.
• How new college graduates can avoid the "workslop" trap in their first jobs.

To reach Mistry directly and arrange an interview, visit his profile and click on the "contact" button. Interested reporters can also send an email to MediaRelations@udel.edu.

VCU College of Engineering receives $600,000 for AI-driven cybersecurity research

To advance AI-enabled cybersecurity research, the National Science Foundation (NSF) presented Kemal Akkaya, Ph.D., professor and chair of the Department of Computer Science, with a $600,000 grant through the organization’s Cybersecurity Innovation for Cyberinfrastructure program. Akkaya’s three-year project will explore how large language models (LLMs) can automate packet labeling for intrusion detection systems.

“From transportation and healthcare to finance, improving the accuracy of machine learning algorithms used to defend the networks that underpin these sectors’ cyberinfrastructure is critical for protecting them from cyberattacks. Strengthening these defenses helps ensure the reliability and security of the essential services people rely on every day,” said Akkaya.

Intrusion detection systems monitor network traffic to identify suspicious or malicious activity. These systems rely on machine learning models trained on large volumes of accurately labeled data. Producing those datasets, however, is time-intensive and often requires expert cybersecurity knowledge.

As digital systems increasingly power transportation, health care, finance and communication, the volume and sophistication of cyberattacks continue to grow. At the same time, artificial intelligence is reshaping how both attackers and defenders operate. Improving how quickly and accurately security systems can be trained is critical to protecting the infrastructure that supports daily life.

Akkaya’s project will investigate how generative AI can help address this challenge. The team will fine-tune open-source large language models using network data, threat signatures and expert annotations. Model accuracy will be strengthened through retrieval-augmented refinement, ensemble modeling and human-in-the-loop verification. Labeled datasets will be released in stages to support the development and evaluation of cybersecurity models.

The project uses data from AmLight, an international research and education network operated by Florida International University (FIU), and includes collaboration with FIU researchers. The award strengthens VCU’s growing leadership in AI-enabled cybersecurity research and provides hands-on research training for graduate students. Datasets resulting from this work will also support machine learning education for undergraduate students.

The AI In Action Symposium, hosted by the LSU E. J. Ourso College of Business, brings together expert voices at the heart of the AI revolution to explore how they have successfully navigated this evolving landscape. The 2026 symposium focuses on the practical implications of AI in business, including hiring AI-ready talent, ensuring responsible and ethical use, and exploring the challenges of implementing AI across both large enterprises and small startups.

Speakers
Attendees will hear from Louisiana leaders and national AI experts, including…
Secretary Bruce Greenstein of the Louisiana Department of Health
April Wiley, Senior Vice President at Community Coffee
Robert Veit and Julian Tandler from Scale Team Six, a San Francisco-based business accelerator
Dr. Tonya Jagneaux, who leads medical analytics at the Franciscan Missionaries of Our Lady Health System (FMOLHS)
Hunter Thevis, president and co-founder of Lafayette-based S1 Technology
…and many more!

Details
March 20, 2026, 8:00 a.m. – 1:00 p.m.
Registration deadline is March 15.
Held on the LSU A&M Campus, in the LSU Student Union
Register at lsu.edu/business/ai-symposium
Discount available for LSU System employees

Is writing with AI at work undermining your credibility?

With over 75% of professionals using AI in their daily work, writing and editing messages with tools like ChatGPT, Gemini, Copilot or Claude has become a commonplace practice. While generative AI tools are seen to make writing easier, are they effective for communicating between managers and employees?

A new study of 1,100 professionals reveals a critical paradox in workplace communications: AI tools can make managers’ emails more professional, but regular use can undermine trust between them and their employees.

“We see a tension between perceptions of message quality and perceptions of the sender,” said Anthony Coman, Ph.D., a researcher at the University of Florida’s Warrington College of Business and study co-author. “Despite positive impressions of professionalism in AI-assisted writing, managers who use AI for routine communication tasks put their trustworthiness at risk when using medium- to high-levels of AI assistance.”

In the study published in the International Journal of Business Communication, Coman and his co-author, Peter Cardon, Ph.D., of the University of Southern California, surveyed professionals about how they viewed emails that they were told were written with low, medium and high AI assistance. Survey participants were asked to evaluate different AI-written versions of a congratulatory message on both their perception of the message content and their perception of the sender.

While AI-assisted writing was generally seen as efficient, effective, and professional, Coman and Cardon found a “perception gap” in messages that were written by managers versus those written by employees.

“When people evaluate their own use of AI, they tend to rate their use similarly across low, medium and high levels of assistance,” Coman explained. “However, when rating others’ use, magnitude becomes important. Overall, professionals view their own AI use leniently, yet they are more skeptical of the same levels of assistance when used by supervisors.”

While low levels of AI help, like grammar or editing, were generally acceptable, higher levels of assistance triggered negative perceptions. The perception gap is especially significant when employees perceive higher levels of AI writing, bringing into question the authorship, integrity, caring and competency of their manager.

The impact on trust was substantial: Only 40% to 52% of employees viewed supervisors as sincere when they used high levels of AI, compared to 83% for low-assistance messages. Similarly, while 95% found low-AI supervisor messages professional, this dropped to 69% to 73% when supervisors relied heavily on AI tools.

The findings reveal employees can often detect AI-generated content and interpret its use as laziness or lack of caring. When supervisors rely heavily on AI for messages like team congratulations or motivational communications, employees perceive them as less sincere and question their leadership abilities.

“In some cases, AI-assisted writing can undermine perceptions of traits linked to a supervisor’s trustworthiness,” Coman noted, specifically citing impacts on perceived ability and integrity, both key components of cognitive-based trust.

The study suggests managers should carefully consider message type, level of AI assistance and relational context before using AI in their writing. While AI may be appropriate and professionally received for informational or routine communications, like meeting reminders or factual announcements, relationship-oriented messages requiring empathy, praise, congratulations, motivation or personal feedback are better handled with minimal technological intervention.