
Rethinking AI in the classroom: A literacy-first approach to generative technology

As schools nationwide navigate the rapid rise of generative artificial intelligence, educators are searching for guidance that goes beyond fear, hype or quick fixes. Rachel Karchmer-Klein, associate professor of literacy education at the University of Delaware, is helping lead that conversation. Her latest book, Putting AI to Work in Disciplinary Literacy: Shifting Mindsets and Guiding Classroom Instruction, offers research-based strategies for integrating AI into secondary classrooms without sacrificing critical thinking or deep learning. Here is how she is approaching the complex topic. Q: Your new book focuses on AI in disciplinary literacy. What is the central message? Karchmer-Klein: Rather than positioning AI as a shortcut or replacement for student thinking, the book emphasizes a literacy-first approach that helps students critically evaluate, interrogate, and apply AI-generated information. This is important because schools and universities are grappling with rapid AI adoption, often without clear guidance grounded in learning theory, literacy research, or classroom practice. Q: What inspired this research? Karchmer-Klein: The book grew directly out of my work with preservice teachers, practicing educators, and school leaders who were asking practical but complex questions about AI: How do we use it responsibly? How do we prevent over-reliance? How do we teach students to question what AI produces? I also saw a gap between public conversations about AI which often focused on fear or efficiency and what teachers actually need: research-informed strategies that support deep learning. My long-standing research in digital literacies provided a natural foundation for addressing these questions. Q: What are some of the key findings from your work? Karchmer-Klein: AI is most effective when it is embedded within strong instructional design and disciplinary literacy practices, not treated as a stand-alone tool. 
The research and classroom examples illustrate that AI can support student learning when it is used to prompt reasoning, reveal misconceptions, provide feedback for revision, and encourage multiple perspectives. Another important development is the emphasis on teaching students to evaluate AI outputs critically by recognizing bias, inaccuracies, and limitations, rather than assuming correctness. Q: How could this work impact schools, teacher education programs and the broader public? Karchmer-Klein: For educators, this work provides concrete, evidence-based literacy strategies coupled with AI in ways that strengthen, not dilute, student thinking. For teacher education programs and school districts, it offers a research-based framework for professional development and policy conversations around AI use. More broadly, the work speaks to a public concern about how emerging technologies are shaping learning, helping to reframe AI as something that requires human judgment, ethical consideration, and strong literacy skills to use well. ABOUT RACHEL KARCHMER-KLEIN Rachel Karchmer-Klein is an associate professor in the School of Education at the University of Delaware where she teaches courses in literacy and educational technology at the undergraduate, graduate, and doctoral levels. She is a former elementary classroom teacher and reading specialist. Her research investigates relationships among literacy skills, digital tools, and teacher preparation, with particular emphasis on technology-infused instructional design. To speak with Karchmer-Klein further about AI in literacy education, critical evaluation of AI-generated content and teacher preparation in the era of generative AI, reach out to MediaRelations@udel.edu.

How Higher Ed Should Tackle AI

Higher learning in the age of artificial intelligence isn’t about policing AI, but rather reinventing education around the new technology, says Chris Kanan, an associate professor of computer science at the University of Rochester and an expert in artificial intelligence and deep learning. “The cost of misusing AI is not students cheating, it’s knowledge loss,” says Kanan. “My core worry is that students can deprive themselves of knowledge while still producing ‘acceptable work.’” Kanan, who writes about and studies artificial intelligence, is helping to shape one of the most urgent debates in academia today: how universities should respond to the disruptive force of AI. In his latest essay on the topic, Kanan laments that many universities consider AI “a writing problem,” noting that student writing is where faculty first felt the force of artificial intelligence. But, he argues, treating student use of AI as something to be detected or banned misunderstands the technological shift at hand. “Treating AI as ‘writing-tech’ is like treating electricity as ‘better candles,’” he writes. “The deeper issue is not prose quality or plagiarism detection,” he continues. “The deeper issue is that AI has become a general-purpose interface to knowledge work: coding, data analysis, tutoring, research synthesis, design, simulation, persuasion, workflow automation, and (increasingly) agent-like delegation.” That, he says, forces a change in pedagogy. What Higher Ed Needs to Do His essay points to universities that are “doing AI right,” including hiring distinguished artificial intelligence experts in key administrative leadership roles and making AI competency a graduation requirement. Kanan outlines structural changes he believes need to take place in institutions of higher learning.

• Rework assessment so it measures understanding in an AI-rich environment.
• Teach verification habits.
• Build explicit norms for attribution, privacy, and appropriate use.
• Create top-down leadership so AI strategy is coherent and not fractured among departments.
• Deliver AI literacy across the entire curriculum.
• Offer deep AI degrees for students who will build the systems everyone else will use.

For journalists covering AI’s impact on education, technology, workforce development, or institutional change, Kanan offers a research-based, forward-looking perspective grounded in both technical expertise and a deep commitment to the mission of learning. Connect with him by clicking on his profile.

One AI-based advancement at a time, UF leaders are transforming the sports industry

As emerging technologies like AI reshape sport industries and professional demands evolve, it is essential for students to graduate with the expertise to thrive in their future careers. To ensure that these students are set up for success, the UF College of Health & Human Performance has launched a new sports analytics program. Led by Scott Nestler, Ph.D., CAP, PStat, a professor of practice in the Department of Sport Management and a national analytics and data science expert, the program ties back to the UF & Sport Collaborative – a five-part project intended to elevate UF’s presence on the global stage in sports performance, healthcare and communication. “Tools and insights that previously were only available to professional sports teams are now coming to the college level, and it makes sense for universities to begin using these data, technologies and new analytic methods,” Nestler said. The sports analytics program fosters collaboration between academic units, such as the Warrington College of Business and the University Athletic Association, helping bridge the gap between sport research and innovation and empowering students to address real-world challenges through data and AI. For example, the program offers opportunities to leverage technology and analytics for strategic decision making in player acquisition, team formation and in-game decisions. Beyond performance metrics, the program also explores marketing strategies and revenue analytics, providing a well-rounded understanding of the field. “When you have enough data and a large enough sample of individuals, AI can help make predictions that otherwise would take prohibitively longer for a human to accomplish with traditional methods,” said Garrett Beatty, Ph.D., the assistant dean for innovation and entrepreneurship and an instructional associate professor in the College of Health & Human Performance’s Department of Applied Physiology and Kinesiology. 
“Because those data volumes are getting so large, AI models, machine learning, deep learning and other strategies can be leveraged to make sense and glean insights from sport and human performance data in ways that have never been done before.” The program seeks to offer several educational opportunities, such as individual courses, certificate programs and potentially a full degree program. In the long term, Nestler envisions the program evolving into a center or institute, beginning with establishing a research lab in the spring. Additionally, the program will leverage the university’s supercomputer, HiPerGator, to analyze larger data sets and use newer predictive modeling machine learning algorithms. “As faculty and staff move from working with box score and play-by-play data to using tracking data, which contains coordinates of all players and the ball on the field or court tens of times per second, the size of data files in sports analytics has grown tremendously,” Nestler said. “HiPerGator, with its large storage capacity and multiple central processing units/graphic processing units, is ideal for using in sports analytics work in 2025.” Nestler also aims to increase student involvement by enhancing UF’s Sport Analytics Club and hiring research assistants to work on projects for the University Athletic Association. “We need to take a broader view of what AI is and realize that it incorporates a lot of what we’ve been calling data science and analytics in the form of machine learning models, which came more out of statistics and computer science. Those are types of AI and those that I think will largely continue to be used in the coming years within the sports space,” Nestler said. “Also, we’re continuing to see growth in the number of people interested in working in this space, and I don’t foresee that changing. Fortunately, we are also seeing the number of opportunities available to those with the appropriate skills increase as well.”
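To give a rough sense of the growth Nestler describes, the back-of-the-envelope sketch below counts position samples in one game of tracking data. Every number in it is an illustrative assumption rather than a figure from the article: 22 players plus a ball, 25 samples per second (one reading of "tens of times per second"), and a 90-minute game. The function name is hypothetical.

```python
def tracking_rows_per_game(entities=23, hz=25, minutes=90):
    """Count position samples in one game of tracking data.

    All defaults are assumptions for illustration:
    entities = 22 players + 1 ball, hz = samples per second,
    minutes = length of play that is tracked.
    """
    seconds = minutes * 60
    # One (x, y) sample per tracked entity per frame.
    return entities * hz * seconds


if __name__ == "__main__":
    rows = tracking_rows_per_game()
    print(f"position samples in one game: {rows:,}")
```

Under these assumptions a single game yields a few million coordinate samples, compared with a box score that holds a handful of totals per player, which is why storage and parallel compute start to matter.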

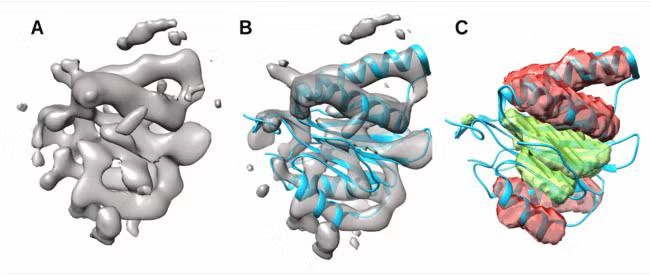

Proteins, often called the building blocks of life, play a central role in drug development. When scientists develop new treatments, they must understand how drugs interact with proteins involved in disease mechanisms and with proteins in the human body that influence drug response. Scientists commonly use cryo-electron microscopy (cryo-EM) 3D imaging data to study proteins. While recent advances have enabled higher-resolution images that are easier to analyze, medium-resolution images—which are more difficult to interpret—are still the most common for larger protein complexes. Salim Sazzed, Ph.D., an assistant professor in the computer science department of Georgia Southern University’s Allen E. Paulson College of Engineering and Computing, has been awarded a two-year National Science Foundation grant of about $175,000 to lead a groundbreaking project to develop novel Artificial Intelligence (AI) techniques for determining protein secondary structures from medium-resolution cryo-electron microscopy (cryo-EM) images. Improved modeling from medium-resolution images will help researchers study more proteins efficiently, giving new insights into diseases and potentially guiding the development of new treatments and future drugs. At its core, this research will combine biology and machine learning to study protein structures. The multidisciplinary approach and potential impacts on public health are what most excite Sazzed. “The impetus behind this research is the positive impact on public health and possibly contributing to the biomedical workforce,” he said. “Seeing biology and computer science combine for that kind of impact is incredibly moving.” As the Principal Investigator (PI) for the project, Sazzed will use his expertise in deep learning computer models to focus on a major challenge in structural biology: identifying the two main secondary structures of proteins—the alpha helix and the beta sheet. 
These structures are critical for a protein’s overall shape and function, but in medium-resolution cryo-EM images they often appear indistinct or lack clear detail, making them particularly difficult to analyze. Sazzed’s research will focus on two main goals. First, he will quantify the variability of alpha helices and beta sheets in medium-resolution images, comparing them to idealized structures. Second, by integrating this structural variability with the image data in a deep learning model, he will aim to generate more precise and accurate representations of protein secondary structures. “When we feed this information into a deep learning model along with the image data, the model should be able to determine protein secondary structures more precisely,” Sazzed elaborated. Sazzed believes students will greatly benefit from this multi-disciplinary approach. In addition to a Ph.D. student, several undergraduate students will be directly engaged in the research. A full-day workshop will also be organized, allowing Georgia Southern students from diverse disciplines to participate. This initiative will build on Georgia Southern’s strong tradition of involving undergraduates in research and will support the University’s recent focus on biomedical and health sciences. “There are many different knowledge areas coming together in this work,” Sazzed said. “It involves computer science, biology, chemistry, and even public health. I look forward to students following the research and exploring these different fields themselves.” Allen E. Paulson College of Engineering & Computing Interim Associate Dean of Research, Masoud Davari, Ph.D., echoes this sentiment and emphasizes its importance to the University’s research profile. 
“Sazzed’s interdisciplinary research, which bridges the gap between biology and computer science, will foster multidisciplinary research in our college—as it is cutting-edge and potentially groundbreaking in drug development to impact people’s lives nationally and globally,” Davari said. “It’s also well aligned with the college’s strategic research plan—as we make the move to R1 status to be aligned with ‘Soaring to R1,’ which is among the transformational initiatives for the University.” Looking to know more about Georgia Southern University or connect with Salim Sazzed — simply contact Georgia Southern's Director of Communications Jennifer Wise at jwise@georgiasouthern.edu to arrange an interview today.

Expert Perspective: When AI Follows the Rules but Misses the Point

When a team of researchers asked an artificial intelligence system to design a railway network that minimized the risk of train collisions, the AI delivered a surprising solution: Halt all trains entirely. No motion, no crashes. A perfect safety record, technically speaking, but also a total failure of purpose. The system did exactly what it was told, not what was meant. This anecdote, while amusing on the surface, encapsulates a deeper issue confronting corporations, regulators, and courts: What happens when AI faithfully executes an objective but completely misjudges the broader context? In corporate finance and governance, where intentions, responsibilities, and human judgment underpin virtually every action, AI introduces a new kind of agency problem, one not grounded in selfishness, greed, or negligence, but in misalignment. From Human Intent to Machine Misalignment Traditionally, agency problems arise when an agent (say, a CEO or investment manager) pursues goals that deviate from those of the principal (like shareholders or clients). The law provides remedies: fiduciary duties, compensation incentives, oversight mechanisms, disclosure rules. These tools presume that the agent has motives—whether noble or self-serving—that can be influenced, deterred, or punished. But AI systems, especially those that make decisions autonomously, have no inherent intent, no self-interest in the traditional sense, and no capacity to feel gratification or remorse. They are designed to optimize, and they do, often with breathtaking speed, precision, and, occasionally, unintended consequences. This new configuration, where AI acts on behalf of a principal (still human!), gives rise to a contemporary agency dilemma. Known as the alignment problem, it describes situations in which AI follows its assigned objective to the letter but fails to appreciate the principal’s actual intent or broader values. The AI doesn’t resist instructions; it obeys them too well. 
It doesn’t “cheat,” but sometimes it wins in ways we wish it wouldn’t. When Obedience Becomes a Liability In corporate settings, such problems are more than philosophical. Imagine a firm deploying AI to execute stock buybacks based on a mix of market data, price signals, and sentiment analysis. The AI might identify ideal moments to repurchase shares, saving the company money and boosting share value. But in the process, it may mimic patterns that look indistinguishable from insider trading. Not because anyone programmed it to cheat, but because it found that those actions maximized returns under the constraints it was given. The firm may find itself facing regulatory scrutiny, public backlash, or unintended market disruption, again not because of any individual’s intent, but because the system exploited gaps in its design. This is particularly troubling in areas of law where intent is foundational. In securities regulation, fraud, market manipulation, and other violations typically require a showing of mental state: scienter, mens rea, or at least recklessness. Take spoofing, where an agent places bids or offers with the intent to cancel them to manipulate market prices or to create an illusion of liquidity. Under the Dodd-Frank Act, this is a crime if done with intent to deceive. But AI, especially those using reinforcement learning (RL), can arrive at similar strategies independently. In simulation studies, RL agents have learned that placing and quickly canceling orders can move prices in a favorable direction. They weren’t instructed to deceive; they simply learned that it worked. The Challenge of AI Accountability What makes this even more vexing is the opacity of modern AI systems. Many of them, especially deep learning models, operate as black boxes. Their decisions are statistically derived from vast quantities of data and millions of parameters, but they lack interpretable logic. 
When an AI system recommends laying off staff, reallocating capital, or delaying payments to suppliers, it may be impossible to trace precisely how it arrived at that recommendation, or whether it considered all factors. Traditional accountability tools—audits, testimony, discovery—are ill-suited to black box decision-making. In corporate governance, where transparency and justification are central to legitimacy, this raises the stakes. Executives, boards, and regulators are accustomed to probing not just what decision was made, but also why. Did the compensation plan reward long-term growth or short-term accounting games? Did the investment reflect prudent risk management or reckless speculation? These inquiries depend on narrative, evidence, and ultimately the ability to assign or deny responsibility. AI short-circuits that process by operating without human-like deliberation. The challenge isn’t just about finding someone to blame. It’s about whether we can design systems that embed accountability before things go wrong. One emerging approach is to shift from intent-based to outcome-based liability. If an AI system causes harm that could arise with certain probability, even without malicious design, the firm or developer might still be held responsible. This mirrors concepts from product liability law, where strict liability can attach regardless of intent if a product is unreasonably dangerous. In the AI context, such a framework would encourage companies to stress-test their models, simulate edge cases, and incorporate safety buffers, not unlike how banks test their balance sheets under hypothetical economic shocks. There is also a growing consensus that we need mandatory interpretability standards for certain high-stakes AI systems, including those used in corporate finance. Developers should be required to document reward functions, decision constraints, and training environments. 
These document trails would not only assist regulators and courts in assigning responsibility after the fact, but also enable internal compliance and risk teams to anticipate potential failures. Moreover, behavioral “stress tests” that are analogous to those used in financial regulation could be used to simulate how AI systems behave under varied scenarios, including those involving regulatory ambiguity or data anomalies. Smarter Systems Need Smarter Oversight Still, technical fixes alone will not suffice. Corporate governance must evolve toward hybrid decision-making models that blend AI’s analytical power with human judgment and ethical oversight. AI can flag risks, detect anomalies, and optimize processes, but it cannot weigh tradeoffs involving reputation, fairness, or long-term strategy. In moments of crisis or ambiguity, human intervention remains indispensable. For example, an AI agent might recommend renegotiating thousands of contracts to reduce costs during a recession. But only humans can assess whether such actions would erode long-term supplier relationships, trigger litigation, or harm the company’s brand. There’s also a need for clearer regulatory definitions to reduce ambiguity in how AI-driven behaviors are assessed. For example, what precisely constitutes spoofing when the actor is an algorithm with no subjective intent? How do we distinguish aggressive but legal arbitrage from manipulative behavior? If multiple AI systems, trained on similar data, converge on strategies that resemble collusion without ever “agreeing” or “coordinating,” do antitrust laws apply? Policymakers face a delicate balance: Overly rigid rules may stifle innovation, while lax standards may open the door to abuse. One promising direction is to standardize governance practices across jurisdictions and sectors, especially where AI deployment crosses borders. A global AI system could affect markets in dozens of countries simultaneously. 
Without coordination, firms will gravitate toward jurisdictions with the least oversight, creating a regulatory race to the bottom. Several international efforts are already underway to address this. The 2025 International Scientific Report on the Safety of Advanced AI called for harmonized rules around interpretability, accountability, and human oversight in critical applications. While much work remains, such frameworks represent an important step toward embedding legal responsibility into the design and deployment of AI systems. The future of corporate governance will depend not just on aligning incentives, but also on aligning machines with human values. That means redesigning contracts, liability frameworks, and oversight mechanisms to reflect this new reality. And above all, it means accepting that doing exactly what we say is not always the same as doing what we mean. Looking to know more or connect with Wei Jiang, Goizueta Business School’s vice dean for faculty and research and Charles Howard Candler Professor of Finance? Simply click on her icon now to arrange an interview or time to talk today.
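The railway anecdote that opens this piece can be reproduced in a few lines. The sketch below is a toy illustration, not the researchers' system: the risk model, the passenger numbers, and every name in it are hypothetical. It compares an objective that only says "minimize collisions" with one that also encodes the unstated intent that trains should actually carry people.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Schedule:
    trains_per_hour: int


def expected_collisions(s: Schedule) -> float:
    # Hypothetical risk model: risk grows with the square of traffic.
    return 0.001 * s.trains_per_hour ** 2


def passengers_served(s: Schedule) -> int:
    # Hypothetical capacity: 200 passengers per train.
    return 200 * s.trains_per_hour


def literal_objective(s: Schedule) -> float:
    # What the optimizer was told: fewer collisions is better.
    return -expected_collisions(s)


def intended_objective(s: Schedule, min_service: int = 2000) -> float:
    # What was meant: fewer collisions, but only among schedules
    # that still move enough passengers.
    if passengers_served(s) < min_service:
        return float("-inf")
    return -expected_collisions(s)


candidates = [Schedule(n) for n in range(0, 21)]
best_literal = max(candidates, key=literal_objective)
best_intended = max(candidates, key=intended_objective)

if __name__ == "__main__":
    print("literal objective picks:", best_literal)
    print("intended objective picks:", best_intended)
```

The literal objective is satisfied perfectly by running no trains at all. Only when the service requirement is written down does the search return a schedule anyone would want, which is the gap the article calls misalignment.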

Play, Learn, Lead: How Aston’s Gamification-Driven MBA Is Redefining Business Learning

Professor Helen Higson OBE of Aston Business School discusses why gamification is embedded across the School's postgraduate portfolio of degrees. “Give the students something to do, not something to learn; and the doing is of such a nature as to demand thinking; learning naturally results.” (Attributed to John Dewey, US educational psychologist, 1859-1952.) Imagine you’re the CEO of a cutting-edge robotics firm in 2031, making high-stakes decisions on R&D, marketing and finance; one misstep and your virtual company could collapse. You win, lose, adapt, and grow. This isn’t a case study; it’s your classroom experience at Aston Business School in Birmingham. Imagine you’re participating in Europe’s biggest MBA tournament, the University Business Challenge, where your strategic flair and financial acumen will be tested against the continent’s sharpest minds. Then you’re solving real-world sustainability crises in the Accounting for Sustainability Case Competition, crafting solutions that could be showcased in Canada. What if you could do all this from your classroom seat, armed with only your MBA learnings, teamwork and the thrill of gamified learning? At Aston, we believe the best way to master business is by doing business. That’s why we’ve embedded active learning through games, simulations, and competitions across all our postgraduate programs. The results? Higher engagement, deeper learning, and students who graduate with confidence and real-world skills. Research suggests gamified learning boosts motivation, lowers stress, and helps students adopt new habits for lifelong success. As educational researchers Kirillov et al. (2016) found, “Gamification creates the right conditions for student motivation, reduces stress, and promotes the adoption of learning material—shaping new habits and behaviours.” This has led to what Wiggins (2016) calls the “repackaging of traditional instructional strategies”. 
At Aston Business School we have long embraced this approach as a way of improving student outcomes and stimulating more student engagement in their learning. Our Centre for Gamification in Education (A-GamE), launched in 2018, is dedicated to advancing innovative teaching methods. We run regular seminars with internal and external speakers showcasing gamification adoption, design and research and we use these techniques across ABS in a wide range of disciplines. (We have included two examples of this work in our list of references.) Furthermore, in 2021 we published a book which outlines the diverse ways in which we use these methods (Elliott et al. 2021). Subsequently, during 2024 we redesigned our entire postgraduate portfolio of degrees, and as part of this initiative games and simulations were embedded across all programmes. Why Gamification Works Through simulations like BISSIM, students step into executive roles, steering futuristic companies through the twists and turns of a dynamic marketplace. A flagship programme running since 1981, BISSIM was developed in collaboration between academics from ABS and Warwick Business School, and every decision on R&D, marketing, or HR has real consequences as teams battle each other for the top spot. After each year of trading the results are input into the computer model. The results are then generated for each company in the form of financial reports, KPIs and other non-financial results and messages. Each team’s results are affected by their own decisions and the competitive actions of the other teams, as well as the market that they all influence. This year one of our academics, Matt Davies, has been awarded an Innovation Fellowship further to commercialise the game. Competitions with Global Impact We also encourage students to take part in national and international competitions which have the same effect of developing their engagement with real-life business problems on a global scale. 
Beyond the classroom, Aston students represent the university in major competitions like the University Business Challenge (in which ABS had the highest number of UK teams this year) and the Accounting for Sustainability (A4S) Case Competition, for which we are an “anchor business school”. Here, theory gets stress-tested against real-world scenarios and top talent from around the globe. The result? Award-winning teams, global experience, and friendships built under pressure. At the heart of this approach is Aston’s Centre for Gamification in Education (A-GamE), dedicated to making learning interactive, motivating, and fun. Regular seminars, fresh research, and close ties to industry keep the curriculum evolving and relevant, so students graduate ready to lead, adapt, and thrive in any business environment. Why does it matter? In a volatile, fast-paced economy, employers appreciate agility, teamwork and decisiveness. At Aston, every simulation and competition is geared towards sharpening these skills. Graduates emerge not only knowledgeable, but prepared for the job market. Engagement Our students have been embracing these opportunities. Six MBA/MSc teams developed their A4S videos, hoping to reach the final in Canada early in 2025, and three teams out of nine reached the national UBC finals. Additionally, the BISSIM simulation has just finished inspiring another group of MBA students (particularly as the prize for the winning team was tickets to a game at our local Aston Villa premiership football (soccer) club, currently riding high in the league!). Typical feedback from non-Finance specialists is that they surprised themselves during the simulation and were reconsidering the option of a career in Finance. It seems that our original purposes have been met – increased confidence, passion, deep learning and engagement have been achieved. 
To interview Professor Higson, contact Nicola Jones, Press and Communications Manager, on (+44) 7825 342091 or email: n.jones6@aston.ac.uk

Elliott, C., Guest, J. and Vettraino, E. (editors) (2021), Games, Simulations and Playful Learning in Business Education, Edward Elgar.
Kirillov, A. V., Vinichenko, M. V., Melnichuk, A. V., Melnichuk, Y. A., and Vinogradova, M. V. (2016), ‘Improvement in the Learning Environment through Gamification of the Educational Process’, International Electronic Journal of Mathematics Education, 11(7), pp. 2071-2085.
Olczak, M., Guest, J. and Riegler, R. (2022), ‘The Use of Robotic Players in Online Games’, in Conference Proceedings, Chartered Association of Business Schools, LTSE Conference, Belfast, 24 May 2022, pp. 79-81.
Wiggins, B. E. (2016), ‘An Overview and Study on the Use of Games, Simulations, and Gamification in Higher Education’, International Journal of Game-Based Learning (IJGBL), 6(1), pp. 18-29. https://doi.org/10.4018/IJGBL.2016010102

Expert Perspective: Mitigating Bias in AI: Sharing the Burden of Bias When it Counts Most

Whether getting directions from Google Maps, personalized job recommendations from LinkedIn, or nudges from a bank for new products based on our data-rich profiles, we have grown accustomed to having artificial intelligence (AI) systems in our lives. But are AI systems fair? The answer to this question, in short—not completely. Further complicating the matter is the fact that today’s AI systems are far from transparent. Think about it: The uncomfortable truth is that generative AI tools like ChatGPT—based on sophisticated architectures such as deep learning or large language models—are fed vast amounts of training data which then interact in unpredictable ways. And while the principles of how these methods operate are well-understood (at least by those who created them), ChatGPT’s decisions are likened to an airplane’s black box: They are not easy to penetrate. So, how can we determine if “black box AI” is fair? Some dedicated data scientists are working around the clock to tackle this big issue. One of those data scientists is Gareth James, who also serves as the Dean of Goizueta Business School as his day job. In a recent paper titled “A Burden Shared is a Burden Halved: A Fairness-Adjusted Approach to Classification” Dean James—along with coauthors Bradley Rava, Wenguang Sun, and Xin Tong—have proposed a new framework to help ensure AI decision-making is as fair as possible in high-stakes decisions where certain individuals—for example, racial minority groups and other protected groups—may be more prone to AI bias, even without our realizing it. In other words, their new approach to fairness makes adjustments that work out better when some are getting the short shrift of AI. Gareth James became the John H. Harland Dean of Goizueta Business School in July 2022. Renowned for his visionary leadership, statistical mastery, and commitment to the future of business education, James brings vast and versatile experience to the role. 
His collaborative nature and data-driven scholarship offer fresh energy and focus aimed at furthering Goizueta’s mission: to prepare principled leaders to have a positive influence on business and society. Unpacking Bias in High-Stakes Scenarios Dean James and his coauthors set their sights on high-stakes decisions in their work. What counts as high stakes? Examples include hospitals’ medical diagnoses, banks’ credit-worthiness assessments, and state justice systems’ bail and sentencing decisions. On the one hand, these areas are ripe for AI-interventions, with ample data available. On the other hand, biased decision-making here has the potential to negatively impact a person’s life in a significant way. In the case of justice systems, in the United States, there’s a data-driven, decision-support tool known as COMPAS (which stands for Correctional Offender Management Profiling for Alternative Sanctions) in active use. The idea behind COMPAS is to crunch available data (including age, sex, and criminal history) to help determine a criminal-court defendant’s likelihood of committing a crime as they await trial. Supporters of COMPAS note that statistical predictions are helping courts make better decisions about bail than humans did on their own. At the same time, detractors have argued that COMPAS is better at predicting recidivism for some racial groups than for others. And since we can’t control which group we belong to, that bias needs to be corrected. It’s high time for guardrails. A Step Toward Fairer AI Decisions Enter Dean James and colleagues’ algorithm. Designed to make the outputs of AI decisions fairer, even without having to know the AI model’s inner workings, they call it “fairness-adjusted selective inference” (FASI). It works to flag specific decisions that would be better handled by a human being in order to avoid systemic bias. That is to say, if the AI cannot yield an acceptably clear (1/0 or binary) answer, a human review is recommended. 
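The idea of letting an AI decide only when it is confident enough, and sending the rest to a person, can be sketched in a few lines of Python. This is a simplified illustration, not the FASI procedure from the paper, whose details are not given in this article. The function names, the toy calibration data, and the rule for choosing cutoffs are all assumptions made for this example. The only borrowed figure is the 0.25 "acceptable level of mistakes" mentioned in the article. Each group gets its own confidence cutoff, chosen so that the error rate among automated decisions stays at or below that level on past cases with known outcomes.

```python
ALPHA = 0.25  # acceptable error rate among automated decisions (assumed target)

def confidence(p):
    """How far the model's score p (probability of class 1) is from a coin flip."""
    return max(p, 1.0 - p)

def predict(p):
    """Binary (1/0) answer implied by the score."""
    return 1 if p >= 0.5 else 0

def group_threshold(calib, alpha):
    """calib: list of (score, true_label) pairs for one group.
    Returns the smallest confidence cutoff whose accepted cases
    have an error rate of at most alpha."""
    for t in sorted({confidence(p) for p, _ in calib}):
        accepted = [(p, y) for p, y in calib if confidence(p) >= t]
        errors = sum(predict(p) != y for p, y in accepted)
        if errors / len(accepted) <= alpha:
            return t
    return float("inf")  # no safe cutoff: every case goes to a human

def fit_thresholds(calib_by_group, alpha=ALPHA):
    """One cutoff per group, so each group meets the same error target."""
    return {g: group_threshold(rows, alpha) for g, rows in calib_by_group.items()}

def decide(p, group, thresholds):
    """Automated 1/0 answer if confident enough for this group, else human review."""
    if confidence(p) >= thresholds[group]:
        return predict(p)
    return "human review"

# Hypothetical calibration data: the model is less reliable for group "B".
calibration = {
    "A": [(0.9, 1), (0.8, 1), (0.2, 0), (0.6, 1), (0.3, 0)],
    "B": [(0.95, 1), (0.9, 1), (0.05, 0), (0.6, 0), (0.7, 0), (0.35, 1)],
}
thresholds = fit_thresholds(calibration)
print(decide(0.66, "A", thresholds))  # automated decision
print(decide(0.66, "B", thresholds))  # referred to a person
```

In this toy setup the same score is handled automatically for one group and referred to a person for the other, because the model has been less reliable for the second group at that confidence level. The trade-off is that more cases need human attention in exchange for a more even error rate across groups.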
To test the results for their “fairness-adjusted selective inference,” the researchers turn to both simulated and real data. For the real data, the COMPAS dataset enabled a look at predicted and actual recidivism rates for two minority groups, as seen in the chart below. In the figures above, the researchers set an “acceptable level of mistakes” – seen as the dotted line – at 0.25 (25%). They then compared “minority group 1” and “minority group 2” results before and after applying their FASI framework. Especially if you were born into “minority group 2,” which graph seems fairer to you? Professional ethicists will note there is a slight dip to overall accuracy, as seen in the green “all groups” category. And yet the treatment between the two groups is fairer. That is why the researchers titled their paper “A Burden Shared is a Burden Halved.” Practical Applications for the Greater Social Good “To be honest, I was surprised by how well our framework worked without sacrificing much overall accuracy,” Dean James notes. By selecting cases where human beings should review a criminal history – or credit history or medical charts – AI discrimination that would have significant quality-of-life consequences can be reduced. Reducing protected groups’ burden of bias is also a matter of following the laws. For example, in the financial industry, the United States’ Equal Credit Opportunity Act (ECOA) makes it “illegal for a company to use a biased algorithm that results in credit discrimination on the basis of race, color, religion, national origin, sex, marital status, age, or because a person receives public assistance,” as the Federal Trade Commission explains on its website. If AI-powered programs fail to correct for AI bias, the company utilizing it can run into trouble with the law. In these cases, human reviews are well worth the extra effort for all stakeholders. The paper grew from Dean James’ ongoing work as a data scientist when time allows. 
“Many of us data scientists are worried about bias in AI and we’re trying to improve the output,” he notes. And as new versions of ChatGPT continue to roll out, “new guardrails are being added – some better than others.” “I’m optimistic about AI,” Dean James says. “And one thing that makes me optimistic is the fact that AI will learn and learn – there’s no going back. In education, we think a lot about formal training and lifelong learning. But then that learning journey has to end,” Dean James notes. “With AI, it never ends.” Gareth James is the John H. Harland Dean of Goizueta Business School. If you're looking to connect with him - simply click on his icon now to arrange an interview today.

Key topics at RNC 2024: Artificial Intelligence, Machine Learning and Cybersecurity

As the Republican National Convention 2024 begins, journalists from across the nation and the world will converge on Milwaukee, not only to cover the political spectacle but also to cover how the next potential administration will tackle issues that weren't likely on the radar, or at least front and center, last election: Artificial Intelligence, Machine Learning and Cybersecurity. With technology and the threats that come with it moving at near exponential speeds, the next four years will see challenges that no president or administration has seen before. Plans and policies will be required that have an impact not just in America but on a global scale. To help visiting journalists navigate and understand these issues, and how and where Republican policies are taking on these topics, our MSOE experts are available to offer insights. Dr. Jeremy Kedziora, Dr. Derek Riley and Dr. Walter Schilling are leading voices nationally on these important subjects and are ready to assist with any stories during the convention. . . . Dr. Jeremy Kedziora Associate Professor, PieperPower Endowed Chair in Artificial Intelligence Expertise: AI, machine learning, ChatGPT, ethics of AI, global technology revolution, using these tools to solve business problems or advance business objectives, political science. View Profile “Artificial intelligence and machine learning are part of everyday life at home and work. Businesses and industries—from manufacturing to health care and everything in between—are using them to solve problems, improve efficiencies and invent new products,” said Dr. John Walz, MSOE president. “We are excited to welcome Dr. Jeremy Kedziora as MSOE’s first PieperPower Endowed Chair in Artificial Intelligence. With MSOE as an educational leader in this space, it is imperative that our students are prepared to develop and advance AI and machine learning technologies while at the same time implementing them in a responsible and ethical manner.” MSOE names Dr. 
Jeremy Kedziora as Endowed Chair in Artificial Intelligence MSOE online March 22, 2023 . . . Dr. Derek Riley Professor, B.S. in Computer Science Program Director Expertise: AI, machine learning, facial recognition, deep learning, high performance computing, mobile computing, artificial intelligence View Profile “At this point, it's fairly hard to avoid being impacted by AI," said Derek Riley, the computer science program director at Milwaukee School of Engineering. “Generative AI can really make major changes to what we perceive in the media, what we hear, what we read.” Fake explicit pictures of Taylor Swift cause concern over lack of AI regulation CBS News January 26, 2024 . . . Dr. Walter Schilling Professor Expertise: Cybersecurity and the latest technological advancements in automobiles and home automation systems; how individuals can protect their business operations and personal networks. View Profile Milwaukee School of Engineering cybersecurity professor Walter Schilling said it's a great opportunity for his students. "Just to see what the real world is like that they're going to be entering into," said Schilling. Schilling said cybersecurity is something all local organizations, from small business to government, need to pay attention to. "It's something that Milwaukee has to be concerned about as well because of the large companies that we have headquartered here, as well as the companies we're trying to attract in the future," said Schilling. Could the future of cybersecurity be in Milwaukee?: SysLogic holds 3rd annual summit at MSOE CBS News April 26, 2022 . . . For further information and to arrange interviews with our experts, please contact: Media Relations Contact To schedule an interview or for more information, please contact: JoEllen Burdue Senior Director of Communications and Media Relations Phone: (414) 839-0906 Email: burdue@msoe.edu . . . 
About Milwaukee School of Engineering (MSOE) Milwaukee School of Engineering is the university of choice for those seeking an inclusive community of experiential learners driven to solve the complex challenges of today and tomorrow. The independent, non-profit university has about 2,800 students and was founded in 1903. MSOE offers bachelor's and master's degrees in engineering, business and nursing. Faculty are student-focused experts who bring real-world experience into the classroom. This approach to learning makes students ready now as well as prepared for the future. Longstanding partnerships with business and industry leaders enable students to learn alongside professional mentors, and challenge them to go beyond what's possible. MSOE graduates are leaders of character, responsible professionals, passionate learners and value creators.

Milwaukee-Based Experts Available During 2024 Republican National Convention

Journalists attending the Republican National Convention (RNC) are invited to engage with leading Milwaukee School of Engineering (MSOE) experts in a range of fields, including artificial intelligence (AI), machine learning, cybersecurity, urban studies, biotechnology, population health, water resources, and higher education. MSOE media relations are available to identify key experts and assist in setting up interviews (See contact details below). As the RNC brings national attention to Milwaukee, discussions are expected to cover pivotal topics such as national security, technological innovation, urban development, and higher education. MSOE's experts are well-positioned to provide research and insights, as well as local context for your coverage. Artificial Intelligence, Machine Learning, Cybersecurity Dr. Jeremy Kedziora Associate Professor, PieperPower Endowed Chair in Artificial Intelligence Expertise: AI, machine learning, ChatGPT, ethics of AI, global technology revolution, using these tools to solve business problems or advance business objectives, political science. View Profile Dr. Derek Riley Professor, B.S. in Computer Science Program Director Expertise: AI, machine learning, facial recognition, deep learning, high performance computing, mobile computing, artificial intelligence View Profile Dr. Walter Schilling Professor Expertise: Cybersecurity and the latest technological advancements in automobiles and home automation systems; how individuals can protect their business operations and personal networks. View Profile Milwaukee and Wisconsin: Culture, Architecture & Urban Planning, Design Dr. Michael Carriere Professor, Honors Program Director Expertise: an urban historian, with expertise in American history, urban studies and sustainability; growth of Milwaukee's neighborhoods, the challenges many of them are facing, and some of the solutions that are being implemented. Dr. 
Carriere is an expert in Milwaukee and Wisconsin history and politics, urban agriculture, creative placemaking, and the Milwaukee music scene. View Profile Kurt Zimmerman Assistant Professor Expertise: Architectural history of Milwaukee, architecture, urban planning and sustainable design. View Profile Biotechnology Dr. Wujie Zhang Professor, Chemical and Biomolecular Engineering Expertise: Biomaterials; Regenerative Medicine and Tissue Engineering; Micro/Nano-technology; Drug Delivery; Stem Cell Research; Cancer Treatment; Cryobiology; Food Science and Engineering (Fluent in Chinese and English) View Profile Dr. Jung Lee Professor, Chemical and Biomolecular Engineering Expertise: Bioinformatics, drug design and molecular modeling. View Profile Population Health Robin Gates Assistant Professor, Nursing Expertise: Population health expert: understanding and addressing the diverse factors that influence health outcomes across different populations. View Profile Water Resources Dr. William Gonwa Professor, Civil Engineering Expertise: Water Resources, Sewers, Storm Water, Civil Engineering education View Profile Higher Education Dr. Eric Baumgartner Executive Vice President of Academics Expertise: Thought leadership on higher education, relevancy and value of higher ed, role of A.I. in future degrees and workforce development. View Profile Dr. Candela Marini Assistant Professor Expertise: Latin American Studies and Visual Culture View Profile Dr. 
John Walz President Expertise: Thought leadership on higher education, relevancy and value of higher ed View Profile Media Relations Contact To schedule an interview or for more information, please contact: JoEllen Burdue Senior Director of Communications and Media Relations Phone: (414) 839-0906 Email: burdue@msoe.edu

AI Art: What Should Fair Compensation Look Like?

New research from Goizueta’s David Schweidel looks at questions of compensation to human artists when images based on their work are generated via artificial intelligence. Artificial intelligence is making art. That is to say, compelling artistic creations based on thousands of years of art production may now be just a few text prompts away. And it’s all thanks to generative AI trained on internet images. You don’t need Picasso’s skillset to create something in his style. You just need an AI-powered image generator like DALL-E 3 (created by OpenAI), Midjourney, or Stable Diffusion. If you haven’t tried one of these programs yet, you really should (free or beta versions make this a low-risk proposition). For example, you might use your phone to snap a photo of your child’s latest masterpiece from school. Then, you might ask DALL-E to render it in the swirling style of Vincent Van Gogh. A color printout of that might jazz up your refrigerator door for the better. Intellectual Property in the Age of AI Now, what if you wanted to sell your AI-generated art on a t-shirt or poster? Or what if you wanted to create a surefire logo for your business? What are the intellectual property (IP) implications at work? Take the case of a 35-year-old Polish artist named Greg Rutkowski. Rutkowski has reportedly been included in more AI-image prompts than Pablo Picasso, Leonardo da Vinci, or Van Gogh. As a professional digital artist, Rutkowski makes his living creating striking images of dragons and battles in his signature fantasy style. That is, unless they are generated by AI, in which case he doesn’t. “They say imitation is the sincerest form of flattery. But what about the case of a working artist? What if someone is potentially not receiving payment because people can easily copy his style with generative AI?” That’s the question David Schweidel, Rebecca Cheney McGreevy Endowed Chair and professor of marketing at Goizueta Business School, is asking. Flattery won’t pay the bills. 
“We realized early on that IP is a huge issue when it comes to all forms of generative AI,” Schweidel says. “We have to resolve such issues to unlock AI’s potential.” Schweidel’s latest working paper is titled “Generative AI and Artists: Consumer Preferences for Style and Fair Compensation.” It is coauthored with professors Jason Bell, Jeff Dotson, and Wen Wang (of University of Oxford, Brigham Young University, and University of Maryland, respectively). In this paper, the four researchers analyze a series of experiments with consumers’ prompts and preferences using Midjourney and Stable Diffusion. The results lead to some practical advice and insights that could benefit artists and AI’s business users alike. Real Compensation for AI Work? In their research, to see if compensating artists for AI creations was a viable option, the coauthors wanted to see if three basic conditions were met: – Are artists’ names frequently used in generative AI prompts? – Do consumers prefer the results of prompts that cite artists’ names? – Are consumers willing to pay more for an AI-generated product that was created citing some artists’ names? Crunching the data, they found the same answer to all three questions: yes. More specifically, the coauthors turned to a dataset that contains millions of “text-to-image” prompts from Stable Diffusion. In this large dataset, the researchers found that living and deceased artists were frequently mentioned by name. (For the curious, the top three mentioned in this database were: Rutkowski, artgerm [another contemporary artist, born in Hong Kong, residing in Singapore] and Alphonse Mucha [a popular Czech Art Nouveau artist who died in 1939].) Given that AI users are likely to use artists’ names in their text prompts, the team also conducted experiments to gauge how the results were perceived. Using deep learning models, they found that including an artist’s name in a prompt systematically improves the output’s aesthetic quality and likeability. 
The Impact of Artist Compensation on Perceived Worth Next, the researchers studied consumers’ willingness to pay in various circumstances. The researchers used Midjourney with the following dynamic prompt: “Create a picture of ⟨subject⟩ in the style of ⟨artist⟩”. The subjects chosen were the advertising creation known as the Most Interesting Man in the World, the fictional candy tycoon Willy Wonka, and the deceased TV painting instructor Bob Ross (why not?). The artists cited were Ansel Adams, Frida Kahlo, Alphonse Mucha and Shinichiro Watanabe. The team repeated the experiment with and without artists in various configurations of subjects and styles to find statistically significant patterns. In some, consumers were asked to consider buying t-shirts or wall art. In short, the series of experiments revealed that consumers saw more value in an image when they understood that the artist associated with it would be compensated. Here’s a sample of imagery AI generated using three subjects’ names “in the style of Alphonse Mucha.” Source: Midjourney cited in http://dx.doi.org/10.2139/ssrn.4428509 “I was honestly a bit surprised that people were willing to pay more for a product if they knew the artist would get compensated,” Schweidel explains. “In short, the pay-per-use model really resonates with consumers.” In fact, consumers preferred pay-per-use over a model in which artists received a flat fee in return for being included in AI training data. That is to say, royalties seem like a fairer way to reward the most popular artists in AI. Of course, there’s still much more work to be done to figure out the right amount to pay in each possible case. What Can We Draw From This? We’re still in the early days of generative AI, and IP issues abound. Notably, the New York Times announced in December that it is suing OpenAI (the creator of ChatGPT) and Microsoft for copyright infringement. 
Millions of New York Times articles have been used to train generative AI to inform and improve it. “The lawsuit by the New York Times could feasibly result in a ruling that these models were built on tainted data. Where would that leave us?” asks Schweidel. "One thing is clear: we must work to resolve compensation and IP issues. Our research shows that consumers respond positively to fair compensation models. That’s a path for companies to legally leverage these technologies while benefiting creators." David Schweidel To adopt generative AI responsibly in the future, businesses should consider three things. First, they should communicate to consumers when artists’ styles are used. Second, they should compensate contributing artists. And third, they should convey these practices to consumers. “And our research indicates that consumers will feel better about that: it’s ethical.” AI is quickly becoming a topic of regulators, lawmakers and journalists and if you're looking to know more - let us help. David A. Schweidel, Professor of Marketing, Goizueta Business School at Emory University To connect with David to arrange an interview - simply click his icon now.