
New book from Aston University academic shows that Christmas tasks mostly fall on women

New book by Dr Emily Christopher shows differences in how household tasks are divided by men and women.
Book highlights that women tend to buy the Christmas presents and send cards.
Men often see women as being more thoughtful or having better knowledge of what people would like.

A new book from Aston University’s Dr Emily Christopher reveals that when it comes to sending Christmas cards and buying Christmas presents, women are still mostly doing the work, as they are perceived to have better knowledge of what people would like.

Dr Emily Christopher, a lecturer in sociology and policy at Aston School of Law and Social Sciences, has recently published her book Couples at Work: Negotiating Paid Employment, Housework and Childcare, which looks at how household tasks are divided by men and women and the reasons behind these divisions.

The data for the book was collated over an eight-year period, with couples interviewed twice to provide a robust set of results. It looks at how different-sex parent couples combined paid work, housework and childcare.

The research revealed how gender norms continue to shape how certain daily household jobs are divided. Women were more likely than men to clean the house (especially bathrooms), wash clothes and put clothes away. Men still tend to do tasks like mowing the lawn and DIY, but are now also more likely to do the cooking and the grocery shopping. The research shows that the key to understanding how household tasks are divided lies in the meaning they hold for partners.

With the festive season upon us, the book reveals that women are largely responsible for buying Christmas presents and sending cards, with 100% of those taking part in the research saying that women mostly carried out these tasks. This also included buying for the male partner's relatives.
In instances where men had a 'helping' role in these tasks, this included being involved in the discussion or consulting on the choice of presents, especially for children; only a small minority bought presents for their own family. The data revealed that where women didn't choose and buy presents for their partner's family, they were still involved in reminding their partners that this needed to be done or advising on the choice of gifts, showing that women were still taking on the mental load of planning for the festive season.

The book reveals that when men were questioned about why they didn't get involved in present buying, they drew on gender norms. Women were often described by the men as being more thoughtful or having better knowledge of what people would like. Men often described how family members wouldn’t receive presents at all if it relied on them. Although for the women much of the gift giving and organising represented love and affection, and many found it enjoyable, many still saw it as work and said they would like their partners to be more involved.

Dr Christopher said: “This book takes an in-depth look at the way in which everyday roles around the household are divided between men and women.

“The research shows that over a period of eight years fathers increased their role in childcare tasks, but this did not always extend to housework.

“The pandemic was an opportunity to change how couples share housework, but women were still more likely to carry out tasks like cleaning, washing clothes and putting clothes away, and overwhelmingly remained responsible for the mental orchestration of family work.”

With OpenAI’s latest release, GPT-5.2, AI has crossed an important threshold in performance on professional knowledge-work benchmarks. Peter Evans, Co-Founder & CEO of ExpertFile, outlines how these technologies will fundamentally improve research communications and shares tips and prompts for PR pros. OpenAI has just launched GPT-5.2, describing it as its most capable AI model yet for professional knowledge work — with significantly improved accuracy on tasks like creating spreadsheets, building presentations, interpreting images, and handling complex multistep workflows. And based on our internal testing, we're really impressed. For communications professionals in higher education, non-profits, and R&D-focused industries, this isn’t just another tech upgrade — it’s a meaningful step forward in addressing the “research translation gap” that can slow storytelling and media outreach. According to OpenAI, GPT-5.2 represents measurable gains on benchmarks designed to mirror real work tasks. In many evaluations, it matches or exceeds the performance of human professionals. Also, before you hit reply with “Actually, the best model is…” — yes, we know. ChatGPT-5.2 isn’t the only game in town, and it’s definitely not the only tool we use. Our ExpertFile platform uses AI throughout, and I personally bounce between Claude 4.5, Gemini, Perplexity, NotebookLM, and more specialized models depending on the job to be done. LLM performance right now is a full-contact horserace — today’s winner can be tomorrow’s “remember when,” so we’re not trying to boil the ocean with endless comparisons. We’re spotlighting GPT-5.2 because it marks a meaningful step forward in the exact areas research comms teams care about: reliability, long-document work, multi-step tasks, and interpreting visuals and data. Most importantly, we want this info in your hands because a surprising number of comms pros we meet still carry real fear about AI — and long term, that’s not a good thing. 
Used responsibly, these tools can help you translate research faster, find stronger story angles, and ship more high-quality work without burning out.

When "Too Much" AI Power Might Be Exactly What You Need

AI expert Allie K. Miller's candid but positive review of an early testing version of ChatGPT-5.2 highlights what she sees as drawbacks for casual users: "outputs that are too long, too structured, and too exhaustive." She goes on to say that in her tests, ChatGPT-5.2 "stays with a line of thought longer and pushes into edge cases instead of skating on the surface."

Fair enough. Miller makes good points. However, for communications professionals, these so-called "downsides" for casual users are precisely the capabilities we need. When you're assessing complex research and developing strategic messaging for a variety of important audiences, you want an AI that fits Miller's observation that GPT-5.2 feels like "AI as a serious analyst" rather than "a friendly companion." That's not a critique of our world—it's a job description for comms pros working in sectors like higher education and healthcare. Deep research tools that refuse to take shortcuts are exactly what research communicators need.

So let's talk more specifically about how comms pros can think about these new capabilities:

1. AI is Your New Speed-Reading Superpower for Research

GPT-5.2's stronger long-document handling means you can upload an entire NIH grant, a full clinical trial protocol, or a complex environmental impact study and ask the model to highlight where key insights, like an unexpected finding, are discussed. It can do this in a fraction of the time it would take a human reader. This isn’t about being lazy. It’s about using AI to assemble the tedious background information you need to craft compelling stories while other teams are still parsing dense text manually.

2. The Chart Whisperer You’ve Been Waiting For

We’ve all been there — squinting at a graph of scientific data that looks like abstract art, waiting for the lead researcher to clarify what those error bars actually mean. Recent improvements in how GPT-5.2 handles scientific figures and charts show stronger performance on multimodal reasoning tasks, indicating a better ability to interpret and describe visual information like graphs and diagrams. With these capabilities, you can unlock the data behind visuals and turn it into narrative elements that resonate with audiences.

3. A Connection Machine That Finds Stories Where Others See Statistics

Great science communication isn’t about dumbing things down — it’s about building bridges between technical ideas and the broader public. GPT-5.2 shows notable improvements in abstract reasoning compared with earlier versions, based on internal evaluations on academic reasoning benchmarks. For example, teams working on novel materials science or emerging health technologies can use this reasoning capability to highlight connections between technical results and real-world impact — something that previously required hours of interpretive work. These gains help the AI spot patterns and relationships that can form the basis of compelling storytelling.

4. Accuracy That Gives You More Peace of Mind...When Coupled With Human Oversight

Let’s address the elephant in the room: AI hallucinations. You’ve probably heard the horror stories — press releases that cited a study that didn’t exist, or a “quote” that was never said by an expert. According to OpenAI, GPT-5.2 has reduced error rates by a substantial margin compared with its predecessor. Even with all these improvements, human review with your experts and careful editing remain essential, especially for anything that will be published or shared externally.

5. The Speed Factor: When “Urgent” Actually Means Urgent

With the speed of media today, being second often means being irrelevant. GPT-5.2’s performance on workflow-oriented evaluations suggests it can synthesize information far more quickly than manual review, freeing up a lot more time for strategic work. Deeper reasoning and longer contexts — the kinds of tasks that matter most in research translation — still take more processing time, though costs continue to improve. Savvy communications teams will adopt a tiered approach: using faster AI models for simple tasks such as social posts and routine responses, and reasoning-optimized settings for deep research.

Your Action Plan: The GPT-5.2 Playbook for Comms Pros

Here’s a tactical checklist to help your team capitalize on these advances.

#1 Select the Right AI Model for the Job: Lower Time and Costs
• Use fast, general configurations for routine content
• Use reasoning-optimized configurations for complex synthesis and deep document understanding
• Use higher-accuracy configurations for high-stakes projects

#2 Find Hidden Ideas Beyond the Abstract: Deeper Reasoning Models Do the Heavy Work
• Upload complete PDFs — not just the 2-page summary you were given
• Use deeper reasoning configurations to let the model work through the material

Try these prompts in ChatGPT-5.2:
“What exactly did the researchers say about this unexpected discovery that would be of interest to my <target audience>? Provide quotes and page references where possible.”
“Identify and explain the research methodology used in this study, with references to specific sections.”
“Identify where the authors discuss limitations of the study.”
“Explain how this research may lead to further studies or real-world benefits, in terms relatable to a general audience.”

#3 Unlock Your Story
Leverage improvements in pattern recognition and reasoning.
Try these prompts:
“Using abstract reasoning, find three unexpected analogies that explain this complex concept to a general audience.”
“What questions could the researchers answer in an interview that would help us develop richer story angles?”

#4 Change the Way You Write Captions
Take advantage of the way ChatGPT-5.2 processes and reasons about images, charts, diagrams, and other visuals far more effectively. Try these prompts:
Clinical Trial Graphs: “Analyze this uploaded trial results graph <upload image>. Identify key trends and comparisons to controls, then draft a 150-word donor summary with plain-language explanations and suggested captions suitable for donor communications.”
Medical Diagrams: “Interpret these uploaded images. Extract diagnostic insights, highlight innovations, and generate a patient-friendly explainer: bullet points plus one visual caption.”

A Word of Caution: Keep Experts in the Loop to Verify Information

Even with improved reliability, outputs should be treated as drafts. If your team does not yet have formal AI use policies, it's time to get started, because governance will be critical as AI use scales in 2026 and beyond. A trust-but-verify policy with experts treats AI as a co-pilot — helpful for heavy lifting — while humans remain accountable for approval and publication.

The Importance of Humans (aka The Good News)

Remember: the future of research communication isn’t about AI taking over — it’s about AI empowering us to do the strategic, human work that machines cannot. That includes:
• Building relationships across your institution
• Engaging researchers in storytelling
• Discovering narrative opportunities
• Turning discoveries into compelling narratives that influence audiences

With improvements in speed, reasoning, and reliability, the question isn’t whether AI can help — it’s what research stories you’ll uncover next to shape public understanding and impact.
FAQ

How is AI changing expectations for accuracy in research and institutional communications?
AI is shifting expectations from “fast output” to defensible accuracy. Better reasoning means fewer errors in research summaries, policy briefs, and expert content—especially when you’re working from long PDFs, complex methods, or dense results. The new baseline is: clear claims, traceable sources, and human review before publishing.
⸻
Why does deeper AI reasoning matter for communications teams working with experts and research content?
Comms teams translate multi-disciplinary research into messaging that must withstand scrutiny. Deeper reasoning helps AI connect findings to real-world relevance, flag uncertainty, and maintain nuance instead of flattening meaning. The result is work that’s easier to defend with media, leadership, donors, and the public—when paired with expert verification.
⸻
When should communications professionals use advanced AI instead of lightweight AI tools?
Use lightweight tools for brainstorming, social drafts, headlines, and quick rewrites. Use advanced, reasoning-optimized AI for high-stakes deliverables: executive briefings, research positioning, policy-sensitive messaging, media statements, and anything where a mistake could create reputational, compliance, or scientific credibility risk. Treat advanced AI as your “analyst,” not your autopilot.
⸻
How can media relations teams use AI to find stronger story angles beyond the abstract?
AI can scan full papers, grants, protocols, and appendices to surface where the real story lives: unexpected findings, practical implications, limitations, and unanswered questions that prompt great interviews. Ask it to map angles by audience (public, policy, donors, clinicians) and to point to the exact sections that support each angle.
⸻
How should higher-ed comms teams use AI without breaking embargoes or media timing?
AI can speed prep work—backgrounders, Q&A, lay summaries, caption drafts—before the embargo lifts. The rule is simple: treat embargoed material like any sensitive document. Use approved tools, restrict sharing, and avoid pasting embargoed text into unapproved systems. Use AI to build assets early, then finalize them after approval at release time.
⸻
What’s the best way to keep faculty “in the loop” while still moving fast with AI?
Use AI to produce review-friendly drafts that reduce the load on researchers: short summaries, suggested quotes clearly marked as drafts, and a checklist of claims needing verification (numbers, methods, limitations). Then route them to the expert with specific questions, not a wall of text. This keeps approvals faster while protecting scientific accuracy and trust.
⸻
How should teams handle charts, figures, and visual data in research communications?
AI can turn “chart confusion” into narrative—if you prompt for precision. Ask it to identify trends, group comparisons, and what the figure does not show (limitations, missing context). Then verify with the researcher, especially anything involving significance, controls, effect size, or causality. Use the output to write captions that are accurate and accessible.
⸻
Do we need an AI use policy in comms and media relations—and what should it include?
Yes—because adoption scales faster than risk awareness. A practical policy should define: approved tools, what data is restricted, required human review steps, standards for citing sources/page references, rules for drafting quotes, and escalation paths for sensitive topics (health, legal, crisis). Clear guardrails reduce fear and help avoid reputational mistakes.

If you’re using AI to move faster on research translation, the next bottleneck for many PR and comms pros is usually the same one: making your experts more discoverable in generative search, on your website, and in other media.
ExpertFile helps media relations and digital teams organize their expert content by topics, keep detailed profiles current, and respond faster to source requests—so you can boost your AI citations and land more coverage with less work. For more information visit us at www.expertfile.com

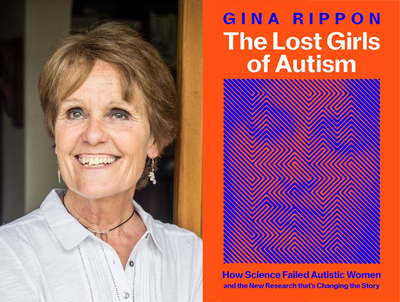

Gina Rippon, professor emeritus of cognitive neuroimaging at Aston University, has won an award for her book, The Lost Girls of Autism.
The book won the 2025 British Psychological Society Popular Science Award.
It explores the emerging science of female autism, and examines why it has been systematically ignored and misunderstood for so long.

The Lost Girls of Autism, the latest book from Gina Rippon, professor emeritus of cognitive neuroimaging at Aston University Institute of Health and Neurodevelopment (IHN), has won the 2025 British Psychological Society (BPS) Popular Science Award. The annual BPS Book Awards recognise exceptional published works in the field of psychology. There are four categories: popular science, textbook, academic monograph and practitioner text.

With the subtitle ‘How Science Failed Autistic Women and the New Research that’s Changing the Story’, The Lost Girls of Autism explores the emerging science of female autism, and examines why it has been systematically ignored and misunderstood for so long. Historically, clinicians believed that autism was a male condition, and simply did not look for it in girls and women. This has meant that autistic girls visiting a doctor have been misdiagnosed with anxiety, depression or personality disorders, or missed altogether. Many women only discover they have the condition when they are much older.

Professor Rippon said: “It's such a pleasure and an honour to receive this award from the BPS. It’s obviously flattering to join the great company of previous winners, but I’m also extremely grateful for the attention drawn to the issues raised in the book.

“Over many decades, due to autism’s ‘male spotlight’ problem, autistic girls and women have been overlooked, deprived of the help they needed, and even denied access to the very research studies that could widen our understanding of autism. This book tells the stories of these girls and women, and I’m thrilled to accept this prize on their behalf.”

Farm but no fowl: How Florida aquaculture is growing the economy

Florida’s thriving aquaculture industry is a vital part of the state’s economy, generating more than $165 million in sales annually and supporting jobs across rural and coastal communities. Recognized as agriculture by the Florida Legislature in 1993, aquaculture contributes to food security, environmental sustainability and economic resilience.

“Just like terrestrial, land-based agriculture, aquaculture is the process of growing or raising a product,” said Shirley Baker, UF/IFAS professor of aquaculture and associate director of the School of Forest, Fisheries and Geomatics Sciences. “The people who do the work consider themselves farmers. Their products are simply plants and animals grown or raised underwater.”

Overseen by the Florida Department of Agriculture & Consumer Services (FDACS), the industry includes an estimated 1,500 varieties of food fish, bait fish, mollusks, aquatic plants, alligators, turtles, crustaceans, amphibians, caviar and ornamental fish. With proper regulatory support, aquaculture can continue to be a driving force in Florida’s economy and environmental stewardship.

The hallmark of Florida aquaculture is ornamental, or tropical, fish: the saltwater and freshwater species bred for aquariums. In 2023, the sector generated more than $57 million, making the state the country’s top pet fish producer. In fact, 95% of ornamental fish in the United States come from the Sunshine State.

About 90% of Florida’s ornamental fish are freshwater varieties. Farmers primarily raise live-bearing species in sterilized earthen ponds dug into loam or bedrock. They fill ponds with sexually mature fish called broodstock and harvest offspring using baited traps. Most egg-laying fish are grown in commercial hatcheries.

As with ornamental fish, demand for farmed seafood has grown as wild-caught sources are increasingly depleted. Globally, more than 50% of all seafood for human consumption is produced through aquaculture.
“Seafood is considered one of the most in-demand sources of lean protein in the world, and it has to come from somewhere,” said Matthew DiMaggio, director of the UF/IFAS Tropical Aquaculture Laboratory in Ruskin. “The ocean can't produce any more than it already has, so aquaculture has to make up the deficit.”

In Florida, as the number of fish farms and the scale of their operations have grown, the value of food fish sales has skyrocketed. Between 2018 and 2023, sales rose from $4 million to $26 million, a 550% increase.

Some of the most common Florida food fish are tilapia, striped bass, cobia, pompano and red drum. They’re housed in various ways: operations can include fiberglass ponds, vats and tanks inside greenhouses, and recirculating systems occupying entire warehouses. Farmers typically start with fingerlings, or juvenile fish, purchased from reputable suppliers.

Aquaculture farmers share their experiences

Evans Farm of Pierson, Florida, is among the pioneering food fish farms in the state. Originally a cattle farm, the company expanded to sell tilapia, striped bass and caviar harvested from sturgeon. Fish are kept in filtered, recirculating ponds and long tanks known as raceways. They’re transported live to grocery stores and markets in vans outfitted with tanks and filtration systems.

“Our fish are thriving, and they’re healthy. We grow them with great water quality, and we feed them excellent food,” said Jane Davis, who owns the business with her mother and brothers. “Although they’re raised in water, they’re no different than other agriculture crops, whether it's cattle, chickens or anything else.”

Mollusks are another significant contributor to Florida aquaculture. While the sector includes oysters and scallops, clams are the dominant commodity; in 2023, they brought in $32 million of the state’s $43 million in mollusk sales. Clam farmers generally obtain grain-sized seed clams from hatcheries.
The smallest varieties are initially cared for in nursery systems. Once the shells become large enough, they’re transferred to bags submerged off the coast.

Cedar Key resident Heath Davis, no relation to Jane Davis, transitioned from fishing to clam farming in the mid-1990s. He and his father, Mike Davis, own Cedar Key Seafarms, one of the state’s leading wholesale clam distributors.

“Before, as fishermen, we would go out and place nets wherever we thought the fish were,” Heath Davis said. “But clamming is like farming. We lease a 2-acre, underwater plot from the state and harvest the product from our designated field.”

The Florida Aquaculture Plan

In November, the Florida Aquaculture Review Council, the official conduit between FDACS and farmers, published the latest revision of the Florida Aquaculture Plan, a detailed list of research and development priorities. Florida’s climate, infrastructure, streamlined regulations and positive business environment have positioned the state to become the national leader in aquaculture, but innovation is required to remain competitive, according to the document.

It’s a message Heath Davis echoed. “Aquaculture farming is such a huge part of Florida’s economy,” he said. “It could hold some of the answers needed to sustain the growing number of people living on this peninsula.”

Artificial intelligence is a resource-intensive technology. A paper recently published in Nano Letters by collaborators at the Virginia Commonwealth University (VCU) College of Engineering and Georgetown University aims to improve AI’s ability to parse the vast amounts of information it creates by applying magneto-ionics to the established concept of physical reservoir computing (PRC).

“Demonstrating we can make solid-state devices with magneto-ionic materials is an important step into further energy-efficient computing research, and this Nano Letters publication reinforces that,” said Muhammad (Md.) Mahadi Rajib, Ph.D., a postdoc with Jayasimha Atulasimha, Ph.D., Engineering Foundation Professor in the Department of Mechanical & Nuclear Engineering.

What makes a decision?

Our brains make countless complex decisions every day. Input comes in, we weigh options and decide what to do. Within that simple path are countless identical loops of input, consideration and output as neurons fire in a chain that takes you from cause to effect.

For artificial intelligence, nodes within a neural network receive inputs and provide output, much like the neurons in our brains. These outputs can be sent to other nodes for continued processing, but those outputs need weights to have value. For AI, a weight signifies that one input or connection is more important than another. Traditional neural networks have multiple layers consisting of countless nodes like this. Each node requires training in order to weigh things properly. Training consumes processing power, and processing power takes time and energy. Making tasks like analysis and prediction more efficient is key to continuously improving AI technology.

Less training, more efficiency.

Physical reservoir computing reduces the number of nodes an AI needs to train. Only the final output layer needs training in PRC, using a simple method for classification or prediction tasks.
In PRC, a physical “black box” replaces the neural network nodes and synapses, like the ones used for AI inference, and processes inputs by implementing a nonlinear mathematical function with temporal memory.

To explain the inner workings of the black box, imagine two stones thrown into still water. One stone is thrown with high force and the other with low force, creating big and small ripples respectively. If the stones are thrown so the second stone lands before the previous ripples have dissipated, the new ripple is affected by the earlier one. This illustrates the concept of temporal memory.

In this analogy, if multiple stones are thrown one after another into still water according to some complex trend, observing the ripples over time allows you to understand the trend and train a simple set of weights to predict the force of the next stone throw from the ripple pattern. Repeatedly performing this cycle of input, interaction and observation is PRC. It reveals patterns over time that can predict chaotic systems, like market trends or the weather, using simple techniques such as linear regression modeling to plot each output as a single point.

The magneto-ionic approach

Using the same example, the “water” in a magneto-ionic PRC is represented by a positive and a negative electrode with a solid-state electrolyte between them, through which ions move when voltage is applied. Applying voltage is equivalent to throwing a stone, and the ripple effect is comparable to the movement of oxygen ions in the system.

“In addition to its energy efficiency, a useful feature of the magnetoionic system is that time scales for ion diffusion can be controlled from microseconds to minutes,” Atulasimha said. “This leads to simple experimental demonstration, as no megahertz and gigahertz measurements are needed.
One can work at the natural time scales of the target application in practical systems and remove the need for complex frequency conversion, which takes both energy and space due to complex electronics.”

Atulasimha envisions applications for these energy-efficient reservoir systems in edge computing devices like drones, automated vehicles and surveillance cameras. Tasks such as household energy load forecasting, weather prediction or processing hourly readings from wearable devices, which operate on hour-scale data, can also be performed using magneto-ionic PRC without additional preprocessing.

“We showed that the magneto-ionic physical reservoir has both memory and nonlinear behavior, two important properties necessary for using it as a reservoir block,” Rajib said. “Our system stands out because voltage-controlled ion migration is a highly energy efficient method of manipulating magnetization. We demonstrated the required reservoir properties in a physical system and did so using a very energy efficient approach.”

Two labs came together to pursue this research. Virginia Commonwealth University collaborators included Atulasimha, Rajib, and VCU Ph.D. students Fahim Chowdhury and Shouvik Sarker. The Georgetown University team included Kai Liu, Ph.D., Professor and McDevitt Chair in Physics, Dhritiman Bhattacharya, Ph.D., Christopher Jensen, Ph.D. and Gong Chen, Ph.D.

Atulasimha’s group illustrated physical reservoir computing using numerical models of spintronic devices and sought a material system to experimentally demonstrate PRC. Liu’s team worked with magneto-ionic materials and was intrigued by the possibility of using them for computing applications.
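To make the idea of training only the output layer concrete, here is a minimal software sketch in Python. It is not the VCU/Georgetown magneto-ionic device or a model of it: it assumes a generic echo-state-style reservoir of leaky tanh nodes as a stand-in for the physical “black box,” and a hypothetical input signal made of two overlapping rhythms as the sequence of “stone throws.” The reservoir weights are random and never trained; only a linear readout is fit, using ridge (regularized least-squares) regression, to predict the next input from the current reservoir state. All function names and parameter values (20 nodes, leak rate 0.3, ridge 1e-4) are illustrative assumptions.

```python
import math
import random


def run_reservoir(inputs, n_nodes=20, leak=0.3, seed=0):
    """Drive a fixed, untrained nonlinear reservoir and record its state at every step."""
    rng = random.Random(seed)
    # Input and internal weights are random and stay fixed (the "black box" is never trained).
    w_in = [rng.uniform(-1.0, 1.0) for _ in range(n_nodes)]
    scale = 1.0 / math.sqrt(n_nodes)  # keeps internal feedback weak so old inputs fade out
    w_res = [[rng.uniform(-0.5, 0.5) * scale for _ in range(n_nodes)] for _ in range(n_nodes)]
    state = [0.0] * n_nodes
    states = []
    for u in inputs:
        new_state = []
        for i in range(n_nodes):
            drive = w_in[i] * u + sum(w_res[i][j] * state[j] for j in range(n_nodes))
            # Leaky update: earlier "ripples" decay but still shape the new state
            # (temporal memory), while tanh supplies the nonlinearity.
            new_state.append((1.0 - leak) * state[i] + leak * math.tanh(drive))
        state = new_state
        states.append(state)
    return states


def solve(a, b):
    """Solve the linear system a @ x = b by Gaussian elimination with partial pivoting."""
    n = len(b)
    m = [row[:] + [b[i]] for i, row in enumerate(a)]
    for col in range(n):
        pivot = max(range(col, n), key=lambda r: abs(m[r][col]))
        m[col], m[pivot] = m[pivot], m[col]
        for r in range(col + 1, n):
            factor = m[r][col] / m[col][col]
            for c in range(col, n + 1):
                m[r][c] -= factor * m[col][c]
    x = [0.0] * n
    for i in range(n - 1, -1, -1):
        x[i] = (m[i][n] - sum(m[i][j] * x[j] for j in range(i + 1, n))) / m[i][i]
    return x


def train_readout(states, targets, ridge=1e-4):
    """Fit the only trained part: linear output weights, via ridge regression."""
    feats = [s + [1.0] for s in states]  # append a constant bias feature
    d = len(feats[0])
    # Normal equations: (X^T X + ridge * I) w = X^T y
    xtx = [[sum(f[i] * f[j] for f in feats) + (ridge if i == j else 0.0) for j in range(d)]
           for i in range(d)]
    xty = [sum(f[i] * y for f, y in zip(feats, targets)) for i in range(d)]
    return solve(xtx, xty)


def predict(weights, state):
    return sum(w * s for w, s in zip(weights, state + [1.0]))


def rmse(pred, true):
    return math.sqrt(sum((p - t) ** 2 for p, t in zip(pred, true)) / len(true))


def demo(n_steps=400, washout=50, n_train=250):
    # Hypothetical "stone forces": two overlapping rhythms standing in for a complex trend.
    u = [0.5 * math.sin(0.3 * t) + 0.3 * math.sin(0.07 * t) for t in range(n_steps + 1)]
    states = run_reservoir(u[:-1])  # states[t] is the reservoir after seeing input u[t]
    targets = u[1:]                 # the task: predict the next input u[t + 1]
    train = range(washout, washout + n_train)  # skip the start-up transient
    test = range(washout + n_train, n_steps)
    weights = train_readout([states[t] for t in train], [targets[t] for t in train])
    pred = [predict(weights, states[t]) for t in test]
    true = [targets[t] for t in test]
    naive = [u[t] for t in test]  # baseline: "the next throw equals the current one"
    return rmse(pred, true), rmse(naive, true)


if __name__ == "__main__":
    reservoir_err, naive_err = demo()
    print(f"reservoir readout RMSE: {reservoir_err:.4f}, naive baseline RMSE: {naive_err:.4f}")
```

In this sketch the leak rate plays the role of how slowly the ripples fade: a lower value keeps older inputs echoing in the state for longer. Comparing the trained readout against the naive "next value equals current value" baseline gives a rough check that the readout is actually exploiting the reservoir's memory rather than just copying the latest input.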

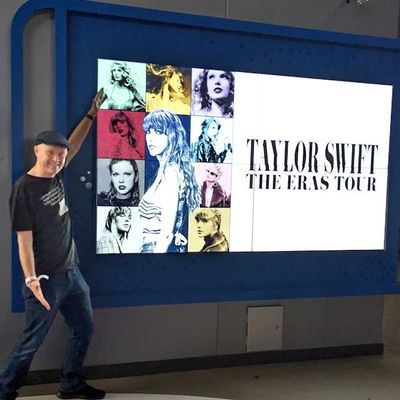

At Texas Christian University, Dr. Andrew Ledbetter, Chair of the Communication Studies Department, is turning his scholarly attention to one of pop culture’s biggest phenomena: Taylor Swift. His research uses data-driven analysis to reveal how Swift’s albums and songs build an interconnected narrative universe, what he calls her “Taylorverse.”

Ledbetter ran lyrics across ten albums through semantic-network software to show how certain songs act as linchpins connecting themes of fame, womanhood, love and storytelling.

“I was interested in interconnections among the song lyrics,” says Ledbetter. “The songs that are most central have a lot of overlap with other songs, might tend to be songs that are the most popular.”

The work stands out not just for its pop-culture relevance, but for its academic innovation: combining computational text analysis with narrative theory to unlock why certain tracks resonate more deeply than others.

For journalists, cultural commentators or anyone covering the evolving intersection of music, identity and media, Dr. Ledbetter is a go-to expert. He can speak to how storytelling in music shapes audience engagement, how media fandom becomes scholarship, and why Swift’s songwriting continues to spark new research just as much as chart-topping hits.

Andrew Ledbetter is available for interviews.

Fueling the Future of Cancer Immunotherapy: Gang Zhou’s Research Takes a Major Step Forward

Cancer immunotherapy has transformed how clinicians approach the treatment of certain blood cancers, but major limitations remain — especially when it comes to sustaining strong, long-lasting immune responses. Gang Zhou, PhD, a leading cancer immunologist at Augusta University’s Georgia Cancer Center and the Immunology Center of Georgia, is tackling these challenges head-on. Zhou’s work focuses on how T cells behave inside the body and how their performance can be enhanced to improve patient outcomes. His lab studies the forces that strengthen or weaken T cell responses, including their functional status, their ability to self-renew and the environmental pressures they face inside tumors. This deep understanding positions him as a key figure in the effort to advance next-generation immunotherapies. Recently, Zhou and his research team were awarded the first Ignite Grant from the Immunology Center of Georgia — a seed program designed to support bold, high-impact translational ideas. Their funded project aims to make CAR-T therapy more effective. CAR-T is a type of immunotherapy in which a patient’s own T cells are genetically modified to recognize and attack cancer cells. While this approach has revolutionized the treatment of certain blood cancers, it still faces obstacles such as limited cell persistence and reduced strength over time. “Our ultimate goal is to engineer T cells that not only survive longer but also remain highly functional, giving patients more durable protection against their disease.” Zhou’s team is addressing this issue by studying how a modified form of STAT5, a transcription factor that plays a key role in T cell survival and function, may help engineered T cells last longer and perform better. The ultimate goal is to create CAR-T therapies that maintain potency, withstand the harsh tumor microenvironment, and offer durable results for patients. 
The Ignite Grant recognizes not only the promise of this specific project, but also Zhou’s broader expertise in understanding how T cells can be guided, supported, and strengthened to fight cancer more effectively. His research contributes to a growing wave of scientific innovation aimed at improving immunotherapy outcomes for patients with both blood cancers and, potentially, solid tumors, an area where current treatments face significant barriers. As immunotherapy continues to evolve, the work led by Gang Zhou stands as a compelling example of how foundational science, translational research, and clinical ambition can work together to push the field forward. To connect with Dr. Gang Zhou, simply contact AU's External Communications Team at mediarelations@augusta.edu to arrange an interview today.

Study: Lessons learned from 20 years of snakebites

The best way to avoid getting bitten by a venomous snake is to not go looking for one in the first place. Like eating well and exercising to feel better, the avoidance approach is fully backed by science. A new study from University of Florida Health researchers analyzed 20 years of snakebite cases seen at UF Health Shands Hospital in Gainesville. “This is the first time we’ve evaluated two decades of venomous snakebites here,” said senior author and assistant professor of medicine Norman L. Beatty, M.D., FACP. Researchers analyzed 546 de-identified patient records from 2002 to 2022 and highlighted notable conclusions: for instance, that a third of the snakebites analyzed were preventable and caused by people intentionally engaging with wild snakes. “Typically, people’s experiences with getting bitten are due to an interaction that was inadvertent: they stumble upon a snake or reach for something without seeing one camouflaged,” Beatty said. “In this case, people were seeking them out. There were a few individuals who were bitten on more than one occasion.” Most (77.8%) of the snakebites occurred in adult men while they were handling wild snakes, and most of the bites came from the diminutive pygmy rattlesnake and the cottonmouth. The latter is named for the white lining of its mouth, which it displays when threatened. “I was less surprised to see those species emerge as some of the most common ones people were bitten by, but the robust presence of other, less common species in the data, like the eastern coral snake, southern copperhead, timber rattlesnake and the eastern diamondback rattlesnake, was interesting,” Beatty said. The eastern diamondback rattlesnake is one of the most venomous snakes in North America. Most patients were bitten on their hands and fingers, and around 10% of them attempted outdated self-treatments no longer recommended by doctors, like sucking out the venom.
The study began as a medical student research project, thanks to a handful of medical students who worked with Beatty to review the cases. The intention was to dive deep into the circumstances of each encounter and learn more about the treatment given, as well as the outcomes. Fourth-year medical student River Grace, the paper’s first author, said the work struck a personal note. “My dad is a reptile biologist, so I’ve grown up around snakes my whole life,” Grace said. “He was bitten by a venomous snake many years ago and ended up hospitalized for multiple weeks, so it was interesting to keep that experience in mind while going over the data.” Grace noted that it took those bitten over an hour, on average, to travel from where the bite occurred to the hospital. “It seems like the reason for that was people not knowing exactly what to do once they’d been bitten, or underestimating the severity of the bite,” he said. “Some would just sit at home for hours.” Floridians share their home with a variety of scaly neighbors who don’t always welcome visitors, accidental or not. Ultimately, thanks to the timely care of providers, only three snakebites were fatal. However, antivenom is no panacea. Those who are lucky enough to receive it in time can still incur complications from the original snakebite, like tissue damage, or even a fatal allergic reaction to the antivenom itself. Consequently, researchers are looking toward improving the processes used to triage snakebites in the emergency room, ensuring that providers have the knowledge and the know-how to shorten time to treatment. “In the future, we think we’d love to get involved in enhancing provider education so everyone in the health care setting is confident in being able to identify and administer antivenom as quickly and safely as possible,” Grace said.

UF team develops AI tool to make genetic research more comprehensive

University of Florida researchers are addressing a critical gap in medical genetic research — ensuring it better represents and benefits people of all backgrounds. Their work, led by Kiley Graim, Ph.D., an assistant professor in the Department of Computer & Information Science & Engineering, focuses on improving human health by addressing "ancestral bias" in genetic data, a problem that arises when most research is based on data from a single ancestral group. This bias limits advancements in precision medicine, Graim said, and leaves large portions of the global population underserved when it comes to disease treatment and prevention. To solve this, the team developed PhyloFrame, a machine-learning tool that uses artificial intelligence to account for ancestral diversity in genetic data. With funding support from the National Institutes of Health, the goal is to improve how diseases are predicted, diagnosed, and treated for everyone, regardless of their ancestry. A paper describing the PhyloFrame method and how it showed marked improvements in precision medicine outcomes was published Monday in Nature Communications. Graim, a member of the UF Health Cancer Center, said her inspiration to focus on ancestral bias in genomic data evolved from a conversation with a doctor who was frustrated by a study's limited relevance to his diverse patient population. This encounter led her to explore how AI could help bridge the gap in genetic research. “I thought to myself, ‘I can fix that problem,’” said Graim, whose research centers around machine learning and precision medicine and who is trained in population genomics.
“If our training data doesn’t match our real-world data, we have ways to deal with that using machine learning. They’re not perfect, but they can do a lot to address the issue.” By leveraging data from the population genomics database gnomAD, PhyloFrame integrates massive databases of healthy human genomes with the smaller datasets specific to diseases used to train precision medicine models. The models it creates are better equipped to handle diverse genetic backgrounds. For example, it can predict the differences between subtypes of diseases like breast cancer and suggest the best treatment for each patient, regardless of patient ancestry. Processing such massive amounts of data is no small feat. The team uses UF’s HiPerGator, one of the most powerful supercomputers in the country, to analyze genomic information from millions of people. For each person, that means processing 3 billion base pairs of DNA. “I didn’t think it would work as well as it did,” said Graim, noting that her doctoral student, Leslie Smith, contributed significantly to the study. “What started as a small project using a simple model to demonstrate the impact of incorporating population genomics data has evolved into securing funds to develop more sophisticated models and to refine how populations are defined.” What sets PhyloFrame apart is its ability to ensure predictions remain accurate across populations by considering genetic differences linked to ancestry. This is crucial because most current models are built using data that does not fully represent the world’s population. Much of the existing data comes from research hospitals and patients who trust the health care system. This means populations in small towns or those who distrust medical systems are often left out, making it harder to develop treatments that work well for everyone.
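PhyloFrame's internals are not spelled out in the article, so the sketch below does not reproduce them. It only illustrates, with hypothetical data, what checking that predictions "remain accurate across populations" can look like in practice: instead of reporting one overall accuracy figure, a classifier's accuracy is broken out by ancestry group. The function name, subtype labels and group codes are all made up for illustration.

```python
from collections import defaultdict


def accuracy_by_group(y_true, y_pred, groups):
    """Return classification accuracy computed separately for each group."""
    total = defaultdict(int)
    correct = defaultdict(int)
    for truth, pred, group in zip(y_true, y_pred, groups):
        total[group] += 1
        correct[group] += int(truth == pred)
    return {g: correct[g] / total[g] for g in total}


# Hypothetical disease-subtype labels and predictions for six patients.
y_true = ["A", "B", "A", "B", "A", "B"]
y_pred = ["A", "B", "A", "A", "B", "B"]
groups = ["EUR", "EUR", "EUR", "AFR", "AFR", "AFR"]

overall = sum(t == p for t, p in zip(y_true, y_pred)) / len(y_true)
per_group = accuracy_by_group(y_true, y_pred, groups)

print(f"overall: {overall:.2f}")
for group, acc in per_group.items():
    print(f"{group}: {acc:.2f}")
```

In this toy case an overall accuracy of about two thirds hides the fact that the hypothetical model is right every time for one group and only a third of the time for the other; surfacing that kind of gap is the point of an ancestry-aware evaluation.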
She also estimated that 97% of sequenced samples are from people of European ancestry, due largely to national- and state-level funding and priorities, but also to socioeconomic factors that snowball at different levels: insurance affects whether people get treated, for example, which affects how likely they are to be sequenced. “Some other countries, notably China and Japan, have recently been trying to close this gap, and so there is more data from these countries than there had been previously but still nothing like the European data,” she said. “Poorer populations are generally excluded entirely.” Thus, diversity in training data is essential, Graim said. “We want these models to work for any patient, not just the ones in our studies,” she said. “Having diverse training data makes models better for Europeans, too. Having the population genomics data helps prevent models from overfitting, which means that they'll work better for everyone, including Europeans.” Graim believes tools like PhyloFrame will eventually be used in the clinical setting, replacing traditional models to develop treatment plans tailored to individuals based on their genetic makeup. The team’s next steps include refining PhyloFrame and expanding its applications to more diseases. “My dream is to help advance precision medicine through this kind of machine learning method, so people can get diagnosed early and are treated with what works specifically for them and with the fewest side effects,” she said. “Getting the right treatment to the right person at the right time is what we’re striving for.” Graim’s project received funding from the UF College of Medicine Office of Research’s AI2 Datathon grant award, which is designed to help researchers and clinicians harness AI tools to improve human health.
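Beyond drawing on gnomAD, the article does not say how PhyloFrame handles the mismatch Graim describes between training data and real-world data. One standard and much simpler machine-learning response to that kind of mismatch is importance weighting: each training sample is weighted by how common its group is in the target population relative to the training set. The sketch below is not PhyloFrame's method. It uses the 97% estimate as a hypothetical training mix and assumes an even 50/50 target population; the function name is made up for illustration.

```python
from collections import Counter
from fractions import Fraction


def group_weights(train_groups, target_share):
    """Per-sample weight for each group: target share / training share."""
    counts = Counter(train_groups)
    n = len(train_groups)
    return {g: Fraction(target_share[g]) / Fraction(counts[g], n) for g in counts}


# Hypothetical training set mirroring the ~97% European estimate.
train_groups = ["European"] * 97 + ["non-European"] * 3
# Hypothetical target population: an even split.
target_share = {"European": Fraction(1, 2), "non-European": Fraction(1, 2)}

weights = group_weights(train_groups, target_share)
for group, w in weights.items():
    print(group, float(w))
```

Each non-European sample ends up counting about 32 times as much as a European one (97/3), so the two groups contribute equally to a weighted training loss. Reweighting cannot create information the three minority samples do not contain, though, and a few heavily weighted samples can make training noisy, which is why more diverse data still matters.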

LSU Experts Break Down Artificial Intelligence Boom Behind Holiday Shopping Trends

Consumers are increasingly turning to artificial intelligence tools for holiday shopping, especially Gen Z shoppers, who are using platforms like ChatGPT and social media not only for gift inspiration but also to find the best prices. Andrew Schwarz, professor in the LSU Stephenson Department of Entrepreneurship & Information Systems, and Dan Rice, associate professor and Director of the E. J. Ourso College of Business Behavioral Research Lab, share their insights on this emerging trend.

AI is the new front door for search

Schwarz: We’re seeing a fundamental change in how consumers find information. Instead of browsing multiple pages of results, users, especially Gen Z, are skipping to conversational AI for curated answers. That dramatically shortens the shopping journey. For years, companies optimized for SEO to appear on the first page of Google; now they’ll have to think about how their products surface in AI-generated recommendations. This may lead to a new form of “AIO” (AI Information Optimization), where retailers tailor product descriptions, metadata, and partnerships specifically for AI visibility. The companies that adapt early will have a distinct advantage in capturing consumer attention.

Rice: This issue of people being satisfied with the AI results (like a summary at the top of the Google results) and then not clicking on any of the paid or organic links leads to a huge increase in what we call “zero click search” (for obvious reasons). For some providers, this is leading to significant drops in web traffic from search results, which can be disconcerting due to the potential loss of leads. However, to Andrew’s point of shortening the journey, it means that the consumers who do come through are much more likely to buy (quickly) because they are “better” leads.
This translates to seemingly paradoxical situations for providers: they see drops in click-through rates and visitors/leads, yet revenue increases because the visitors are “better.”

There is a rise in personalized shopping journeys

Schwarz: AI essentially acts as a personal shopper, one that can instantly analyze preferences, budget, personality traits, or past behavior to produce tailored gift lists. This shifts power toward “delegated decision-making,” in which consumers allow AI to narrow their choices. Younger consumers are already comfortable outsourcing this cognitive load. However, as ads enter the picture, these personalized journeys could be shaped by incentives that aren’t always transparent. That creates a new responsibility for platforms to disclose when suggestions are sponsored and for users to develop a more critical lens when interacting with AI-driven recommendations.

Rice: This is also a great point. The “tools” marketers use to attract customers are constantly evolving, but this seems in many ways to be the next iteration of the Amazon.com suggestions that you find at the bottom of the product page for something you click on when searching Amazon (“buy all x for $” or “consumers also looked at…,” etc.), based on past histories of search and purchase, etc. One of the main differences is that you can now create virtually limitless ways to compare products, making comparisons less taxing (reducing cognitive load and stress), which may, in some cases, increase the likelihood of purchase. These idiosyncratic comparisons and prompts lead to the truly unique journeys Andrew is discussing. You no longer have to be beholden to a retailer-specified price range. You could choose your own, or instead ask an AI to list the products representing the best “value” based on consumer reviews, perhaps by asking to list the top ten products by cost per star rating, etc.
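The "cost per star rating" idea is simple arithmetic: divide each product's price by its average review score and sort ascending, so the cheapest dollars-per-star come first. Here is a minimal sketch with made-up products and prices; an AI assistant asked this question might do something similar, but nothing here describes how any particular assistant actually works.

```python
from dataclasses import dataclass


@dataclass
class Product:
    name: str
    price: float  # US dollars
    stars: float  # average review rating on a 1-5 scale


def best_value(products, k=10):
    """Return the k products with the lowest price per star."""
    return sorted(products, key=lambda p: p.price / p.stars)[:k]


# Hypothetical catalog.
catalog = [
    Product("gift_a", 40.0, 4.0),  # $10.00 per star
    Product("gift_b", 30.0, 5.0),  # $6.00 per star
    Product("gift_c", 25.0, 2.5),  # $10.00 per star
    Product("gift_d", 90.0, 4.5),  # $20.00 per star
]

top = best_value(catalog, k=2)
print([p.name for p in top])  # ['gift_b', 'gift_a']
```

Ties (gift_a and gift_c both cost $10 per star) keep their catalog order because Python's sort is stable. The metric also ignores how many reviews stand behind each rating, so a practical "value" ranking would probably also require a minimum review count.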
Advertising is becoming more subtle and conversational

Schwarz: With ads woven directly into AI responses, the traditional boundary between content and advertising blurs. Instead of banner ads, pop-ups, or clearly labeled sponsored posts, recommendations in a conversational thread may feel more like advice than marketing. This has enormous implications for consumer trust. Retailers will likely see higher engagement through these context-aware ad placements, but regulatory scrutiny may also increase as policymakers evaluate how clearly sponsored content is identified. The risk is that advertising becomes invisible, something both platform designers and regulators will need to monitor carefully.

Rice: This is definitely true. I was recently exploring an AI-based tool for choosing downhill skis, but the tool was subtly provided by a single ski brand. I’m not sure the distribution of ski brands covered was truly delivering the “best overall fit” for a potential buyer, rather than the best possible ski in that brand. At least in that case, it was somewhat disclosed. It does, however, become an issue if consumers feel misled, but they’d have to notice it first. Still, the advantages are big for retailers, and the numbers don't lie. According to some preliminary Black Friday data, shoppers using an AI assistant were 60% more likely to make a purchase.

Schwarz: This shift is going to reshape multiple layers of the retail ecosystem:

- Retailers will need to rethink how they show up in AI-driven environments. Traditional SEO, ad bids, and social media strategies won’t be enough. Partnerships with AI platforms may become as important as being carried by major retailers today.
- Because AI tools can instantly compare prices across dozens of retailers, consumers will become more price-sensitive. Retailers may face increasing pressure to offer competitive pricing or unique value propositions, as AI reduces friction in comparison shopping.
- Retailers who integrate AI into their own websites (chat-based shopping assistants, personalized gift advisors, automated bundling) will gain an edge. Consumers are increasingly expecting conversational interfaces, and companies that delay will quickly feel outdated.
- As AI tools influence purchasing decisions, consumers and regulators alike will demand clarity around how recommendations are generated. Retailers will need to navigate this carefully to maintain trust.

What I think we are going to see accelerate as we move forward:

- AI-powered concierge shopping will become mainstream. Within a couple of years, using AI to generate shopping lists, compare prices, and find deals will be as common as using Amazon today.
- Retailers will create AI-specific marketing strategies. Instead of optimizing for keywords, they’ll optimize for prompts: how consumers might ask for products and how an AI system interprets those requests.
- More platforms will introduce advertising into AI models. ChatGPT is simply the first mover. Once the revenue potential becomes clear, others will follow with their own ad integrations.
- Greater scrutiny from policymakers. As conversational advertising grows, transparency rules and labeling requirements will almost certainly emerge.
- A new era of “conversational commerce.” Buying directly through AI (“ChatGPT, order this for me”) will become increasingly common, merging search, recommendation, and transaction into a single seamless experience.

I can speak to this on a personal level. My college-aged son is interested in college football, and I wanted to get him a streaming subscription to watch the games. However, the football landscape is fragmented across multiple, expensive platforms. I asked ChatGPT to generate a series of options. Hulu is $100/month for Live TV, but ChatGPT recommended a combination of ESPN+, Peacock, and Paramount+ for $400/year and identified which conferences would not be covered. What would have taken me hours only took me a few minutes!
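The savings in this anecdote are easy to check. The sketch uses the prices exactly as quoted (not independently verified) and assumes that the Hulu Live TV plan is paid month by month while the three-service combination is a flat $400 per year; the constant and function names are made up for illustration.

```python
HULU_LIVE_MONTHLY = 100  # USD per month, as quoted in the interview
BUNDLE_YEARLY = 400      # USD per year for ESPN+, Peacock and Paramount+, as quoted


def hulu_cost(months: int) -> int:
    """Cost of keeping Hulu Live TV for a given number of months."""
    return HULU_LIVE_MONTHLY * months


full_year_savings = hulu_cost(12) - BUNDLE_YEARLY
break_even_months = BUNDLE_YEARLY / HULU_LIVE_MONTHLY

print(full_year_savings)  # 800
print(break_even_months)  # 4.0
```

Under these assumptions the combination saves $800 over a full year, but it only beats Hulu if the subscription would otherwise run longer than four months; kept for fewer than four months, Hulu would cost less than the $400 combination. As the quote notes, the cheaper option also leaves some conferences uncovered.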
Rice: On the other hand, AI isn’t infallible, and it can lead to sub-optimal results, hallucinations, and questionable recommendations. From my recent ski shopping experience, I encountered several pitfalls. First, for very specific questions about a specific model, I sometimes received answers for a different ski model in the same brand or for a different ski altogether, or specs I knew were just plain wrong, none of which was particularly helpful. Second, regarding Andrew’s point about the conversational tone, I asked questions intended to push the limits of what could be considered reliable. For example, I asked the AI to describe the difference in “feel” of the ski for the skier among several models and brands. While the AI gave very detailed and plausible comparisons that were very much like an in-store discussion with a salesperson or area expert, I’m not sure I fully trust an AI when it tells me that you can really feel the power of a ski pushing you out of a turn, or that this ski has great edge hold. It sounds great, but where is the AI sourcing this information? I’m not convinced it’s fully accurate. It also seems we’re starting to see Google shift toward a more AI-centric approach (e.g., AI summaries and full AI Mode). At the same time, we’re also starting to see AI migrate closer to Google as people use it for product-related chats, and companies like Amazon and Walmart have developed their own AI that is specifically focused on the consumer experience. I can’t imagine it will be long before companies like OpenAI and their competitors start “selling influence” in AI discussions to monetize the influence their engines will have.