Major trial shows increasing bone density fails to cut fracture risk in brittle bone disease

An international clinical trial involving Aston University researchers has challenged long-held assumptions about how brittle bone disease is treated in adults, after finding that substantially increasing bone density did not reduce the risk of fractures.

The study, published in the Journal of the American Medical Association (JAMA), examined whether a two-stage treatment using the bone-building drug teriparatide followed by the bone-preserving drug zoledronic acid could reduce fractures in adults with osteogenesis imperfecta, often referred to as brittle bone disease, a rare genetic condition that causes bones to break easily throughout life.

Researchers followed 349 adults treated at 27 specialist centres across the UK and Europe. While the treatment led to clear increases in bone density in the spine and hip, fracture rates were no lower than among patients receiving standard care, suggesting that bone quality may matter more than bone density alone in preventing fractures in people with the condition.

The findings underline a key distinction between brittle bone disease and more common bone conditions such as osteoporosis, where increasing bone density is known to reduce fracture risk. In osteogenesis imperfecta, the study suggests that bones can become denser without becoming less likely to break, indicating that the underlying quality and structure of bone tissue may play a greater role in fracture risk than density alone.

Dr Zaki Hassan Smith, an endocrinologist at Aston Medical School who contributed to the research, said: “This study shows that in osteogenesis imperfecta, simply increasing bone density doesn’t necessarily translate into fewer fractures. That’s important, because it tells us that the disease is more complex than what we see on a scan.
The findings help shift the focus towards understanding bone quality and how bones behave in real life, which is essential if we are to develop more effective treatments that genuinely reduce harm for patients.”

Osteogenesis imperfecta is a genetic condition that affects collagen, leaving bones fragile and prone to fracture throughout life. There is currently no licensed treatment specifically approved to prevent fractures in adults with the condition, and patients often experience repeated fractures, chronic pain and long-term disability.

The trial tested a sequential treatment strategy commonly used in osteoporosis, where a bone-building drug is followed by a treatment designed to preserve gains in bone strength. Although this approach successfully increased bone density in people with osteogenesis imperfecta, it did not reduce fracture rates, suggesting that treatment strategies effective in osteoporosis may not directly translate to rare bone diseases.

Researchers did observe improvements in some quality-of-life measures among participants receiving the treatment, including reduced pain interference and improved mobility. However, fracture prevention remained unchanged, reinforcing the need for new approaches that target the fundamental properties of bone in osteogenesis imperfecta rather than density alone.

The study was led by the University of Edinburgh and funded by the Medical Research Council and the National Institute for Health and Care Research. Aston University contributed clinical and academic expertise through Aston Medical School as part of the large international collaboration, which involved specialist centres across the UK and Europe.

Researchers say the findings provide important guidance for future research, helping to steer efforts towards treatments that focus on bone quality, strength and resilience in everyday life. They also highlight the value of large-scale clinical trials in rare diseases, where learning what does not reduce harm is an essential step towards better care.

The paper, Teriparatide Plus Zoledronic Acid for Osteogenesis Imperfecta, is published in JAMA. https://doi.org/10.1001/jama.2026.6889

Expert Insight: The Hidden Costs of Staying Neutral

Considering the number of hot-button issues and divisiveness in American culture, choosing a middle-of-the-road attitude might be seen as the best way to navigate an often volatile environment. But what about those individuals who choose neutrality as a means of staying below the radar and, thereby, avoiding the need to take any action?

This is the question that Laura Wallace, assistant professor of organization and management at Emory’s Goizueta Business School, and coauthors ask in their new paper, The Preference for Attitude Neutrality. In the paper, published in the Journal of Experimental Psychology: General, the researchers explore individuals with a preference for neutrality and how their uncompromising commitment to neutral opinions not only discourages rigorous debate but could also have a deleterious impact on society.

Emory Business recently caught up with Wallace to discuss her research.

Emory Business: What sparked your interest in the preference for neutrality?
Wallace: When we think about the problems in the world, people often point to too many extreme opinions as the source of much social ill, and, of course, they can be. But when I thought about a lot of the issues that I cared about, like addressing climate change or gun violence, I felt that sometimes the issue was too much neutrality in the face of issues that were themselves pretty extreme. When I talk about this work, people can often picture someone who seems like a “Pref Neutral,” as we have affectionately nicknamed them: someone who, in the face of information suggesting that there is an extreme problem, is not moved to address the issue. I could think of people in my life who had these reactions, and I was interested in understanding more about them.

Emory Business: How did you identify these individuals?
Wallace: We developed a scale to assess the extent to which people view neutrality as truer, more socially desirable, and more moral.
For example, we ask people how much they agree with items like, “If you have all the facts about a topic, your opinion will generally end up somewhere neutral” and “There is something noble about remaining in the middle about controversial topics.” The more someone agrees with these items, the more we would say they have a preference for neutrality.

Emory Business: How does this study fit in with your larger body of work?
Wallace: I generally think of my program of research as studying the “psychology of social change.” Within that broad category, I study 1) how to change minds and build trust and 2) how to address societal disadvantage. I view this work as fitting in the first bucket about how we change people’s minds. What interests me about people who are high in the preference for neutrality is the fact that they seem to NOT change their minds in the face of extreme information suggesting that they should. These individuals represent a significant barrier to our ability to address pressing issues, so I view this work as very much tied into the overarching goal of my research program to understand social change (or the lack thereof).

Laura Wallace is an assistant professor of organization and management at Emory University’s Goizueta Business School. Wallace studies how to build trust with implications for addressing societal disadvantage, changing minds, and fostering growth.

Emory Business: Would you describe a preference for neutrality to be a mindset, strategy, or attitude/value?
Wallace: I think of the preference for neutrality like an ideology or value system that guides people’s reactions across many issues and situations.

Emory Business: Talk about the study design. It’s quite detailed and multilayered, with eight hypotheses and six different measures to account for potential bias that were then randomized to create different questionnaires given to a large pool of individuals. How did the coauthors agree on the structure?
Wallace: First, I should take the opportunity to shout out Thomas Vaughan-Johnston, who led this work. He is a faculty member at Cardiff University and is just a very thoughtful, interesting researcher, and he’s great to work with. Second, there are a number of studies in the paper. For each, our research team worked together to design and interpret the studies. The paper paints a relatively negative view of Pref Neutrals. We did take measures to resist bias in our design. For instance, we didn’t just ask people how much they dislike extremists (which would have been biased towards making those with a preference for neutrality look bad), but also asked about attitudes towards neutrals (where those with a preference for neutrality may seem like “the nice people”). We are now starting research on contexts where a preference for neutrality can offer some advantages, hopefully without artificially striking a false balance. For instance, we are considering whether they can help reduce group polarization effects, especially where groups drift towards radicalism in conversation. Also, we have some preliminary data where they seem to be a bit more accurate when detecting neutral emotions and attitudes in others, which is a remarkable plus side. Basically, we think the preference for neutrality is a social concern, but we are trying to be fair-minded when considering why they think this about neutrality and when this trait is useful for the world.

Emory Business: In the study, you note that preference for neutrality can be a sign of arrogance and that Pref Neutrals are uninterested in learning more or changing their stance. How is this arrogance exhibited?
Wallace: I would say that they are more close-minded than arrogant and that they don’t seem to be particularly thoughtful.
One way we have assessed this is by measuring their “intellectual humility,” which essentially captures how much people recognize the limits of their own perspectives and are open to changing their minds. Pref Neutrals tend to score low on intellectual humility. They also score a little low on the “need for cognition,” which captures how much people like to think.

Emory Business: In one section it reads: “preference for neutrality (preference for extremity) should relate to seeing other people as moral, competent, and likeable, when those individuals have generally neutral (extreme) opinions.” Does this mean that they align with people who have their same opinion structure?
Wallace: We find that people who score high on the preference for neutrality scale tend to have more favorable impressions of others who are more neutral and tend to be more persuaded by others who are labeled as holding neutral attitude positions.

Emory Business: How would one identify this trait in a person, particularly when the research shows they tend to self-censor?
Wallace: In general, they are really hesitant to take stances on issues, or they tend to avoid taking sides or expressing strong positions. And yes, they tend to self-censor, meaning they often avoid sharing their opinion at all.

Emory Business: How does this preference for neutrality play out in a political sense? Specifically, if they are averse to extremes, would they vote based on their values?
Wallace: We have a lot of evidence that Pref Neutrals tend to be political centrists. We don’t have evidence for this, but I suspect that they sit out a lot of elections, and to the extent that they do vote, they favor more moderate candidates. They probably would not vote for a position or individual with an extreme view unless it was framed as neutral.
This may sound like a silly, cerebral point, but I actually think it’s critical to the point we are making, as what is viewed as “extreme” in a given time is often socially determined. For example, now it would be viewed as an extreme stance to support slavery. However, in the early 1800s in the U.S., it would have been viewed as an extreme stance to oppose slavery. I imagine at the time, many Pref Neutrals were supportive of slavery as a means of being politically moderate.

Emory Business: What was the most interesting result in this study for you?
Wallace: We find that if you give Pref Neutrals the exact same information but label it as extreme or neutral, they are more persuaded by it when it is labeled as neutral. This results in a kind of ironic effect where they actually end up with a more extreme opinion when information has been labeled as neutral.

Emory Business: Research-wise, what’s next for you?
Wallace: There are a few ways that we are following up on our work that I am excited about. First, we’re trying to understand more about how Pref Neutrals maintain neutral opinions in the face of extreme information. So, we are giving Pref Neutrals true, extreme facts, and then examining their thoughts to determine how they resist taking the extreme positions that the information would suggest they should. Second, we thought that Pref Neutrals would be particularly likely to trivialize social issues, to say they are unimportant. We are actually finding that they rate all social issues as extremely important, which we are trying to understand more about. We suspect they might do this as a strategy to avoid taking action on social issues. If stubbed toes and human trafficking are both “extremely” important, then there are just too many issues to take action on, and so they are able to justify a lack of action. Third, we are interested in understanding what it is like to make decisions in a group with a Pref Neutral.
There is a lot of evidence that groups tend to make bad decisions because people want to agree with each other. This might actually be an area where Pref Neutrals would shine – the fact that they don’t want to take a stance may force groups they are a part of to really think things through and make better decisions. This is all super preliminary, but it reflects the exciting work ahead and that there is much more to understand about these folks!

Expert Insights: Want More Engagement? Eliminate the Barriers.

Anyone born in the 1970s or earlier will probably remember it well. Time was when playing any kind of video game meant physically taking yourself to the local arcade, a twilight zone of flashing neon, electronic beeps and bops, and the clink of quarters hitting the slot.

As technology advanced, the video game came to you. Home consoles and TV sets rigged with joysticks duly became the mainstay of gaming. The Atari 2600 brought the arcade experience into dens all over the US, putting Pac-Man, Space Invaders, and Asteroids at the fingertips of a generation of gamers who no longer needed to leave home to play.

Fast forward to the era of smartphones and hi-tech, and gaming has evolved again. Today, Fortnite, Minecraft, and The Legend of Zelda can accompany you pretty much anywhere: onto a train or a bus, into the canteen at work or school, or under the covers at 2 a.m. In our always-on, on-demand world, video gaming increasingly meets players where they are: a play-anywhere, digital user experience that empowers individuals to engage with their game of choice wherever they are, whenever it suits, and via whatever platform they prefer, desktop or mobile.

For users, the benefits seem clear. But what about game producers? As availability expands to new channels and platforms, how does it change user behavior? Does it deepen engagement, or does cross-platform continuity simply end up redistributing play, with each new platform shifting players away from, and effectively cannibalizing, existing channels?

It’s a conundrum, and not just for video game producers. Retailers, bankers, insurance firms, media, and hospitality providers (anyone with an online-first approach looking to meet their customers wherever they are) should also be cognizant of the potential downsides of channel expansion in the digital space.

Weighing in here is research by Michael Lewis, professor of marketing and an expert in the intersection of sports and cultural analytics and marketing.
Together with Wooyong Jo of Purdue, Lewis looks at the impact of omni-channel strategy on video games, which he says serve as a proxy for other sectors and industries.

What they find is critical for marketers and decision-makers in any context or business setting. Increasing the digital touchpoints between your product and customers does impact behavior, but the net results are overwhelmingly positive. Video game players play more, they spend more frequently, and they integrate gameplay more deeply into their everyday lives. In other words, the investment pays off. And the dividends in customer engagement are serious.

Switching to the Switch

To unpack all of this, Lewis and Jo partnered with a large US video game publisher to analyze player-level behavioral data for one of its major titles in the Multiplayer Online Battle Arena, or MOBA, genre. Players form teams and compete to destroy opposing teams’ bases, selecting a character from a set of 100+ options. Revenue for the publisher comes from a “freemium” business model: users can make voluntary purchases to unlock new characters or buy cosmetic enhancements. These purchases are geared toward enhancing the gaming experience but don’t affect competitive outcomes, making them a critical measure of engagement.

In 2019, the game was released for the Nintendo Switch, which can be docked in home consoles but is most commonly used as a mobile, hand-held device. PC players were given the option to download this new version and continue gameplay seamlessly using their existing accounts.

Analyzing player behavior before and after the adoption of the new Switch platform, Lewis and Jo were able to zoom in on some critical measures of user engagement, including game usage (the total number of matches played), in-game spending (what, when and how much players spent), and player inactivity, or churn.
“We were able to really get into player behavior over time, and what happens when you introduce the Switch option and remove the constraints of having to play in one place, the home or gaming PC,” says Lewis. “What happens when you make it possible for players to access the game they love while they’re commuting or on their lunch break?”

Plenty, it turns out.

Mobile access: gameplay, spending and churn

Crunching the data, Lewis and Jo find that mobile access dramatically increases gameplay. Players who adopted the Switch version played approximately 31% more games than before, an uptick that underscores how flexibility gains translate into new opportunities to play and engage.

And that’s not all. Lewis and Jo also find that gameplay becomes less concentrated within narrow windows (after school or work, say) and is now more spread out across the day, the result of the “ubiquity effect,” says Lewis.

“Take away the constraints of having to be in a fixed location and you see players adding additional play sessions. Interestingly though, we don’t find any adverse effect on PC gaming. Players are simply playing more, and playing longer, rather than replacing PC time.”

Then there’s in-game purchasing. MOBA-type games typically give players the option to voluntarily buy modifications for characters, known as “skins.” These skins are cosmetic enhancements: new armor, costumes, skill animations or effects. Crucially, these kinds of purchases don’t advance players to new levels of success in the game. Instead, they are used for personalization: to demonstrate status or to celebrate an in-game event.

Lewis and Jo find that mobile adopters make more frequent in-game purchases. While the overall total doesn’t increase materially, these players are spending small amounts more often, almost 7% more frequently than before. This makes intuitive sense, says Lewis. If players are logging in more often, they have more opportunities to feel inspired to spend on skins.
But there’s another factor that may be at work.

“With this kind of in-game purchasing, it’s likely that a lot of it is about credibility. When you buy a skin or a character pack, it’s like you have more aura within the game; you want to signal something to other players and let yourself be known. And this is more than just monetary, it’s about a deeper kind of engagement,” says Lewis. “It’s possible that as mobile access makes the game more of a frequent companion, as the rate of play increases, there’s this effect that players fall deeper into the community; their engagement deepens even more.”

Interestingly, the shift to mobile access had the most significant impact precisely on those players whose pre-Switch in-game purchasing was lowest. These users, who were arguably most likely to disengage and drift away from the game, became significantly more active once the hand-held option became available.

“If you have players spending less and less inside the game, the intuition is that these are the customers you are most at risk of losing,” says Lewis. “Bringing in the Switch has seen these customers, those more prone to churn, actively reengage with the game, maybe because they have greater propensity for the mobile version.”

Either way, this should be a particularly interesting finding for marketers, he adds; retaining existing users is typically cheaper than attracting new ones.

“The evidence suggests that mobile access can serve not only as a growth strategy, but also a defensive one if it helps keep marginal users engaged; those who might otherwise have detached from the product altogether.”

Help Them Switch

So far, so encouraging. There is one potential downside to porting a game or online product to a new channel, however, and that is usability. Lewis and Jo find that players who switched between platforms experience a slight, initial decline in in-game performance, likely because of differences in the control systems between devices.
Players who’ve been using keyboard and mouse controls may need time to adapt to hand-held controllers. To mitigate this, Lewis and Jo suggest that producers could offer tutorials or introductory gameplay modes that accelerate the learning curve as users adjust to the new interface. In most cases, usability should be factored in as an additional, hidden cost when developers and organizations are contemplating investing in more online customer touchpoints.

“Expanding your online channels will always have some cost. Taking a game from one platform and porting it to another one isn’t free, so you will want to anticipate the hurdles, even as you weigh up the clear benefits,” says Lewis. “The key is to make sure you protect your users. With things like video games, you want to think about how to guide or upskill your players, maybe have them play bots at first to ramp up their capabilities. Whenever you create a new channel that has a different operating system from the user’s perspective, you’re probably going to want to provide some aid to your fan community.”

The benefits of omni-channel access should always be weighed against the costs involved, counsels Lewis. Even so, today’s competitive pressures (the seemingly inexorable march of technological innovation and evolving user expectations) are likely to make platform expansion unavoidable for most online businesses. In the world of video gaming, as major franchises release new products across multiple platforms, and player preferences become more sophisticated, companies may simply have to adopt similar strategies to remain competitive.

“As everyone else invests in the same new technologies, you almost have to do the same, just as a matter of doing business,” says Lewis. “If you are launching a video game, you’ve got to compete with whatever Call of Duty or Grand Theft Auto are doing. You can’t just tell your players they can only engage on one platform.
The competition is continuously raising the stakes just in terms of the bare minimum.”

Building Fandom: the Connective Cultural Tissue

More broadly, Lewis and Jo’s findings speak to how human beings form communities of shared passion around business entities and, perhaps more compellingly, around cultural phenomena: video games, for sure, but also sports teams, music, films, comic books, fashion, and more. Understanding the mechanisms that drive and deepen engagement sheds more light on what Lewis calls the “connective cultural tissue of fandom”: the powerful social bonds, camaraderie, and shared identity that connect people to cultural entities and to each other.

Fandom, he argues, is the “key to our world.” Understanding fan behavior is critical to understanding how it is that games, brands, sporting teams, or politics forge communities built on shared passion.

“Whatever your organization or business is, you are going to be interested in driving passion. You want people to engage and love what you do. What we’re looking at in this study is a building block towards understanding how cultural entities fit into consumers’ lives, and how eliminating barriers helps to expand communities and drive relationships, extending reach and engagement by weaving cultural experiences more deeply into everyday life.”

The real challenge in front of organizations, be they video game producers or online retailers, says Lewis, is to give their product the kind of “cultural meaning” that creates fans, and not just users.

“When you think about the behavior of fans, the level of passion and engagement that exists around cultural phenomena, whatever they are, from video games to FIFA, the English Football League to the Super Bowl, Taylor Swift to the Republican Party, that’s where you see the passion that really drives the world. And that, to me, is critical in understanding how business works, how societies function, and how our world evolves.”

The Biggest Study Yet on School Cellphone Bans Shows Results Aren’t So Simple

As more schools move to restrict or completely ban smartphones in classrooms, the largest study ever conducted on school cellphone bans is challenging assumptions about what these policies actually achieve.

The new U.S. study, involving roughly 4,600 schools and researchers from institutions including Stanford, Duke, the University of Michigan, and the University of Pennsylvania, found that strict cellphone bans dramatically reduced phone use during the school day. In some schools, classroom phone use dropped from 61 percent to just 13 percent. It's a popular topic, and media coverage of the results has been extensive.

But the findings became more complicated from there. Researchers found little immediate evidence that phone bans significantly improved test scores, attendance, classroom attention, or bullying rates. Some schools even saw short-term increases in student discipline issues and declines in student well-being immediately after bans were introduced.

Still, the study suggested that longer-term outcomes may improve as students adjust and schools refine enforcement strategies. Teachers consistently reported fewer classroom distractions and stronger learning environments.

Mizuko Ito is a cultural anthropologist of technology use, focusing on children and youth's changing relationships to media and communications. She recently completed a research project supported by the MacArthur Foundation, a three-year ethnographic study of kid-initiated and peer-based forms of engagement with new media.

The findings arrive as governments across North America continue expanding school cellphone restrictions amid growing concerns about distraction, screen addiction, anxiety, and the impact of social media on youth mental health.
The study highlights a growing debate among educators, parents, and researchers: while limiting phone access may reduce distractions, the relationship between young people, technology, mental health, and learning is far more complex than simply removing devices from classrooms.

TCU Chemistry Researcher Named a Big 12 Faculty of the Year

Kayla Green has built an internationally recognized research program while mentoring the next generation of scientists at Texas Christian University, and her efforts are getting noticed. The chemistry professor and assistant dean of undergraduate affairs at the Louise Dilworth Davis College of Science & Engineering represents TCU among this year’s Big 12 Faculty of the Year honorees. The Big 12 Faculty of the Year Award honors outstanding faculty who excel in innovation and research at each of the athletic conference’s 16 universities. Honorees represent and reflect the best attributes that make a Big 12 college campus a bastion for learning and growth. “In my view, Professor Green exemplifies the fact that student success cannot happen without research, and world-leading research cannot happen without authentic, student-centered experiences,” wrote a nominator when Green was named the 2025 winner of the Chancellor’s Award for Distinguished Achievement as a Creative Teacher and Scholar. “Professor Green has maintained a vibrant, externally funded research program throughout the past 15 years, a distinction shared by very few TCU faculty.” Green was chosen in part for her international reputation in the field of inorganic chemistry as applied to neurodegenerative diseases and catalysis, as well as her leadership in a growing research program that has brought in more than $2.5 million in external support. This includes work with Ben Janesko, professor and chair of chemistry and biochemistry, and biology professors Giri Akkaraju and Michael Chumley on a grant from the National Institutes of Health. Green’s collaborative work with students highlights her ability to weave together research and mentorship. “Dr. Green’s vision and drive have strengthened the foundation of our college,” said T. Dwayne McCay, interim dean of Davis College. 
“Her ability to inspire students and colleagues alike reflects the kind of leadership that propels our mission forward.” One of her most impactful initiatives is Chemistry Boot Camp, a program she developed with colleagues Janesko and Heidi Conrad to help incoming students build confidence before their first chemistry class. The Big 12 Faculty of the Year Award is intended to showcase the diversity of research breakthroughs and educational opportunities afforded to students attending Big 12 institutions and helps attract future students. This year’s award recipients stretch across a vast array of departments. “We are constantly looking for ways to highlight how Big 12 faculty continue to educate and inspire the next generation of leaders,” Jenn Hunter, Big 12 chief impact officer said. “From the arts and filmmaking to business and engineering, this year’s cohort showcases the vast opportunities available to students pursuing an education on Big 12 campuses.” Faculty members were nominated by their institutions in conjunction with conference faculty athletic representatives, provosts and other university leaders. “I’m very honored to represent TCU as a Big 12 Faculty of the Year,” Green said. “I hope that I am not expected to exhibit any athletic skill sets but am happy to cheer on the Frogs in all they do in our classrooms and competitions! Congratulations to the honorees from across our great conference. TCU has the best faculty, and I am happy to represent them in this capacity.”

How the Class of 2026 can keep resumes out of the digital black hole

Students set to graduate this May are entering a job market where the rules of engagement are being rewritten in real time. AI is both friend and foe, and ghosting has become the norm. University of Delaware career expert Jill Panté shares how college students can navigate these challenges in a rapidly shifting economy.

Panté, director of the Lerner Career Services Center at UD, can apply her expertise to the following:

The AI recruitment gap
• How to prevent resumes from falling into the "digital black hole" of automated applicant tracking systems.
• Current recruitment in 2026 is heavily filtered by AI. If a resume doesn't mirror the language of the job description, a human might never even see it.
• In 2026, AI is the gatekeeper. Students who aren’t using AI for assistance are working twice as hard for half the results. However, the goal is to use it as a co-pilot, not an autopilot.

Beat the bots (tailor your content)
• Use tools like Resume Worded or generative AI tools like Microsoft Copilot or Gemini to see how resumes stack up against specific job postings.
• It is better to send five highly tailored, thoughtful applications than 50 generic ones that get auto-rejected by an algorithm.
• Use AI to run a mock interview based on the job description and company.

The "hidden" job market
• If a "job search" consists solely of clicking "Easy Apply" on LinkedIn for six hours a day, it’s not searching; it’s just doom-scrolling with a resume. Roughly 80% of your time should be spent talking to humans. The other 20% should be spent on applications and research.
• Find the recruiter or a department head on LinkedIn. Send a brief (2-3 sentence) note reiterating your interest.
• Leverage alumni networks through LinkedIn.

Narrative branding
• Especially for Gen Z: Hiring managers don't just want to know what you did; they want to know the impact you made.
• Instead of saying "Responsible for social media," say "Increased engagement by 40% over 3 months by implementing a new video strategy."
• Always lead with results (LinkedIn, resume, interviews) to showcase the value you bring.

Workforce anxiety
• Managing the mental toll of the modern, high-speed job search and the professional "ghosting" epidemic.
• Establish a personal "Board of Directors" to provide a balance of support, accountability and feedback.
• Maintain momentum by volunteering, attending local networking events and learning new skills on platforms like LinkedIn Learning and Coursera.

To reach Jill Panté directly and arrange an interview, visit her profile and click on the “contact” button.

New AI tool matches students with high-impact internships

Finding the right internship can be an important step for students, but it’s not always clear which opportunities will lead to the strongest growth. To help solve that problem, University of Florida researchers have developed an AI-powered tool that helps students identify internships most likely to accelerate their technical and professional development. Unlike traditional recommendation engines, Pro-CaRE not only predicts which opportunities will lead to stronger outcomes, it also explains why each suggestion is a good fit. In testing data collected from the students, Pro-CaRE’s predictions proved highly accurate, accounting for more than 72% of the differences in learning gains among participants. While the pilot is being tested in engineering, the tool could be adopted for other disciplines. “Internships are one of the most critical parts of an engineering education, but students often struggle to know which experiences will actually help them grow,” said Jinnie Shin, assistant professor of research and evaluation methodology in the UF College of Education. “What makes Pro-CaRE unique is that it doesn’t just offer a list of options. It provides personalized recommendations backed by data and it tells students clearly why an opportunity is a good match for them.” Pro-CaRE creates matches by analyzing each student’s coursework, major, background and self-reported interest, confidence and self-efficacy in engineering skills. It then compares that profile with a carefully chosen set of similar peers to refine suggestions. The result is more precise guidance that adapts to students at different stages of their degree programs. “Students shouldn’t have to guess or hope that an internship will be worthwhile,” Shin said. 
“With Pro-CaRE, they can approach opportunities knowing they’re backed by evidence, whether the role is onsite, hybrid or remote and whether it’s at a startup or a Fortune 500 company.” The system is designed to work across a wide range of companies and contexts, giving students flexibility while ensuring their choices align with their personal and professional goals. Each recommendation comes with a clear “why this?” explanation, so students can make confident decisions and discuss options more effectively with advisors. Pro-CaRE was developed by a cross-disciplinary UF team combining expertise in education and engineering. Alongside Shin, the project’s co-principal investigators include Kent Crippen in the College of Education and Bruce Carroll in the Herbert Wertheim College of Engineering. The team is exploring external funding opportunities to expand the usage and test the efficacy on a larger scale. “Ultimately, our goal is to empower students to invest their time in experiences that will have the greatest impact,” Shin said. “Pro-CaRE bridges the gap between what students hope to gain and what internships can truly deliver.”

Using AI tools empowers and burdens users in online Q&A communities

Whether you’ve searched for cooking tips on Reddit, troubleshot tech problems on community forums or asked questions on platforms like Quora, you’ve benefited from online help communities. These digital spaces rely on people across the world to contribute their knowledge for free, and have become an essential tool for solving problems and learning new skills. New research reveals that generative artificial intelligence tools like ChatGPT are creating a double-edged effect on users in these communities, simultaneously making them more helpful while potentially overwhelming them to the point of decreasing their responses. “On the positive side, AI helps users learn to write more organized and readable answers, leading to a noticeable increase in the number of responses,” explained Liangfei Qiu, Ph.D., study coauthor and PricewaterhouseCoopers Professor at the University of Florida Warrington College of Business. “However, when users rely too heavily on AI, the mental effort required to process and refine AI outputs can actually reduce participation. In other words, AI both empowers and burdens contributors: it enables more engagement and better readability, but too much reliance can slow people down.” The study examined Stack Overflow, one of the world’s largest question-and-answer coding platforms for computer programmers, to investigate the impact of generative AI on both the quality and quantity of user contributions. Qiu and his coauthor Guohou Shan of Northeastern University’s D’Amore-McKim School of Business measured the impact of AI on users’ number of answers generated per day, answer length and readability. Specifically, they found that users who used AI tools to generate their responses contributed almost 17% more answers per day compared to those who didn’t use AI. The answers generated with AI were both shorter by about 23% and easier to read. However, when people relied too heavily on AI tools, their participation decreased.
Qiu and Shan noted that the additional cognitive burden associated with heavier AI usage negatively affected a user’s answer quality. For online help communities grappling with AI policies, this research provides valuable insight into how these policies can be updated in the current AI environment. While some communities, like Stack Overflow, have banned AI tools, this research suggests that a more nuanced approach could be a better solution. Instead of banning AI entirely, the researchers suggest striking a balance between allowing AI usage and promoting responsible and moderated use. This approach, they argue, would enable users to benefit from efficiency and learning opportunities, while not compromising quality content and user cognition. “For platform leaders, the takeaway is clear: AI can boost participation if thoughtfully integrated, but its cognitive demands must be managed to sustain long-term user contributions,” Qiu said.

UF develops breakthrough magnet that could transform metal production

Imagine if producing steel parts for agricultural equipment or even aluminum soda cans required only a fraction of the energy it does today. A University of Florida-led innovation may soon make this a reality. In a groundbreaking collaboration backed by a nearly $11 million federal grant, UF researchers have developed a first-of-its-kind superconducting magnet that could advance metal production and position the United States as a global leader in alloy production. Funded by the U.S. Department of Energy’s Advanced Manufacturing Office, the project uses Induction-Coupled Thermomagnetic Processing, or ITMP, an advanced manufacturing method that integrates magnetic fields with high-temperature thermal processing. The national consortium of industry, academic and national laboratory partners is now led by Michael Tonks, Ph.D., UF’s interim chair of Materials Science and Engineering, who succeeded Michele Manuel, Ph.D., the project’s long-time leader. “This revolutionary technology has the potential to substantially reduce the cost and energy use of heat treatments in the steel industry, and we are excited to help pave the way for its adoption in industry,” said Tonks. It’s not just any piece of equipment; it’s a custom-built superconducting magnet with a unique ability to combine magnetic fields with high-temperature thermal processing. In partnership with the UF physics department, Oak Ridge National Laboratory, or ORNL, and six companies interested in the technology, the magnet and cylinder induction furnace now sit atop a 6-foot-high platform.
The prototype, which cost more than $6 million to purchase and install, is capable of processing steel samples up to 5 inches in diameter, making it a rare asset for academic research. Yang Yang, Ph.D., UF materials science research faculty member, estimated ITMP could reduce steel processing time by as much as 80 percent, cutting energy use and operational costs. “Thermomagnetic processing changes a material’s phase stability and kinetic properties, accelerating carbon diffusion in steel,” said Yang. “Traditional furnaces cannot achieve these advanced material properties.” The system works by modifying the driving forces for important steel phase changes, which shortens heat treatment. “What normally takes eight hours can be done in just a few minutes,” Yang explained. “The magnetic field acts as an external driving force to make atoms diffuse faster.” Unlike conventional energy sources like electricity or natural gas, the ITMP process uses volumetric induction heating along with high-static magnetic fields to lower energy consumption. The project is still in a pilot phase and requires additional research and testing. At ORNL, researchers emphasized the rarity of UF’s prototype, citing its unprecedented combination of magnetic field strength and ability to process large samples and components. “This could significantly advance U.S. manufacturing and process efficiency for heat treatment of materials such as metal alloys of steel or aluminum,” said Michael Kesler, Ph.D., ORNL research scientist and lead collaborator. Kesler noted successful implementation of this technology could contribute to a reliable energy grid and more efficient industrial electrification. UF researchers contend it could also reduce carbon emissions, supporting cleaner, more sustainable manufacturing processes. The tall, two-level magnet now resides in the Powell Family Structures and Materials Laboratory on UF's East Campus.
MSE plans to officially unveil it in December, inviting representatives from national labs, industry and academia. While engineering students will have future opportunities to use it for research and experiential learning, UF researchers are optimistic about potential industry adoption in the next five to 10 years. The award is part of a $187 million DOE initiative to strengthen competitiveness in U.S. manufacturing. If successful, the innovation could redefine how the world shapes the materials of tomorrow.

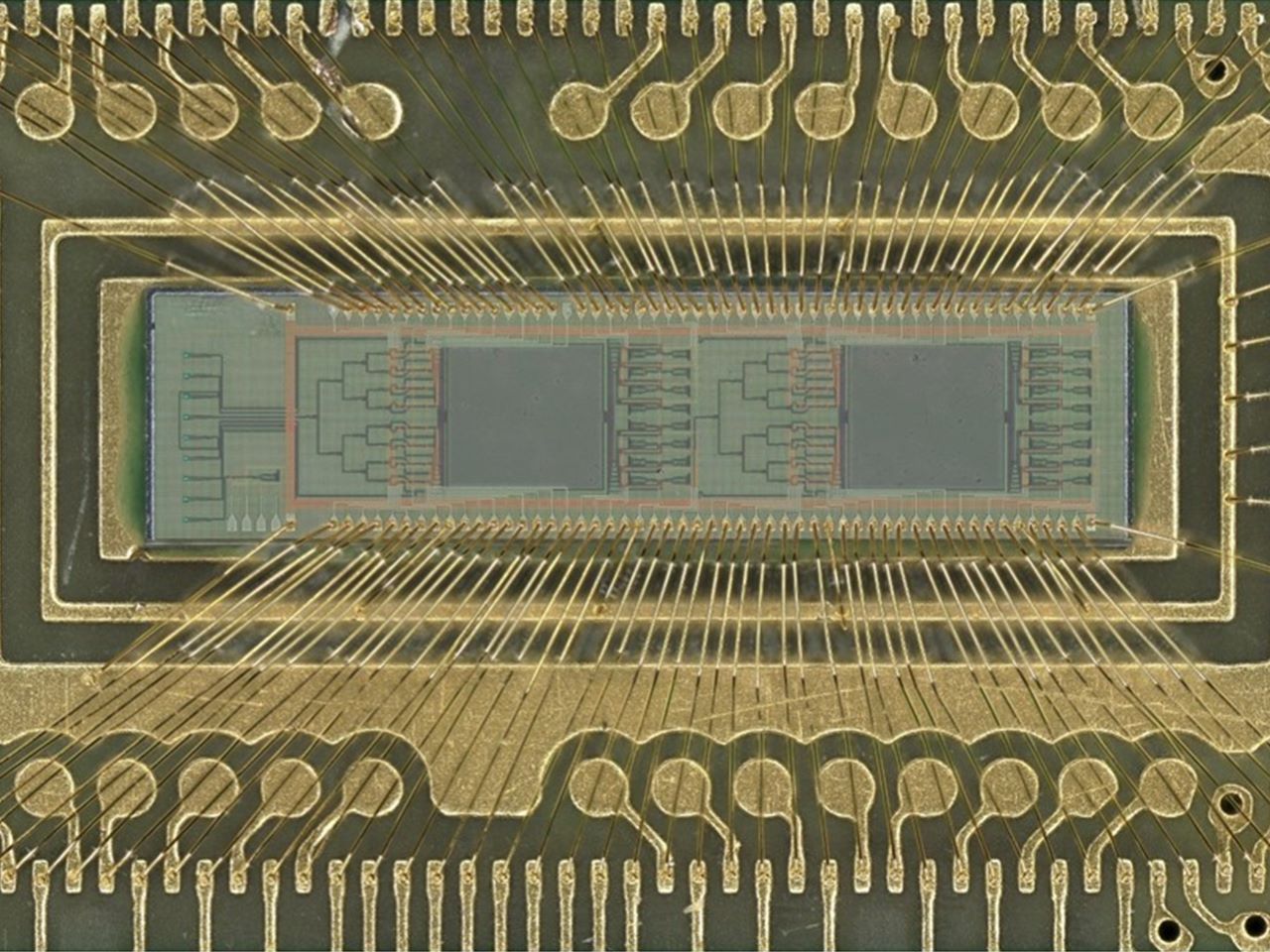

New light-based chip boosts power efficiency of AI tasks 100-fold

A team of engineers has developed a new kind of computer chip that uses light instead of electricity to perform one of the most power-intensive parts of artificial intelligence: image recognition and similar pattern-finding tasks. Using light dramatically cuts the power needed to perform these tasks, with efficiency 10 or even 100 times that of current chips performing the same calculations. Using this approach could help rein in the enormous demand for electricity that is straining power grids and enable higher performance AI models and systems. This machine learning task, called “convolution,” is at the heart of how AI systems process pictures, videos and even language. Convolution operations currently require large amounts of computing resources and time. These new chips, though, use lasers and microscopic lenses fabricated onto circuit boards to perform convolutions with far less power and at faster speeds. In tests, the new chip successfully classified handwritten digits with about 98% accuracy, on par with traditional chips. “Performing a key machine learning computation at near zero energy is a leap forward for future AI systems,” said study leader Volker J. Sorger, Ph.D., the Rhines Endowed Professor in Semiconductor Photonics at the University of Florida. “This is critical to keep scaling up AI capabilities in years to come.” “This is the first time anyone has put this type of optical computation on a chip and applied it to an AI neural network,” said Hangbo Yang, Ph.D., a research associate professor in Sorger’s group at UF and co-author of the study. Sorger’s team collaborated with researchers at UF’s Florida Semiconductor Institute, the University of California, Los Angeles and George Washington University on the study. The team published their findings, which were supported by the Office of Naval Research, Sept. 8 in the journal Advanced Photonics. The prototype chip uses two sets of miniature Fresnel lenses made with standard manufacturing processes.
These two-dimensional versions of the same lenses found in lighthouses are just a fraction of the width of a human hair. Machine learning data, such as from an image or other pattern-recognition tasks, are converted into laser light on-chip and passed through the lenses. The results are then converted back into a digital signal to complete the AI task. This lens-based convolution system is not only more computationally efficient, but it also reduces the computing time. Using light instead of electricity has other benefits, too. Sorger’s group designed a chip that could use different colored lasers to process multiple data streams in parallel. “We can have multiple wavelengths, or colors, of light passing through the lens at the same time,” Yang said. “That’s a key advantage of photonics.” Chip manufacturers, such as industry leader NVIDIA, already incorporate optical elements into other parts of their AI systems, which could make the addition of convolution lenses more seamless. “In the near future, chip-based optics will become a key part of every AI chip we use daily,” said Sorger, who is also deputy director for strategic initiatives at the Florida Semiconductor Institute. “And optical AI computing is next.”