Experts Matter. Find Yours.

Connect for media, speaking, professional opportunities & more.

Start changing your phone habits with Offline.now

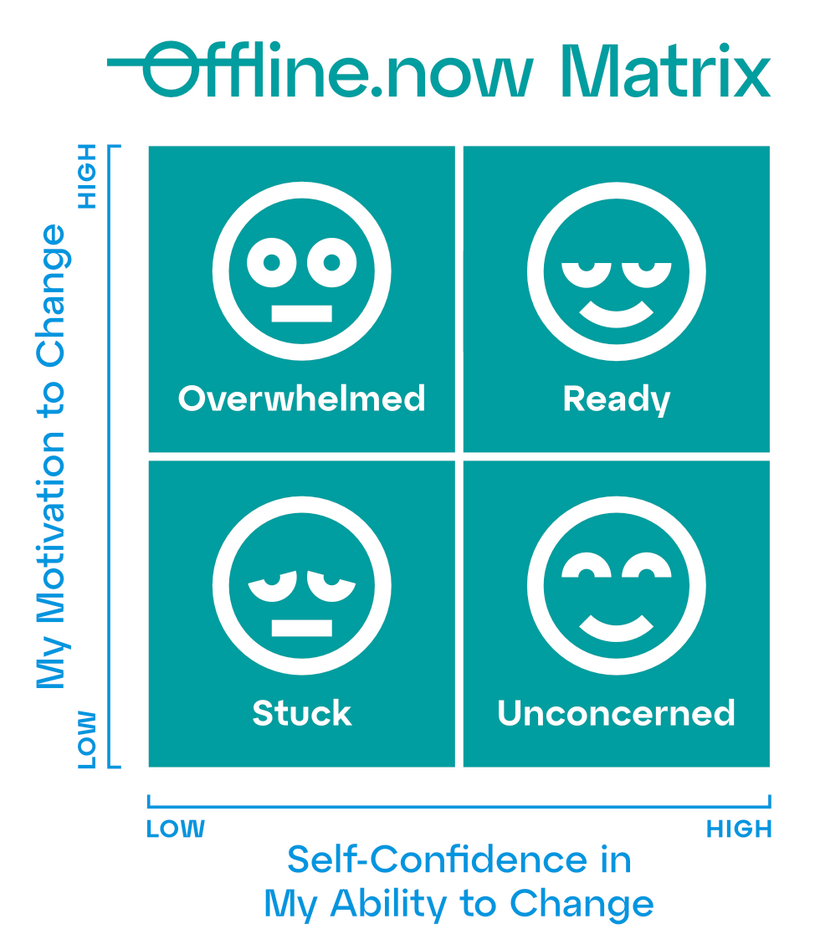

WHY THIS MATTERS

Changing your relationship with a phone is hard. We see it at home and at work, across generations. Shame and all-or-nothing fixes don’t last. Offline.now focuses on practical tools and plain-language guidance people can act on today.

WHAT OFFLINE.NOW OFFERS

- Homepage: take the 2-question quiz - clarifies where you are in your journey to change phone habits.
- Expert Directory - human support from therapists, coaches, counsellors, social workers, and specialists trained in everything from doomscrolling and nomophobia to online dating burnout and notification overload.
- Digital Balance Hub - quick guides and explainers you can act on today.

HOW THE OFFLINE.NOW MATRIX HELPS

Our intuitive Offline.now Matrix assessment tool presents four quadrants - Overwhelmed, Ready, Stuck, and Unconcerned - each offering practical real-world strategies to help people move towards their goals.

STORY ANGLES FOR JOURNALISTS

- The two-question start: a practical way to change phone habits
- Doomscrolling isn’t a willpower problem - match the fix to the person
- From screen-time shame to personal progress: small wins that stick
- Night routines that hold: what changes when people start in the right quadrant
- Parents, teens, and teams: a common language for digital balance

INTERVIEW AVAILABILITY

Eli Singer, Founder & CEO of Offline.now - pioneered early social strategy for Coca-Cola, Ford, and MoMA; published in Harvard Business Review; lead researcher on The Power of the 2×2 Matrix. See Eli’s profile for full bio and contact.

FOR PRACTITIONERS

Experts are invited to join the Offline.now directory.

First AI-powered Smart Care Home system to improve quality of residential care

- Partnership between Lee Mount Healthcare and Aston University will develop and integrate a bespoke AI system into a care home setting to elevate the quality of care for residents
- By automating administrative tasks and monitoring health metrics in real time, the smart system will support decision making and empower care workers to focus more on people
- The project will position Lee Mount Healthcare as a pioneer of AI in the care sector and open the door for more care homes to embrace technology.

Aston University is partnering with dementia care provider Lee Mount Healthcare to create the first ‘Smart Care Home’ system incorporating artificial intelligence.

The project will use machine learning to develop an intelligent system that can automate routine tasks and compliance reporting. It will also draw on multiple sources of resident data – including health metrics, care needs and personal preferences – to inform high-quality care decisions, create individualised care plans and provide easy access to updates for residents’ next of kin.

There are nearly 17,000 care homes in the UK looking after just under half a million residents, and these numbers are expected to rise in the next two decades. Over half of social care providers still retain manual and paper-based approaches to care management, offering significant opportunity to harness the benefits of AI to enhance efficiency and care quality.

The Smart Care Home system will allow for better care to be provided at lower cost, freeing up staff from administrative tasks so they can spend more time with residents.

Manjinder Boo Dhiman, director of Lee Mount Healthcare, said: “As a company, we’ve always focused on innovation and breaking barriers, and this KTP builds on many years of progress towards digitisation. We hope by taking the next step into AI, we’ll also help to improve the image of the care sector and overcome stereotypes, to show that we are forward thinking and can attract the best talent.”

Dr Roberto Alamino, lecturer in Applied AI & Robotics with the School of Computer Science and Digital Technologies at Aston University, said: “The challenges of this KTP are both technical and human in nature. For practical applications of machine learning, it’s important to establish a common language between us as researchers and the users of the technology we are developing. We need to fully understand the problems they face so we can find feasible, practical solutions.”

For specialist AI expertise to develop the smart system, Lee Mount Healthcare (LMH) is partnering with the Aston Centre for Artificial Intelligence Research and Application (ACAIRA) at Aston University, of which Dr Alamino is a member. ACAIRA is recognised internationally for high-quality research and teaching in computer science and artificial intelligence (AI) and is part of the College of Engineering and Physical Sciences. The Centre’s aim is to develop AI-based solutions to address critical social, health, and environmental challenges, delivering transformational change with industry partners at regional, national and international levels.

The project is a Knowledge Transfer Partnership (KTP). Funded by Innovate UK, KTPs are collaborations between a business, a university and a highly qualified research associate. The UK-wide programme helps businesses to improve their competitiveness and productivity through the better use of knowledge, technology and skills. Aston University is a sector-leading KTP provider, ranked first for project quality, and joint first for the volume of active projects.

For more information on the KTP visit the webpage.

Immigrants in the United States earn 10.6% less than similarly educated U.S.-born workers, largely because they are concentrated in lower-paying industries, occupations and companies, according to a major new study published July 16 in Nature, co-authored by a University of Massachusetts Amherst sociologist who studies equal opportunity in employment.

The research—one of the most comprehensive global comparisons of immigrant labor market integration to date—analyzes linked employer-employee data from over 13 million people across nine advanced economies in Europe and North America. The U.S. results, drawn from a unique combination of Census Bureau, earnings and employer data, reveal that only about one-third of the wage gap is due to pay inequality within the same job and company. Instead, the majority stems from structural barriers that limit immigrants’ access to better-paying workplaces.

“These findings are important because they show that most of the immigrant wage gap isn’t about being paid less for the same work—it’s about not getting into the highest-paying jobs and firms in the first place,” says Donald Tomaskovic-Devey, professor of sociology and founding director of the Center for Employment Equity at UMass Amherst.

Key U.S. Findings

- First-generation immigrants with legal status in the U.S. earn 10.6% less than comparable native-born workers.
- About a third of that gap (3.4 percentage points) is attributable to unequal pay for the same job at the same employer.
- No data was available on second-generation immigrants in the U.S., but other countries showed persistent but smaller gaps into the next generation.

The study suggests that efforts to close immigrant wage gaps should focus on increasing immigrants’ access to better jobs and firms.
Promising approaches include:
- Language and skills training
- Recognition of foreign credentials
- Access to professional networks
- Employer anti-bias interventions

“Improving job access is essential,” says co-author Andrew Penner, professor of sociology at the University of California, Irvine. “This means addressing the barriers that keep immigrants out of the highest-paying firms and occupations.”

As of 2023, immigrants constituted approximately 14% of the U.S. population, totaling over 47 million people. There are approximately 1 million new long-term permanent residents annually. U.S. immigration policy encompasses diverse pathways, including family-based migration, employment-based visas, the Diversity Visa Lottery and humanitarian protection. Immigration has been a defining feature of the U.S. population since its founding, with distinct waves shaped by economic needs, political developments and global conflicts.

“For almost 250 years, we have been a nation of immigrants, and this pay gap indicates that we can do more as a country to help people following the paths of our forebears realize the American dream,” Tomaskovic-Devey adds.

Global Comparison

The study includes 13.5 million individuals in nine immigrant-receiving countries: the U.S., Canada, France, Germany, Denmark, the Netherlands, Norway, Spain and Sweden. The U.S. had one of the smallest pay gaps (10.6%) among the nine countries studied. By contrast, Canada showed a 27.5% gap and Spain a 29.3% gap. The most favorable outcomes for immigrants were in Sweden (7% gap) and Denmark (9.2%).

The authors identify two main sources of the immigrant-native pay gap:
- Sorting: Immigrants are more likely to work in lower-paying industries, occupations and firms.
- Within-job inequality: In all countries immigrants are paid less than natives doing the same job for the same employer, but these gaps are relatively small.
Across the nine countries, three-quarters of the 17.9% average wage gap for immigrants was due to sorting; just one-quarter stemmed from unequal pay within jobs. In the U.S., this pattern was consistent: structural job access—not wage discrimination—was the dominant force. The study also exposes persistent disadvantages for immigrants from certain world regions, including Sub-Saharan Africa, Latin America and the Middle East. Across all countries, immigrants from these regions faced larger wage gaps than immigrants from Western or Asian countries. The international research is the latest in a series of high-profile publications from a team spanning over a dozen countries in North America and Europe that has been investigating the dynamics of workplace earnings distributions for the last decade.
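For readers who want to check the arithmetic behind the U.S. figures, here is a minimal sketch. It assumes the sorting component is simply the total gap minus the within-job gap. That is a simplification for illustration, not the study's estimation method, and the `decompose` helper is hypothetical.

```python
# Figures quoted in the article (wage gap relative to native-born workers, in percent).
US_TOTAL_GAP = 10.6       # overall U.S. immigrant wage gap
US_WITHIN_JOB_GAP = 3.4   # gap for the same job at the same employer


def decompose(total_gap: float, within_job_gap: float) -> dict[str, float]:
    """Split a wage gap into within-job and sorting parts.

    Hypothetical helper: treats sorting as the remainder of the total gap,
    which is an assumption made here for illustration only.
    """
    sorting_gap = total_gap - within_job_gap
    return {
        "sorting_gap": round(sorting_gap, 1),
        "within_job_share": round(within_job_gap / total_gap, 2),
        "sorting_share": round(sorting_gap / total_gap, 2),
    }


us = decompose(US_TOTAL_GAP, US_WITHIN_JOB_GAP)
print(us)
```

Under that assumption, roughly a third of the U.S. gap arises within the same job and employer, and the remaining two-thirds reflects where immigrants work.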

Study reveals how race-evasive coverage of student loans fuels policy failures

For years, news coverage of the student debt crisis has left out a crucial part of the story: race. A new study in Educational Evaluation and Policy Analysis analyzed 15 years of student loan reporting in eight major newspapers to reveal that most media outlets avoided mentioning race until just a few years ago (even though disparities existed for all 15 years of the study). While one might assume this shift came after the racial justice uprisings of 2020, the data shows that the turn toward more explicit racial language actually began around 2018.

Dominique Baker, associate professor in the College of Education and Human Development’s School of Education and the Joseph R. Biden, Jr. School of Public Policy and Administration at the University of Delaware, was the lead researcher.

“Even when newspapers did eventually address race, they focused primarily on documenting the size of disparities instead of talking about the structural reasons underlying them, like racism,” Baker said. “Other research has shown that when the news media solely focuses on the disparities and not the structural issues, readers are more likely to punish people of color instead of supporting solutions that could help them.”

Why does this information matter? Because how the media talks about policy shapes how the public—and policymakers—see the problem. If coverage ignores the racial disparities in student loan burdens, it makes race-neutral, one-size-fits-all solutions seem more logical—even if they fail to address real inequities. It’s not just about adding a few words. It’s about changing the lens entirely.

Baker has appeared in dozens of national news outlets for her expertise. She is available for interviews on this paper and other topics surrounding higher education. Email mediarelations@udel.edu to contact her.

Reclaiming 'Spend': A Retirement Rebellion

June is Pride Month—a celebration of identity, resilience, and the powerful act of reclaiming. Over the years, LGBTQ+ communities have reclaimed words that once marginalized them. “Queer” used to be a slur. Now, it’s a proud badge of honor. Similarly, the Black community has transformed language once used to oppress into expressions of cultural pride and connection. So, here's a thought: What if retirees approached the word “spend” similarly? Yes, you read that right.

The Psychological Tug-of-War

This isn't just about numbers; it’s about narratives. Most retirees have spent their entire adult lives in accumulation mode: save, earn, invest, delay gratification, rinse, and repeat. But retirement flips that formula on its head, and most people weren’t provided with a “mental user guide” for the transition. Now, instead of saving, they’re expected to spend? Without a paycheck? It triggers everything from guilt to fear to a low-grade existential crisis.

The Challenge of Saving for an Extended Period

Let’s get serious for a moment. The data tells a troubling story:
- Canadians over 65 collectively hold $1.5 trillion in home equity (CMHC, 2023)
- The average retiree spends just $33,000 per year, despite often having far more resources (StatsCan, 2022)
- Nearly 70% of retirees express anxiety about running out of money—despite having significant savings (FCAC, 2022)

We’re talking about seniors who could afford dinner out, a trip to Tuscany, or finally buying that electric bike—and instead, they’re clipping coupons and debating the cost of almond milk. Why? Because spending still feels wrong.

I Know a Thing or Two About Reclaiming Words

As a proud member of the LGBTQ2+ community and a woman who has worked in the traditionally male-dominated world of finance, I’ve had a front-row seat to the power of language, both its ability to uplift and its tendency to wound.
There were many boardrooms where I was not only the only woman but also the only gay person, and often the oldest person in the room. I didn’t just have a seat at the table; I had to earn, protect, and sometimes fight to keep it. I’ve learned that words can be weapons, but they can also be armour—if you know how to use them.

Reflect on Your Boundaries

Take a moment. Have you ever felt prejudged, marginalized, or dismissed? Perhaps it was due to your gender, sexuality, accent, skin colour, culture, or age. It leaves a mark. One way to preserve your dignity is by building a mental toolkit in advance. Prepare a few lines, questions, or quiet comebacks you can use when someone crosses the line—whether they intend to or not.

Here are five strategies that helped me stand tall—even at five feet nothing:
1. Humour – A clever remark can defuse tension or highlight bias without confrontation.
2. Wit – A precisely timed comeback can silence a room more effectively than an argument.
3. Over-preparation – Know your stuff inside and out. Knowledge is power.
4. Grace under fire – Not everything deserves your energy. Rise above it when it matters.
5. Vulnerability – A simple “Ouch” or “Did you mean to hurt me?” can be quietly disarming—and deeply human.

Let’s Talk About Microaggressions

The term microaggression may sound small, but its effects are significant. These are the subtle, often unintentional slights: backhanded compliments, dismissive glances, and “jokes” that aren’t funny. They quietly chip away at your sense of belonging. Dr. Robin DiAngelo’s book White Fragility is a brilliant read on this topic. She explains how early socialization creates bias: “Good guys wear white hats. Bad guys wear black hats.” These unconscious associations become ingrained from an early age. Some people still say, “I’m not racist—I have a Black friend,” or “I’m not homophobic—my cousin is gay.” The truth? Knowing someone from a marginalized group doesn’t exempt you from unconscious bias.
It might explain the behaviour, but it doesn’t excuse it. And no, there is no such thing as reverse discrimination. Discrimination operates within systems of power and history. When someone points out a biased comment or unconscious microaggression, they’re not discriminating against you—they’re holding up a mirror. That sting you feel? It’s not oppression. It’s shame—and it’s warranted. It signals that your intentions clashed with your impact. And that’s not a failure; it’s an invitation to grow. Calling it “reverse discrimination” is just a way to dodge discomfort. But real progress comes when we sit with that discomfort and ask: Why did this land the way it did? What am I missing? Because the truth is, being uncomfortable doesn’t mean you’re being attacked. It often means you’re being invited into a deeper understanding—and that’s something worth showing up for.

Let’s Reclaim 'Spend'

What if we flipped the script? What if spending in retirement was viewed as a badge of honour? Spending on your grandkids’ education, your bucket list adventures or even a high-end patio chair should not come with any shame. You’ve earned this. You’ve planned for this. It’s time to reclaim it. Let’s make “spend” the new “thrive.” Let’s make super-saver syndrome a thing of the past.

Let the Parade Begin

Imagine it: a Seniors’ Spend Parade. Golden confetti. Wheelchairs with spoilers. Luxury walkers with cupholders and chrome rims. T-shirts that say:
- “Proud Spender. Zero Shame.”
- “I’m not broke—I’m retired and woke.”
- “My equity funds my gelato tour.”

Dreams Aren’t Just for the Young

What’s the point of spending decades building wealth if you never enjoy it? Reclaiming “spend” isn’t about being reckless—it’s about being intentional. So go ahead—book the trip. Upgrade the sofa. Take the wine tour. You’re not being irresponsible; you’re living the life you’ve earned. And if anyone questions it? Smile and say: “I’m reclaiming the word spend.
Care to join the parade?”

Sue

Don’t Retire…Rewire!

8 Guilt-Free Ways to Spend in Retirement

A checklist to help you spend proudly, wisely, and joyfully:
☐ Book the Trip – Travel isn’t a luxury; it’s a memory maker.
☐ Upgrade for Comfort – That recliner? That mattress? Worth every penny.
☐ Gift a Down Payment – Help your kids become homeowners.
☐ Fund a Grandchild’s Dream – Tuition, ballet, a first car—you’re building a legacy.
☐ Outsource the Chores – Pay for help so you can reclaim your time.
☐ Invest in Wellness – Healthy food, massage therapy, yoga. Health is wealth.
☐ Pursue a Passion – From pottery to piloting drones, go for it.
☐ Celebrate Milestones – Anniversaries, birthdays… or Tuesdays. Celebrate always!

Want More?

If this speaks to you, visit www.retirewithequity.ca and explore more:
- From Saver to Spender: Navigating the Retirement Mindset
- Money vs. Memories in Retirement
- Fear Of Running Out (FORO)

Each piece explores the emotional and psychological aspects of retirement—the parts no one talks about at your pension seminar.

Hidden in plain sight: UD researcher exposes gaps in college application process

In a groundbreaking study in the American Educational Research Journal, University of Delaware Associate Professor Dominique Baker and colleagues have unveiled significant disparities in how students report extracurricular activities on college applications, highlighting inequities in the admissions process.

Analyzing over 6 million Common App submissions using natural language processing, the researchers discovered that white, Asian, wealthier, and private-school students tend to list more activities, leadership roles, and unique accomplishments compared to their peers from underrepresented racial, ethnic, and socioeconomic backgrounds. However, when underrepresented minority students did report leadership roles, they did so at rates comparable to their white and Asian American counterparts.

“All students do not have the ability to sign up for eight, 10 or 15 extracurricular activities,” Baker noted, emphasizing that many students must work to support their families, limiting their participation in extracurriculars.

To address these disparities, the researchers recommend reducing the number of activities students can list on applications—suggesting a cap of four or five—to encourage a focus on the quality and intensity of involvement rather than quantity. This approach aims to level the playing field, ensuring that students with limited opportunities can still showcase their potential effectively.

Baker and her colleagues draw attention to Lafayette College, which has recently reduced the number of extracurricular activities it reviews from 10 to six. While data on the impact of such changes is still forthcoming, the move aligns with the researchers’ recommendations and signals a shift toward fairer admissions practices. Other institutions are beginning to take note.
If you wish to delve deeper into this research and explore its implications for college admissions, Baker is available for interviews and has been featured in a number of national outlets, including The Wall Street Journal, ABC News, and Inside Higher Ed. Her insights could provide valuable perspectives on creating a fairer admissions landscape.

Google's New AI Overviews Isn’t Just Another Search Update

Google's recent rollout of AI Overviews (previously called “Search Generative Experience”) at its annual developer conference is being hailed as the biggest transformation in search since the company was founded. This isn’t a side project for Google — it fundamentally alters how content gets discovered, consumed, and valued online. If you're in marketing, PR, content strategy, or run a business that depends on online visibility, this requires a fundamental shift in your thinking.

What Is AI Overviews?

Instead of showing users a familiar list of blue links and snippets, Google now uses artificial intelligence to generate a summary answer at the very top of many search results pages. This AI-generated box pulls together content from across the web and tries to answer the user’s question instantly—without requiring them to click through to individual websites.

Here’s what that looks like: You type in a question like “What are the best strategies for handling a media crisis?” Instead of just links, you see a big AI-generated paragraph with summarized strategies, possibly quoting or linking to 3-5 sources—some of which might not even be visible unless you scroll or expand the summary. Welcome to the new digital gatekeeper.

Elizabeth Reid, VP of Search at Google, states: “Our new Gemini model customized for Google Search brings together Gemini’s advanced capabilities — including multi-step reasoning, planning and multimodality — with our best-in-class Search systems.”

Let's break down this technobabble. Think of Gemini as the brain behind Google’s search engine that’s now:

Even More Focused on User Intent

For years, SEO strategies were built around guessing and gaming the right keywords: “What exact phrase are people typing into Google?” That approach led to over-optimized content — pages stuffed with phrases like “best expert speaker Boston cleantech” — written more for algorithms than actual humans.
But with Google Gemini and other AI models now interpreting search queries like a smart research assistant, the game has changed entirely. Google is no longer just matching phrases — it’s interpreting what the user wants to do and why they’re asking.

Here’s What That Looks Like:

Let’s say someone searches: “How do I find a reputable expert on fusion energy who can speak at our cleantech summit?” In the old system, pages that mentioned “renewable energy,” “expert,” and “speaker” might rank — regardless of whether they actually helped the user solve their problem. Now Google more intuitively understands:
• The user wants to evaluate credibility
• The user is planning an event
• The user needs someone available to speak
• The context is likely professional or academic

If your page simply has the right keywords but doesn’t send the right signals — you’re invisible.

Able to Plan Ahead

Google and AI search platforms now go beyond just grabbing facts. They string together pieces of information to answer more complex, multi-step queries. In traditional search, users ask one simple question at a time. But with multi-step queries, users are increasingly expecting one search to handle a series of related questions or tasks all at once — and now Google can actually follow along and reason through those steps.

So imagine you’re planning a conference. A traditional search might look like: “Best conference venues in Boston” But a multi-step query might be: “Find a conference venue in Boston with breakout rooms, check availability in October, and suggest nearby hotels with group rates.” This used to require three or four different searches, and you’d piece it together yourself. Now Google can handle that entire chain of related tasks, plan the steps behind the scenes, and return a highly curated answer — often pulling from multiple sources of structured and unstructured data.
Even Better at Understanding Context

Google now gets the difference between ‘a speaker at a conference’ and ‘a Bluetooth speaker’ — because it understands what you mean, not just what you type. In the past, Google would match keywords literally. If your page had the word “speaker,” it might rank for anything from event keynotes to audio gear. That’s why so many search results felt off or required extra digging. Now Google reads between the lines. It understands that “conference speaker” likely refers to a person who gives talks, possibly with credentials, experience, and a bio. And that “Bluetooth speaker” is a product someone might want to compare or buy.

Why this matters for marketers: If you’re relying on vague or generic content — or just “keyword-stuffing” — your pages will fall flat. Google is no longer fooled by superficial matches. It wants depth, clarity, and specificity.

Reads More Than Just Text

Google now processes images, videos, charts, infographics, and even audio — and uses that multimedia information to answer search queries more completely. This means that your content isn’t just being read like a document — it’s being watched, listened to, and interpreted as a human would.

For example:
• A chart showing rising enrollment in nursing programs might get picked up as supporting evidence for a story about healthcare education trends.
• A YouTube video of your CEO speaking at a conference might be indexed as proof of thought leadership.
• An infographic explaining how your service works could surface in an AI-generated summary — even if the keyword isn’t mentioned directly in text.

Ignoring multimedia formats? Then your competitors’ visual storytelling could be outperforming your plain content, because you're not giving Google the kind of layered, helpful content that Gemini is now designed to highlight.

Why This Matters

There's a big risk here. Marketers who ignore these developments are in danger of becoming invisible in search.
Your old SEO tricks won’t work. Your content won’t appear in AI summaries. Your organization won’t be discovered by journalists, customers, or partners who now rely on smarter search results to make decisions faster.

If you’re in communications, PR, media relations, or digital marketing, here’s the key message. You are no longer just fighting for links. You need to fight to be included in the Google AI summary itself at the top of search results; that's the new #1 goal. Why? Journalists can now find their answers before ever clicking on your beautifully written news page. Prospective students, donors, and customers will often just see the AI’s version of your content. Your brand’s visibility now hinges on being seen as “AI-quotable.” If your organization isn’t optimized for this new AI-driven landscape, you risk becoming invisible at the very moment people are searching for what you offer.

How You Can Take Action (and Why Your Role Is More Important Than Ever)

This isn’t just an IT or SEO problem. It’s a communications strategy opportunity—and you are central to the solution.

What You Can Do Now to Prepare for AI Overviews

1. Get Familiar with How AI “Reads” Your Content
AI Overviews pull content from websites that are structured clearly, written credibly, and explain things in simple language.
Action Items: Review your existing content: Is it jargon-heavy? Outdated? Lacking expert quotes or explanations? Then, it's time to clean house.

2. Collaborate with your SEO and Web Teams
Communicators and content creators now need to work hand-in-hand with technical teams.
Action Items: Check your pages to see if you are using proper schema markup. Are you creating topic pages that explain complex ideas in simple, scannable formats?

3. Showcase Human Expertise
AI values content backed by real people—especially experts with credentials.
Action Items: Make sure your expert profiles are up to date.
Make sure you continue to enhance them with posts, links to media coverage, short videos, images and infographics that highlight the voices behind your brand and make you stand out in search.

4. Don’t Just Publish—Package
AI favors content that it can easily digest and display such as summary paragraphs, FAQs, and bold headers that provide structure for search engines. This also makes your content more scannable and engaging to humans.
Action Items: Repurpose your best content into AI-friendly formats: think structured lists, how-tos, and definitions.

5. Monitor Your Presence in AI Overviews
Regularly search key topics related to your organization and see what shows up.
Action Items: Is your content featured? If not, whose is, and what are they doing differently?

A New Role for Communications: From Media Pitches to Machine-Readable Influence

This isn’t the end of communications as we know it—it’s an evolution. Your role now includes helping your organization communicate clearly to machines as well as to people. Think of it as “PR for the algorithm.” You’re not just managing narratives for the public—you’re shaping what AI systems say about you and your brand. That means:
• Ensuring your best ideas and experts are front and center online.
• Making complex information simple and quotable.
• Collaborating cross-functionally like never before.

Final Thought: AI Search Rewards the Prepared

Google’s new AI Overviews are here. They’re not a beta test. This is the future of search, and it’s already rolling out. If your institution, company, or nonprofit wants to be discovered, trusted, and quoted, you can no longer afford to ignore how AI interprets your online presence. Communications and media professionals are now at the front lines of discoverability. And the best way to lead is to act now, work collaboratively, and elevate your role in this new era of search.

Want to see how leading organizations are getting ahead in the age of AI search?
Discover how ExpertFile is helping corporations, universities, healthcare institutions and industry associations transform their knowledge into AI-optimized assets — boosting visibility, credibility, and media reach. Get your free download of our app at www.expertfile.com

Why Simultaneous Voting Makes for Good Decisions

How can organizations make robust decisions when time is short, and the stakes are high? It’s a conundrum not unfamiliar to the U.S. Food and Drug Administration. Back in 2021, the FDA found itself under tremendous pressure to decide on the approval of the experimental drug aducanumab, designed to slow the progress of Alzheimer’s disease—a debilitating and incurable condition that ranks among the top 10 causes of death in the United States.

Welcomed by the market as a game-changer on its release, aducanumab quickly ran into serious problems. A lack of data on clinical efficacy along with a slew of dangerous side effects meant physicians in their droves were unwilling to prescribe it. Within months of its approval, three FDA advisors resigned in protest, one calling aducanumab “the worst approval decision that the FDA has made that I can remember.” By the start of 2024, the drug had been pulled by its manufacturers.

Of course, with the benefit of hindsight and data from the public’s use of aducanumab, it is easy for us to tell that the FDA made the wrong decision then. But is there a better process that would have given the FDA the foresight to make the right decision, under limited information?

The FDA routinely has to evaluate novel drugs and treatments; medical and pharmaceutical products that can impact the wellbeing of millions of Americans. With stakes this high, the FDA is known to tread carefully: assembling different advisory, review, and funding committees providing diverse knowledge and expertise to assess the evidence and decide whether to approve a new drug, or not. As a federal agency, the FDA is also required to maintain scrupulous records that cover its decisions, and how those decisions are made.

The Impact of Voting Mechanisms on Decision Quality

Some of this data has been analyzed by Goizueta’s Tian Heong Chan, associate professor of information systems and operations management.
Together with Panos Markou of the University of Virginia’s Darden School of Business, Chan scrutinized 17 years’ worth of information, including detailed transcripts from more than 500 FDA advisory committee meetings, to understand the mechanisms and protocols used in FDA decision-making: whether committee members vote to approve products sequentially, with everyone in the room having a say one after another; or if voting happens simultaneously via the push of a button, say, or a show of hands. Chan and Markou also looked at the impact of sequential versus simultaneous voting to see if there were differences in the quality of the decisions each mechanism produced. Their findings are singular. It turns out that when stakeholders vote simultaneously, they make better decisions. Drugs or products approved this way are far less likely to be issued post-market boxed warnings (warnings issued by FDA that call attention to potentially serious health risks associated with the product, that must be displayed on the prescription box itself), and more than two times less likely to be recalled. The FDA changed its voting protocols in 2007, when they switched from sequentially voting around the room, one person after another, to simultaneous voting procedures. And the results are stunning. Tian Heong Chan, Associate Professor of Information Systems & Operation Management “Decisions made by simultaneous voting are more than twice as effective,” says Chan. “After 2007, you see that just 3.4% of all drugs and products approved this way end up being discontinued or recalled. This compares with an 8.6% failure rate for drugs approved by the FDA using more sequential processes—the round robin where individuals had been voting one by one around the room.” Imagine you are told beforehand that you are going to vote on something important by simply raising your hand or pressing a button. 
In this scenario, you are probably going to want to expend more time and effort in debating all the issues and informing yourself before you decide. Tian Heong Chan “On the other hand, if you know the vote will go around the room, and you will have a chance to hear how others’ speak and explain their decisions, you’re going to be less motivated to exchange and defend your point of view beforehand,” says Chan. In other words, simultaneous decision-making is two times less likely to generate a wrong decision as the sequential approach. Why is this? Chan and Markou believe that these voting mechanisms impact the quality of discussion and debate that undergird decision-making; that the quality of decisions is significantly impacted by how those decisions are made. Quality Discussion Leads to Quality Decisions Parsing the FDA transcripts for content, language, and tonality in both settings, Chan and Markou find evidence to support this. Simultaneous voting or decision-making drives discussions that are characterized by language that is more positive, more authentic, and more even in terms of expressions of authority and hierarchy, says Chan. What’s more, these deliberations and exchanges are deeper and more far-ranging in quality. We find marked differences in the tone of speech and the topics discussed when stakeholders know they will be voting simultaneously. There is less hierarchy in these exchanges, and individuals exhibit greater confidence in sharing their points of view more freely. Tian Heong Chan “We also see more questions being asked, and a broader range of topics and ideas discussed,” says Chan. In this context, decision-makers are also less likely to reach unanimous agreement. Instead, debate is more vigorous and differences of opinion remain more robust. Conversely, sequential voting around the room is typically preceded by shorter discussion in which stakeholders share fewer opinions and ask fewer questions. 
And this demonstrably impacts the quality of the decisions made, says Chan. Sharing a different perspective to a group requires effort and courage. With sequential voting or decision-making, there seems to be less interest in surfacing diverse perspectives or hidden aspects to complex problems. Tian Heong Chan “So it’s not that individuals are being influenced by what other people say when it comes to voting on the issue—which would be tempting to infer—rather, it’s that sequential voting mechanisms seem to take a bit more effort out of the process.” When decision-makers are told that they will have a chance to vote and to explain their vote, one after another, their incentives to make a prior effort to interrogate each other vigorously, and to work that little bit harder to surface any shortcomings in their own understanding or point of view, or in the data, are relatively weaker, say Chan and Markou. The Takeaway for Organizations Making High-Stakes Decisions Decision-making in different contexts has long been the subject of scholarly scrutiny. Chan and Markou’s research sheds new light on the important role that different mechanisms have in shaping the outcomes of decision-making—and the quality of the decisions that are jointly taken. And this should be on the radar of organizations and institutions charged with making choices that impact swathes of the community, they say. “The FDA has a solid tradition of inviting diversity into its decision-making. But the data shows that harnessing the benefits of diversity is contingent on using the right mechanisms to surface the different expertise you need to be able to see all the dimensions of the issue, and make better informed decisions about it,” says Chan. A good place to start? By a concurrent show of hands. Tian Heong Chan is an associate professor of information systems and operation management. he is available to speak about this topic - click on his con now to arrange an interview today.

Expert Perspective: Mitigating Bias in AI: Sharing the Burden of Bias When it Counts Most

Whether getting directions from Google Maps, personalized job recommendations from LinkedIn, or nudges from a bank for new products based on our data-rich profiles, we have grown accustomed to having artificial intelligence (AI) systems in our lives.

But are AI systems fair? The answer to this question, in short: not completely. Further complicating the matter is the fact that today’s AI systems are far from transparent.

Think about it: The uncomfortable truth is that generative AI tools like ChatGPT, based on sophisticated architectures such as deep learning or large language models, are fed vast amounts of training data which then interact in unpredictable ways. And while the principles of how these methods operate are well understood (at least by those who created them), ChatGPT’s decisions are likened to an airplane’s black box: They are not easy to penetrate.

So, how can we determine if “black box AI” is fair? Some dedicated data scientists are working around the clock to tackle this big issue. One of those data scientists is Gareth James, who also serves as the Dean of Goizueta Business School as his day job.

In a recent paper titled “A Burden Shared is a Burden Halved: A Fairness-Adjusted Approach to Classification,” Dean James, along with coauthors Bradley Rava, Wenguang Sun, and Xin Tong, has proposed a new framework to help ensure AI decision-making is as fair as possible in high-stakes decisions where certain individuals (for example, racial minority groups and other protected groups) may be more prone to AI bias, even without our realizing it. In other words, their new approach to fairness makes adjustments that work out better when some are getting short shrift from AI.

Gareth James became the John H. Harland Dean of Goizueta Business School in July 2022. Renowned for his visionary leadership, statistical mastery, and commitment to the future of business education, James brings vast and versatile experience to the role. His collaborative nature and data-driven scholarship offer fresh energy and focus aimed at furthering Goizueta’s mission: to prepare principled leaders to have a positive influence on business and society.

Unpacking Bias in High-Stakes Scenarios

Dean James and his coauthors set their sights on high-stakes decisions in their work. What counts as high stakes? Examples include hospitals’ medical diagnoses, banks’ credit-worthiness assessments, and state justice systems’ bail and sentencing decisions. On the one hand, these areas are ripe for AI interventions, with ample data available. On the other hand, biased decision-making here has the potential to negatively impact a person’s life in a significant way.

In the case of justice systems, in the United States, there’s a data-driven, decision-support tool known as COMPAS (which stands for Correctional Offender Management Profiling for Alternative Sanctions) in active use. The idea behind COMPAS is to crunch available data (including age, sex, and criminal history) to help determine a criminal-court defendant’s likelihood of committing a crime as they await trial. Supporters of COMPAS note that statistical predictions are helping courts make better decisions about bail than humans did on their own. At the same time, detractors have argued that COMPAS is better at predicting recidivism for some racial groups than for others. And since we can’t control which group we belong to, that bias needs to be corrected. It’s high time for guardrails.

A Step Toward Fairer AI Decisions

Enter Dean James and colleagues’ algorithm. Designed to make the outputs of AI decisions fairer, even without having to know the AI model’s inner workings, they call it “fairness-adjusted selective inference” (FASI). It works to flag specific decisions that would be better handled by a human being in order to avoid systemic bias. That is to say, if the AI cannot yield an acceptably clear (1/0 or binary) answer, a human review is recommended.
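The idea of handing unclear cases to a human can be illustrated with a toy sketch. This is not the FASI procedure from the paper; it is a simplified stand-in that assumes a model score between 0 and 1, a small set of labeled calibration cases per group, and a one-sided decision (either an automated "1" call or a referral). The group names, scores, labels, and function names below are all hypothetical.

```python
from typing import Dict, List, Optional, Tuple

ALPHA = 0.25  # acceptable error level, matching the 0.25 figure quoted in the article


def pick_threshold(scores: List[float], labels: List[int], alpha: float) -> Optional[float]:
    """Return the lowest cutoff t such that, among calibration cases scoring
    at or above t, the share whose true label is 0 is at most alpha.
    Returns None when no cutoff meets the target."""
    for t in sorted(set(scores)):
        selected = [y for s, y in zip(scores, labels) if s >= t]
        error_rate = selected.count(0) / len(selected)
        if error_rate <= alpha:
            return t
    return None


def decide(score: float, group: str, thresholds: Dict[str, Optional[float]]) -> str:
    """Make an automated '1' call only above the group's cutoff;
    everything else is referred to a human reviewer."""
    t = thresholds.get(group)
    if t is not None and score >= t:
        return "predict_1"
    return "human_review"


# Hypothetical calibration data: (model scores, true labels) for two groups.
calibration: Dict[str, Tuple[List[float], List[int]]] = {
    "A": ([0.9, 0.8, 0.7, 0.6, 0.4], [1, 1, 1, 0, 0]),
    "B": ([0.9, 0.8, 0.7, 0.6, 0.4], [1, 0, 1, 0, 0]),
}

# One cutoff per group, so the error target is met in each group separately.
thresholds = {g: pick_threshold(s, y, ALPHA) for g, (s, y) in calibration.items()}

print(thresholds)                      # {'A': 0.6, 'B': 0.9}
print(decide(0.75, "A", thresholds))   # predict_1
print(decide(0.75, "B", thresholds))   # human_review
```

In this toy data the model is less reliable for group B, so the same score of 0.75 is accepted automatically for group A but sent to a human for group B. That is the trade the article describes: more cases go to review, and in exchange the error rate among automated decisions stays under the same limit for each group.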
To test the results for their “fairness-adjusted selective inference,” the researchers turn to both simulated and real data. For the real data, the COMPAS dataset enabled a look at predicted and actual recidivism rates for two minority groups, as seen in the accompanying charts.

In those charts, the researchers set an “acceptable level of mistakes” (seen as the dotted line) at 0.25 (25%). They then compared “minority group 1” and “minority group 2” results before and after applying their FASI framework. Especially if you were born into “minority group 2,” which graph seems fairer to you?

Professional ethicists will note there is a slight dip in overall accuracy, as seen in the green “all groups” category. And yet the treatment of the two groups is fairer. That is why the researchers titled their paper “A Burden Shared is a Burden Halved.”

Practical Applications for the Greater Social Good

“To be honest, I was surprised by how well our framework worked without sacrificing much overall accuracy,” Dean James notes. By selecting cases where human beings should review a criminal history, a credit history, or medical charts, AI discrimination that would have significant quality-of-life consequences can be reduced.

Reducing protected groups’ burden of bias is also a matter of following the law. For example, in the financial industry, the United States’ Equal Credit Opportunity Act (ECOA) makes it “illegal for a company to use a biased algorithm that results in credit discrimination on the basis of race, color, religion, national origin, sex, marital status, age, or because a person receives public assistance,” as the Federal Trade Commission explains on its website. If AI-powered programs fail to correct for AI bias, the company utilizing them can run into trouble with the law. In these cases, human reviews are well worth the extra effort for all stakeholders.

The paper grew from Dean James’ ongoing work as a data scientist when time allows. “Many of us data scientists are worried about bias in AI and we’re trying to improve the output,” he notes. And as new versions of ChatGPT continue to roll out, “new guardrails are being added, some better than others.”

“I’m optimistic about AI,” Dean James says. “And one thing that makes me optimistic is the fact that AI will learn and learn. There’s no going back. In education, we think a lot about formal training and lifelong learning. But then that learning journey has to end,” Dean James notes. “With AI, it never ends.”

Gareth James is the John H. Harland Dean of Goizueta Business School. If you're looking to connect with him, simply click on his icon now to arrange an interview today.

With Rise in US Autism Rates, Florida Tech Expert Clarifies What We Know About the Disorder

A new report from the Centers for Disease Control and Prevention (CDC) found that an estimated 1 in 31 U.S. children has autism; that's about a 15% increase from a 2020 report, which estimated 1 in 36. The latest numbers come from the CDC’s Autism and Developmental Disabilities Monitoring (ADDM) Network, which tracked diagnoses in 2022 among 8-year-old children.

Autism spectrum disorder (ASD) is a neurological disorder that refers to a broad range of conditions affecting social interaction. People with autism may experience challenges with social skills, repetitive behaviors, speech and nonverbal communication.

The news has experts like Florida Tech's Kimberly Sloman, Ph.D., weighing in on the matter. She noted that the definition of autism was expanded to include mild cases, which could explain the increase.

“Research shows that increased rates are largely due to increased awareness and changes to diagnostic criteria. Much of the increase reflects individuals who have fewer support needs, women and girls and others who may have been misdiagnosed previously,” said Sloman.

Her insight follows federal health secretary Robert F. Kennedy Jr.'s recent declaration, in which he vowed to conduct further studies to identify environmental factors that could cause the disorder. In his remarks, he also miscategorized autism as a “preventable disease,” prompting scrutiny from experts and media attention.

“Autism destroys families,” Kennedy said. “More importantly, it destroys our greatest resource, which is our children. These are children who should not be suffering like this.”

Kennedy described autism as a “preventable disease,” although researchers and scientists have identified genetic factors that are associated with it. Autism is not considered a disease, but a complex disorder that affects the brain. Cases range widely in severity, with symptoms that can include delays in language, learning, and social or emotional skills. Some autistic traits can go unnoticed well into adulthood.

Those who have spent decades researching autism have found no single cause. Besides genetics, scientists have identified various possible factors, including the age of a child’s father, the mother’s weight, and whether she had diabetes or was exposed to certain chemicals.

Kennedy said his wide-ranging plan to determine the cause of autism will look at all of those environmental factors, and others. He had previously set a September deadline for determining what causes autism, but said Wednesday that by then, his department will determine at least “some” of the answers.

The effort will involve issuing grants to universities and researchers, Kennedy said. He said the researchers will be encouraged to “follow the science, no matter what it says.”

April 17 - Associated Press

Sloman emphasized that experts are confident that autism has a strong genetic component, meaning there's an element of the disorder that may not be preventable. However, scientists are still working to understand the full scope of the disorder, and much is still unknown.

“We know that there’s a strong genetic component for autism, but environmental factors may interact with genetic susceptibility,” Sloman said. “This is still not well understood.”

Kimberly Sloman’s research interests include best practices for treating individuals with autism spectrum disorder (ASD). She studies the assessment and treatment of problem behavior such as stereotypy, individualized skill assessments and generalization of treatment effects.

Are you covering this story or looking to know more about autism and the research behind the disorder? Let us help. Kimberly is available to speak with media about this subject. Simply click on her icon now to arrange an interview today.